Early detection and personalized academic support using a predictive chatbot for student success

- Academic Editor

- Feng Gu

- Subject Areas

- Agents and Multi-Agent Systems, Artificial Intelligence, Computer Education, Data Mining and Machine Learning

- Keywords

- Artificial intelligence, AI chatbot, Early intervention, Educational technology, Personalized learning, Risk prediction, Student engagement

- Copyright

- © 2026 Arévalo-Cordovilla and Peña

- Licence

- This is an open access article distributed under the terms of the Creative Commons Attribution License, which permits unrestricted use, distribution, reproduction and adaptation in any medium and for any purpose provided that it is properly attributed. For attribution, the original author(s), title, publication source (PeerJ Computer Science) and either DOI or URL of the article must be cited.

- Cite this article

- 2026. Early detection and personalized academic support using a predictive chatbot for student success. PeerJ Computer Science 12:e3656 https://doi.org/10.7717/peerj-cs.3656

Abstract

This study presents the preliminary validation of an integrated system that combines predictive analytics with an artificial intelligence (AI)-powered chatbot to address academic failure and dropout in higher education. The system leverages a high-performance Light Gradient Boosting Machine (LightGBM) predictive model, previously validated to achieve state-of-the-art accuracy (Area Under the Curve (AUC) = 0.953), to identify at-risk students at an early stage. The intervention component is an AI chatbot powered by the GPT-4o-mini large language model (LLM), which operates via WhatsApp® to deliver personalized, real-time support using context-aware semantic search. A pilot implementation with 108 students demonstrated the system’s viability, with 39 at-risk students identified and engaged in 742 interactions. Key operational metrics included a high student response rate of 89.7%, an initial intervention time of 6.6 seconds (s), and an average chatbot response time of 12.9 s. These findings confirm the technical feasibility of fusing machine learning with conversational AI to create timely, scalable, and personalized interventions, establishing a foundation for improving student success and retention in the future.

Introduction

Globally, high dropout rates and academic underperformance in higher education persist, undermining both students’ success and institutional goals (Al-Abdullatif, Al-Dokhny & Drwish, 2023; Thomas et al., 2024). Multiple interrelated factors, including academic struggles, financial pressure, and mental health challenges, contribute to student attrition, making targeted interventions imperative to improve student well-being and academic performance (Dekker et al., 2020). Although data-driven strategies are promising, a significant gap remains in translating predictive insights into timely and effective support.

Early warning systems can predict which students are at risk (Larrabee Sønderlund, Hughes & Smith, 2019), and educational chatbots can provide personalized assistance (Yang & Evans, 2019). However, these systems often operate independently. The independent operation of predictive analytics and intervention tools diminishes their overall impact, creating a need for systems that seamlessly connect early detection with immediate automated support (Abd-alrazaq et al., 2019). This disconnection is often exacerbated by fragmented data ecosystems within institutions, which hinder a holistic understanding of each student’s challenges (Cano & Leonard, 2019).

While prior studies have underscored the promise of artificial intelligence (AI) chatbots, the challenge of tightly integrating predictive analytics with automated intervention systems remains. By uniting these elements, institutions can shift from reactive to proactive strategies, ensuring that predictive insights directly inform timely support. This study responds to this need by developing and validating an automated intervention system that combines a predictive model based on the high-performance Light Gradient Boosting Machine (LightGBM) algorithm with an AI-powered chatbot deployed through the messaging platform WhatsApp®. The predictive model, which was previously validated in a peer-reviewed study to achieve state-of-the-art accuracy, accurately identified at-risk students early in their first academic term. The AI-powered chatbot then delivers personalized academic guidance, performance insights, and targeted resources using natural language processing.

To evaluate the initial viability of this integrated approach, this pilot study addressed the following research questions:

RQ1: Is it technically feasible to deploy an integrated system that identifies at-risk students and delivers a personalized support intervention in near real-time (i.e., under one minute)?

RQ2: What is the level of initial engagement from at-risk students, measured by their response rate to the system’s proactive outreach?

RQ3: What are the operational performance characteristics (e.g., chatbot response time, token processing) of the system under real-world usage conditions during the pilot implementation?

Addressing these questions helps validate the foundational viability of the system, addressing a critical gap in the literature and providing a template for the future development of holistic, learner-centered, and technology-enhanced educational environments.

Related work

Early warning systems and predictive analytics

Early interventions are particularly critical in online and blended learning environments, where timely support can prevent students from falling behind. Integrating machine learning-based predictive analytics helps to identify at-risk students before problems escalate (Arévalo-Cordovilla & Peña, 2024). For instance, a study at a large public university showed that referring struggling economics students to a Student Success Center improved their final exam scores, emphasizing the value of early targeted interventions (Gordanier, Hauk & Sankaran, 2019). Moreover, the advent of smart campus technologies, which leverage AI and data analytics, has enhanced the precision of early warning systems. These tools collect and analyze various data points to generate tailored recommendations that improve learning outcomes (Villegas-Ch, Arias-Navarrete & Palacios-Pacheco, 2020). Similar efforts are underway in engineering education, where analytic frameworks have been developed to identify potential dropout situations, illustrating the promise of predictive models for early risk detection in specific programs (Mussida & Lanzi, 2022).

As access to higher education expands, understanding and supporting diverse student populations has become increasingly urgent. Institutions face new challenges in adapting to various needs while maintaining academic standards (Kocsis & Molnár, 2024). Research has shown that social identity profoundly affects academic outcomes. Students experiencing “disidentification” (i.e., negative perceptions of their role as students) tend to underperform and drop out at higher rates, an effect particularly pronounced among those from lower social class backgrounds (Matschke, de Vreeze & Cress, 2023).

The role of conversational agents in education

Traditional interventions often rely on generic approaches that fail to accommodate learners’ diverse backgrounds and needs. In response, there is growing interest in personalized strategies facilitated by conversational agents, such as chatbots (Gupta et al., 2019; Liu et al., 2023). Chatbots have rapidly evolved from administrative tools that answer questions about courses and deadlines (Okonkwo & Ade-Ibijola, 2021; Al-Abdullatif, Al-Dokhny & Drwish, 2023) to advanced educational support roles. Currently, chatbots provide personalized feedback, tutoring, and assessment by adapting to specific learning styles (Yang & Evans, 2019; Pérez, Daradoumis & Puig, 2020; Puertas, Mariscal-Vivas & Martínez-Requejo, 2023).

Evidence from various educational domains supports the growing impact of these tools on learning. For example, in nursing education, an AI-driven chatbot enhanced students’ interest in learning and independent study skills (Han, Park & Lee, 2022), while a similar tool at a Vietnamese university improved student-centered learning and engagement (Nghi et al., 2024). In engineering, chatbots have been used since 2008 in subjects such as thermodynamics and have been shown to facilitate knowledge acquisition, enhance motivation, and foster self-directed learning (Maladzhi et al., 2023; Bravo & Cruz-Bohorquez, 2024). These pedagogical conversational agents (PCAs) are more effective when a “common ground” is established with the learner (Tolzin et al., 2023). Furthermore, gamified chatbots have been shown to outperform traditional versions in boosting academic performance and motivation (Xu et al., 2024), and efficient designs can yield high accuracy in student interactions (Elragal et al., 2024) and enhance learning outcomes through personalized feedback (Ashok et al., 2021).

The gap: integrating prediction with intervention

The integration of predictive models with AI-based chatbots represents a significant advancement in the field of educational technology. Predictive analytics can identify students at risk of underperformance or dropout well before these issues manifest (Arévalo-Cordovilla & Peña, 2024). When coupled with AI-driven chatbots capable of proactive engagement, these tools facilitate early, individualized intervention (Ma et al., 2024). However, implementation challenges persist, such as privacy concerns and ethical considerations, requiring careful evaluation to ensure that these approaches effectively support diverse student groups (Wang et al., 2023).

Although early warning systems have shown potential for targeted interventions (Buschetto Macarini et al., 2019; Baneres, Rodríguez-Gonzalez & Serra, 2019), and AI-based personalization holds promise (Maghsudi et al., 2021), a persistent gap exists in the literature. In the era of digital transformation, many studies on AI chatbots have focused on student perceptions rather than integrating early predictive modeling (Monrad Schei, Møgelvang & Ludvigsen, 2024). Similarly, research on tools such as MoodleBot highlights improved engagement but stops short of linking these improvements to tailored interventions based on predictive insights (Neumann et al., 2025). Addressing this gap is critical for realizing the full potential of AI-based tools in comprehensive educational frameworks.

Materials and Methods

Design of the study

This study was structured as a pilot investigation to assess the technical feasibility and initial user engagement of an integrated early alert and automated intervention system. The research design follows a quantitative, single-group approach focused on operational metrics, such as identification coverage, system latency, and student response rate, rather than pedagogical outcomes. Because the pilot was conducted before the final course results were available, predictive accuracy could not be evaluated. Instead, system reliability and user responsiveness were used as proxies for functional validity.

The primary goal of this phase was to establish proof-of-concept and determine whether the system could operate reliably in a real-world educational setting and successfully engage students, serving as a prerequisite for subsequent studies that will integrate academic outcome data for full predictive validation (Monrad Schei, Møgelvang & Ludvigsen, 2024).

Consequently, this study did not include a control group or a comparison with human tutor interventions. Its scope is intentionally limited to answering foundational questions regarding system performance and student receptiveness. Therefore, a pilot test controlled by the researchers was conducted to collect the necessary operational metrics for preliminary validation.

Objective of the study

To design, implement, and preliminarily validate an automated educational intervention system that combines a previously validated, high-performance predictive model with an AI-driven chatbot to identify at-risk students early and provide them with personalized tutoring.

System functionality details

The developed system consists of two main components: a predictive model and an intervention chatbot.

Predictive model

The system’s ability to provide early support is based on a predictive model designed to identify students at academic risk. In the context of this study, an ‘at-risk student’ is operationally defined as any learner for whom the predictive model forecasts a ‘FAILED’ outcome for the course. This identification is performed midway through the academic term using performance data from the first partial assessment as predictors. This approach aligns with early warning systems that leverage predictive analytics to identify students who need timely support, enabling proactive rather than reactive interventions (Arévalo-Cordovilla & Peña, 2024).

The predictive model is based on the best-performing algorithm from a previously published comparative study that evaluated seven machine learning models on a cohort of 2,225 students from the same institution (Arévalo-Cordovilla & Peña, 2025a). That study concluded that the LightGBM model provided the optimal balance of high accuracy and stability (Area Under the Curve (AUC) = 0.953, F1-score = 0.950), significantly outperforming a more complex and unstable stacking ensemble. Therefore, our system employs the validated LightGBM model. Its function is to predict, at the midpoint of the term, whether a student will ultimately pass or fail a subject, thereby codifying the ‘at-risk’ status that triggers the automated intervention.

The predictive model operates as an independent system that connects to the institutional database and is not directly integrated into the Moodle platform. The prediction process is triggered by an automated script (cron job) that runs periodically on the server. Once the grades for the first partial assessment are officially closed and recorded in the academic management system for a given course, the script extracts these key performance indicators (e.g., exam scores and continuous assessment grades) as input features for the model. The model then generates a ‘FAILED’ or ‘PASS’ prediction. The results populate a table that contains the student data structure for that semester and period, including the following fields:

enrollment id

status (PASS or FAILED)

status_bot (1 if the chatbot has already contacted the student, 0 if it has not)

prediction_date

notification_date

An automated process (cron job) runs periodically to identify students with status_bot equal to zero and status equal to FAILED. These students are considered at risk and are automatically contacted by the chatbot via WhatsApp®. Once the message is sent, the status_bot field is updated to one to prevent further contact. The automated script (cron job) used to trigger this process is provided in Supplemental Material S1.
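The polling logic described above can be sketched as follows. This is a minimal illustration, not the script from Supplemental Material S1: it uses an in-memory SQLite database in place of the institutional PostgreSQL database, and the table name `prediction`, the function `notify_at_risk`, and the `send_message` callback are hypothetical names chosen for the example.

```python
import sqlite3
from datetime import datetime, timezone

# Hypothetical table mirroring the fields listed above.
SCHEMA = """
CREATE TABLE prediction (
    enrollment_id     INTEGER PRIMARY KEY,
    status            TEXT NOT NULL CHECK (status IN ('PASS', 'FAILED')),
    status_bot        INTEGER NOT NULL DEFAULT 0,
    prediction_date   TEXT NOT NULL,
    notification_date TEXT
)
"""


def notify_at_risk(conn, send_message):
    """One polling pass: contact every FAILED student not yet contacted."""
    rows = conn.execute(
        "SELECT enrollment_id FROM prediction "
        "WHERE status = 'FAILED' AND status_bot = 0 "
        "ORDER BY enrollment_id"
    ).fetchall()
    contacted = []
    for (enrollment_id,) in rows:
        send_message(enrollment_id)  # stand-in for the WhatsApp outreach
        conn.execute(
            "UPDATE prediction SET status_bot = 1, notification_date = ? "
            "WHERE enrollment_id = ?",
            (datetime.now(timezone.utc).isoformat(), enrollment_id),
        )
        # Commit per student so an interrupted run does not re-contact
        # students who were already messaged.
        conn.commit()
        contacted.append(enrollment_id)
    return contacted


def demo():
    conn = sqlite3.connect(":memory:")
    conn.execute(SCHEMA)
    conn.executemany(
        "INSERT INTO prediction (enrollment_id, status, prediction_date) "
        "VALUES (?, ?, ?)",
        [(1, "FAILED", "2025-02-02"),
         (2, "PASS", "2025-02-02"),
         (3, "FAILED", "2025-02-02")],
    )
    sent = []
    first = notify_at_risk(conn, sent.append)   # contacts students 1 and 3
    second = notify_at_risk(conn, sent.append)  # nothing left to contact
    return first, second, sent
```

Calling `demo()` runs two consecutive passes. The second pass finds no rows, which is the idempotence property that the status_bot flag is meant to guarantee.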

Intervention chatbot

The chatbot is the system’s proactive component, which is designed to translate predictive insights into personalized support. It communicates with students via WhatsApp®, informing them of the prediction of possible non-approval and offering tailored assistance.

Once a student is flagged as ‘at-risk’ by the predictive model (predicted status = FAILED and status_bot = 0), the chatbot automatically initiates a support conversation. This process is managed by a background automation script that triggers an initial outreach message using a predefined template. The first contact message is strategically designed to (a) acknowledge the academic challenge detected in a supportive tone, (b) offer immediate access to resources for review, and (c) open a conversational channel for further academic assistance.

Once the chatbot sends this initial message, the system updates the database (status_bot = 1) to avoid duplicate notifications. Subsequent interactions are driven by student responses and managed by the chatbot’s core functionalities, leveraging the OpenAI® API with the GPT-4o-mini model, function calling, and file-search techniques. Each subject had an associated syllabus, which was vectorized to provide contextualized responses based on the academic content and learning objectives defined by the instructor. This end-to-end process ensures that predictive alerts are translated into timely and context-aware interventions rather than passive notifications.

Chatbot functionalities

Personalized Interaction: Students can interact with the chatbot as if it were a tutor, making inquiries about course topics.

Grade Consultation: Access to current grades and feedback on previous activities.

Pending Activities: Information about tasks and activities that require immediate attention.

Academic Advising: Guidance on how to improve performance and take advantage of additional resources.
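Functionalities such as grade consultation and pending activities map naturally onto function calling, in which the language model returns the name of a function and its arguments as a JSON string, and the backend executes the call. The sketch below shows only that backend dispatch step, under stated assumptions: the function names, the `enrollment_id` parameter, and the in-memory data are hypothetical, and the sketch is written in Python for brevity although the pilot backend runs on Node.js.

```python
import json

# Hypothetical in-memory data standing in for the institutional database.
GRADES = {101: {"partial_1": 5.5}}
PENDING = {101: ["Forum 2", "Essay draft"]}


def get_grades(enrollment_id):
    """Return the recorded grades for one enrollment (empty if unknown)."""
    return GRADES.get(enrollment_id, {})


def get_pending_activities(enrollment_id):
    """Return the activities that still require the student's attention."""
    return PENDING.get(enrollment_id, [])


# Registry of the functions the model is allowed to request.
TOOLS = {
    "get_grades": get_grades,
    "get_pending_activities": get_pending_activities,
}


def dispatch(tool_call):
    """Execute one model-requested call and return a JSON string result."""
    name = tool_call["name"]
    if name not in TOOLS:
        return json.dumps({"error": f"unknown tool: {name}"})
    args = json.loads(tool_call["arguments"])  # arguments arrive as JSON text
    return json.dumps(TOOLS[name](**args))
```

Restricting execution to an explicit registry means that a malformed or unexpected function name produces an error payload rather than arbitrary code execution.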

Tools and technologies used

The development and implementation of the system were performed using the following technologies and dependencies:

Node.js v20.18.0: JavaScript runtime environment used for the development of the chatbot backend.

Baileys (TypeScript/JavaScript WhatsApp Web API): Library for interacting with the WhatsApp Web API and enabling the sending and receiving of automated messages.

Main Dependencies:

@hapi/boom: Structured management of HTTP errors.

@prisma/client and prisma: ORM for interacting with the PostgreSQL database.

@whiskeysockets/baileys: Interface for WhatsApp Web API.

dotenv: Environment variable management.

pino and pino-pretty: Logging and formatting for system performance monitoring.

qrcode: Generation of QR codes for WhatsApp authentication.

OpenAI API: Used to integrate generative AI capabilities using the GPT-4o-mini model, which enables natural and contextual responses.

Syllabus Vectorization: Processing and transformation of syllabi into vectors using semantic search algorithms, which facilitates precise and contextualized responses.
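The idea behind syllabus vectorization and semantic search can be illustrated with a toy retrieval example. This sketch is a deliberately simplified stand-in: it uses bag-of-words count vectors and cosine similarity, whereas the deployed system relies on the file-search capability of the OpenAI API, which works with learned embeddings. The syllabus chunks and function names are hypothetical.

```python
import math
import re
from collections import Counter


def vectorize(text):
    """Turn text into a sparse word-count vector (toy embedding)."""
    return Counter(re.findall(r"[a-z]+", text.lower()))


def cosine(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm = (math.sqrt(sum(v * v for v in a.values()))
            * math.sqrt(sum(v * v for v in b.values())))
    return dot / norm if norm else 0.0


def search(query, chunks, top_k=1):
    """Return the top_k chunks most similar to the query."""
    q = vectorize(query)
    ranked = sorted(chunks, key=lambda c: cosine(q, vectorize(c)), reverse=True)
    return ranked[:top_k]


# Hypothetical syllabus fragments for illustration only.
SYLLABUS_CHUNKS = [
    "Unit 1: digital competencies for teachers and learners",
    "Unit 2: technological strategies for online assessment",
    "Grading policy: the first partial assessment counts toward the final grade",
]
```

In a retrieval-augmented setup, the chunks returned by `search` would be passed to the language model as context, so that answers stay grounded in the instructor's syllabus.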

Implementation procedures

Development of the predictive model

The best-performing model from a previously published comprehensive study was used: a LightGBM model, which is a state-of-the-art gradient boosting framework (Arévalo-Cordovilla & Peña, 2025a). This model was selected because of its proven high accuracy in predicting academic performance. As part of the validated methodology from that study, the synthetic minority oversampling technique (SMOTE) was applied to balance the classes and improve the detection of at-risk students.
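For readers unfamiliar with SMOTE, its core step is interpolation: each synthetic minority sample is placed at a random point on the line segment between a real minority sample and one of its nearest minority neighbours. The sketch below illustrates only this step in pure Python. It is not the implementation used in the cited study, which presumably relied on a library version, and the two features (exam score and continuous assessment grade) are hypothetical.

```python
import random


def smote_like(minority, n_new, k=2, seed=0):
    """Generate n_new synthetic points by SMOTE-style interpolation."""
    rng = random.Random(seed)
    synthetic = []
    for _ in range(n_new):
        base = rng.choice(minority)
        # k nearest minority neighbours of the base point, excluding itself.
        neighbours = sorted(
            (p for p in minority if p is not base),
            key=lambda p: sum((a - b) ** 2 for a, b in zip(p, base)),
        )[:k]
        other = rng.choice(neighbours)
        gap = rng.random()  # position along the segment, in [0, 1)
        synthetic.append(
            tuple(a + gap * (b - a) for a, b in zip(base, other))
        )
    return synthetic


# Hypothetical minority-class (FAILED) students: (exam score, coursework grade).
FAILED_STUDENTS = [(4.0, 5.0), (5.0, 4.5), (3.5, 6.0)]
```

Because every synthetic point lies between two real minority samples, the oversampled class stays inside the region already occupied by at-risk students rather than duplicating existing rows.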

Chatbot integration

The chatbot was developed following these steps.

- Setting up the Development Environment: Installation of Node.js and all necessary dependencies, establishing a favorable environment for development and testing.

- Connection with WhatsApp: Using the Baileys library, a secure connection was established with WhatsApp Web through QR code authentication, which enabled messages to be sent and received.

- Implementation of Key Functionalities: The main features of the chatbot were coded, including integration with the predictive model, retrieval of student data, personalized interactions, and provision of academic advice.

  (a) Automated message sending: The chatbot is programmed to send messages to at-risk students identified by the predictive model.

  (b) Function calling: Development of specific functions that allow the chatbot to access and provide personalized information, such as grades and pending activities.

  (c) Syllabus file search: Integration of a semantic search system that uses vectorized course syllabi to respond to questions about academic content.

- State Management: Implementation of logic to update the status_bot field in the database, ensuring that each student is contacted only once per at-risk subject.

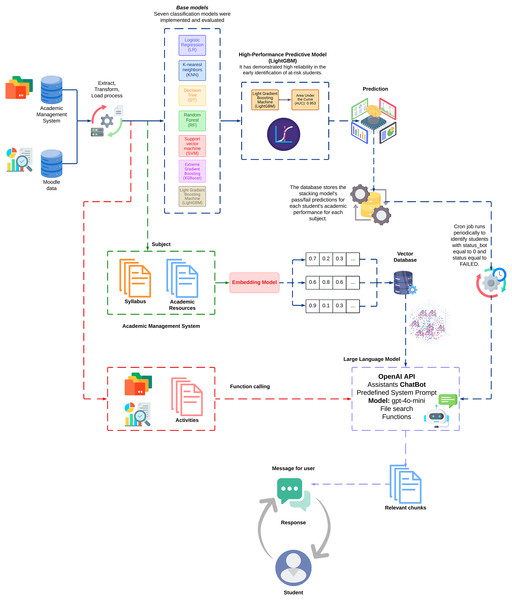

Chatbot interaction flow

To provide a comprehensive understanding of the chatbot’s operational structure, the following flowchart illustrates the sequential and interconnected processes that drive its functionality. The diagram represents the end-to-end flow, from the identification of at-risk students by the predictive model to the delivery of personalized academic support.

Figure 1 shows a flow diagram that encapsulates the operational logic of the chatbot.

Figure 1: Chatbot operational flow.

Data, code, and schema availability

The full source code for the chatbot implementation, including the Node.js backend, the cron job scripts used for data extraction and prediction, and the complete SQL database schema, is openly available on Zenodo (Arévalo-Cordovilla & Peña, 2025b).

In accordance with institutional data protection policies and the sensitive nature of student academic records, the original student-level dataset used in this study cannot be shared, even in an anonymized form. However, the provided database schema and example configuration files are sufficient for other researchers to adapt the code to their institutional data while preserving student privacy.

Results

Interaction and general use of the chatbot

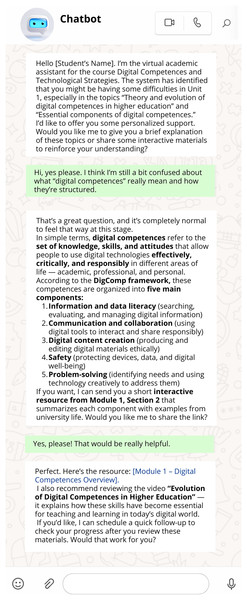

During the 27-day trial period, the chatbot was successfully integrated into the Digital Competencies and Technological Strategies course (Master’s in Education, Cohort II-2024, Parallel B3: N = 108 students). The system identified and contacted 39 at-risk students, resulting in 742 messages being exchanged (355 sent by the chatbot and 387 by users). This high level of interaction indicates significant student participation. To illustrate the nature of these conversations, a typical anonymized interaction is shown in Fig. 2.

Figure 2: Example of an anonymized interaction between the chatbot and a student.

The chatbot demonstrated a fast response time with an average generation time of 12.9 s per response, allowing smooth conversations without delays. The chatbot processed more than 1.36 million tokens during the pilot test, without any issues. Notably, 100% of at-risk students were contacted by the chatbot, achieving full intervention.

Overall, these results suggest that integrating the chatbot led to a high level of student engagement and that the system could sustain frequent interactions with short response times, which is a critical requirement for an effective educational conversational agent in real-world settings. This is further supported by the data presented in Table 1, which provides a summary of the key performance metrics.

| Metric | Value | Details |
|---|---|---|
| Analysis period | 27 days | From 02/02/2025 to 02/28/2025 |
| Total chatbot messages | 355 | Daily average: 16.1 |
| Total user messages | 387 | Daily average: 17.6 |
| Average input tokens | 3,494.6 | Range: 980.2–9,422.6 |
| Average output tokens | 171.4 | Range: 48.5–372.5 |
| Response time | 12,897.6 ms | Range: 5,342.7–38,600.0 ms |
| Generation speed | 180.17 ms/token | Total tokens processed: 1,360,587 |
| Initial response time | 0.11 min | Median: 0.09 min |
| User response rate | 89.7% | 35 out of 39 |
| User response time | 5.92 h | For 35 responses received |
| Text messages usage | 370 (95.6%) | Average: 15.4 per hour |
| Audio messages usage | 17 (4.4%) | Average: 0.7 per hour |
| Peak usage hour | 03:00 | 52 messages (0.0% audio) |
| Message discrepancy | 32 messages | 8.3% messages without response |
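Several of the percentages in Table 1 can be re-derived from the raw counts reported in the same table. The short script below does so as a consistency check. It assumes that the modality shares and the message discrepancy are expressed relative to the 387 user messages, which is the reading that reproduces the reported figures. The variable names are illustrative.

```python
# Raw counts taken from Table 1.
CHATBOT_MESSAGES = 355
USER_MESSAGES = 387
CONTACTED = 39
RESPONDED = 35
TEXT_MESSAGES = 370
AUDIO_MESSAGES = 17
INITIAL_RESPONSE_MIN = 0.11


def pct(part, whole):
    """Percentage of part in whole, rounded to one decimal place."""
    return round(100 * part / whole, 1)


summary = {
    "total_messages": CHATBOT_MESSAGES + USER_MESSAGES,
    "response_rate_pct": pct(RESPONDED, CONTACTED),
    "text_share_pct": pct(TEXT_MESSAGES, USER_MESSAGES),
    "audio_share_pct": pct(AUDIO_MESSAGES, USER_MESSAGES),
    "message_discrepancy": USER_MESSAGES - CHATBOT_MESSAGES,
    "discrepancy_pct": pct(USER_MESSAGES - CHATBOT_MESSAGES, USER_MESSAGES),
    "initial_response_s": round(INITIAL_RESPONSE_MIN * 60, 1),
}
```

The resulting values (742 total messages, 89.7% response rate, 95.6% text, 4.4% audio, 32 unanswered messages or 8.3%, and 6.6 s initial response time) agree with the figures reported in the table and the text.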

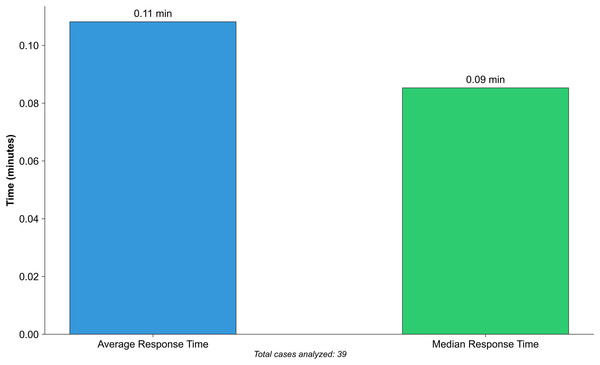

Preventive intervention time

The chatbot demonstrated excellent performance in terms of the speed of intervention after the first alert signal. The average time between the automatic detection of an at-risk student and the chatbot’s first support message was 0.11 min (~6.6 s), with a median of 0.09 min (~5.4 s). This near-instantaneous response means that, upon identifying a student with potential difficulty, the system acts within seconds, providing timely assistance, as shown in Fig. 3. The small difference between the mean and median suggests that there were virtually no atypical delays in the interventions, which were consistently rapid in all cases.

Figure 3: Early intervention response time.

In our study, the chatbot achieved reaction times well below one minute, comfortably meeting the near real-time threshold set in RQ1. Therefore, our results confirm that a rapid preventive intervention through chatbots is feasible and potentially valuable in addressing academic issues before they escalate, aligning with best practices for early warning systems in education.

Response rate to initial intervention

The response rate of the students to the first contact with the chatbot was remarkably high. Of the 39 students who were initially contacted, 35 responded to the initial message, resulting in an 89.74% response rate for the first stage. In other words, nearly nine out of ten students engaged in conversations with the agent following the initial intervention, demonstrating a high level of receptiveness and commitment from the users. The average response time for students to respond to the first message was approximately 5.9 h. This indicates that while most did not respond immediately, many did so on the same day they received the initial notification, which is reasonable given that messages could have arrived outside class hours or at unexpected times.

Notably, only 10.26% of the students did not respond to the chatbot on their first attempt, which is a considerably low percentage compared with other automated interventions. The lack of an initial response is often an indication that something is failing in the design or relevance of the contact message. In this case, the low level of non-response suggests that the chatbot’s initial message successfully captured students’ attention and overcame potential adoption barriers. This indicates that the initial communication strategy was effective in motivating students to engage.

Establishing this communication channel is a crucial step, as a system that fails to attract student participation is unlikely to have a positive effect on student performance. The fact that a vast majority of students voluntarily interacted with the chatbot from their first contact demonstrates that chatbots can be viable mediators in educational interventions, aligning with studies that have shown their high acceptance in providing personalized and relevant support to at-risk students.

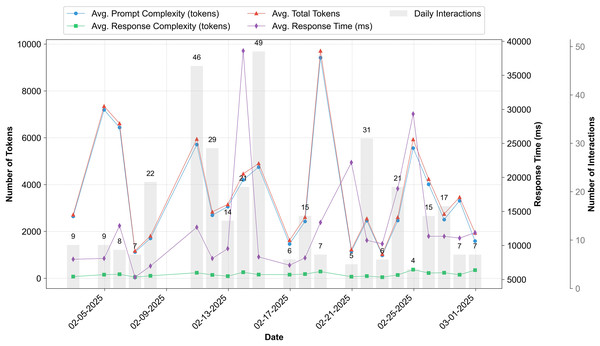

Chatbot efficiency

The collected efficiency data showed that the chatbot exhibited strong technical performance throughout the pilot test. On average, the student queries contained approximately 3,495 tokens (model-specific tokens as processed by the OpenAI API), whereas the chatbot responses averaged approximately 171 tokens. This indicates that chatbots can process complex queries and provide concise, focused answers. The average response time was approximately 12.9 s per query, even with variations in the daily volume. Throughout the trial period, the chatbot handled 355 interactions without any noticeable decrease in response speed and processed more than 1.36 million tokens. No significant increases in latency were observed, even on high-usage days, suggesting that the system scaled effectively during demand peaks. The technical performance indicators demonstrated that the chatbot was robust and reliable in real-world settings. It can maintain low response times even when handling large amounts of information and complex queries, thereby demonstrating the efficiency of its design. This finding matters because the technical challenge of maintaining AI performance under real-world conditions, which is essential for intervention scalability, is often underestimated. In our case, the chatbot operated in a stable and effective manner, consistent with previous reports of well-designed conversational systems that achieved high-quality interactions with students.

In summary, the results indicate that the chatbot is not only theoretically viable for providing personalized support but also meets the practical operational efficiency requirements necessary for sustainable integration into real educational environments (Fig. 4).

Figure 4: Chatbot efficiency metrics over time.

Usage patterns and communication modality

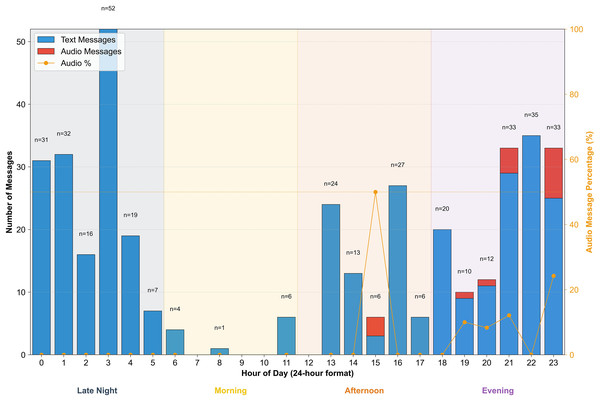

Regarding communication methods, students showed a clear preference for text-based over audio communication. Of the total recorded interactions, 95.6% were text messages (370 messages), compared with 4.4% for voice messages (17 messages). This indicates that written communication is the primary channel for most users, likely because of the familiarity and convenience of text messaging during online learning. Although the option of sending voice messages was available, its use was limited to specific cases, suggesting that students predominantly chose to type their queries and read the chatbot responses. This result aligns with expectations in educational chatbot environments, where interactions typically occur primarily through text, highlighting that written language remains the preferred medium for immediate academic support.

In addition, significant temporal patterns emerged in chatbot usage, highlighting its role in supporting asynchronous study. The distribution of messages was not uniform, with substantial activity during non-traditional working hours. Specifically, 157 messages were exchanged during the early morning hours (between 12:00 AM and 5:59 AM). The highest number of interactions occurred at 3:00 AM, with a peak of 52 messages recorded in that hour. Crucially, this activity was not driven by a single individual but was generated by 12 distinct students, representing nearly a third (30.8%) of the 39 students contacted by the chatbot. Furthermore, this late-night usage was a recurring pattern, with interactions at this specific hour recorded on seven different days during the 27-day trial. This finding strongly suggests that the chatbot fills a critical support gap by providing assistance during key independent study times when traditional academic resources are unavailable.

This suggests that many students took advantage of the chatbot’s 24/7 availability to seek assistance outside regular academic hours, possibly while studying independently or working on assignments late at night. The ability to access online support at flexible times appears to reinforce students’ self-study strategies, aligning with previous findings that personalized feedback through chatbots fosters student autonomy and independent learning.
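Temporal patterns of this kind can be extracted from an interaction log by grouping messages by hour of day and counting both messages and distinct senders. The sketch below shows one way to do this. The log format (pairs of student identifier and ISO 8601 timestamp), the sample records, and the function name are assumptions made for illustration and do not reproduce the study data.

```python
from collections import Counter, defaultdict
from datetime import datetime


def hourly_usage(log):
    """Count messages and distinct students for each hour of the day."""
    per_hour = Counter()
    students = defaultdict(set)
    for student_id, timestamp in log:
        hour = datetime.fromisoformat(timestamp).hour
        per_hour[hour] += 1
        students[hour].add(student_id)
    distinct = {hour: len(ids) for hour, ids in students.items()}
    return per_hour, distinct


# Hypothetical anonymized log entries: (student id, message timestamp).
LOG = [
    ("s1", "2025-02-03T03:05:00"),
    ("s2", "2025-02-03T03:40:00"),
    ("s1", "2025-02-04T03:10:00"),
    ("s3", "2025-02-04T15:00:00"),
]

per_hour, distinct = hourly_usage(LOG)
peak_hour, peak_count = per_hour.most_common(1)[0]
```

Counting distinct students alongside raw messages is what distinguishes a cohort-wide habit from the activity of a single heavy user.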

Overall, the detected usage patterns highlight one of the key advantages of educational chatbots: providing immediate assistance at any time and adapting to students’ study needs and habits, whether through quick text responses for discreet inquiries or audio options for cases in which users prefer verbal communication. This underscores the chatbot’s flexibility in integrating into students’ diverse routines, offering personalized support whenever needed, without the time constraints of traditional tutoring resources, as shown in Fig. 5.

Figure 5: Communication modality preference by hour of day.

Ethical considerations

This study was conducted in accordance with the institutional regulations for research involving academic records at the Universidad Estatal de Milagro (UNEMI). Such research is overseen through formal institutional authorizations rather than numerical IRB-style protocol codes.

The use of student data for this doctoral research project was formally authorized in 2023 through a series of official memoranda: UNEMI-FACI-2023-0423-MEM (Faculty of Science and Engineering authorization), UNEMI-VICEACADFYG-2023-0586-MEM (Academic Vice-Rectory authorization), and UNEMI-R-2023-2595-MEM (Rector’s Office authorization). These authorizations cover the broader, multi-year doctoral project on predictors of academic performance in higher education, under which the present pilot study with the 2024 cohort is conducted. The 2024 cohort analyzed in this study corresponds to a later phase of the same approved project, and no changes were made to the scope, type of data, or risk level originally authorized.

The formal institutional approvals listed above are archived and available upon request. All student data used in the predictive modelling and interaction log analyses were fully anonymized prior to analysis, and no personally identifiable information (PII) was retained in the research database. In addition, informed consent was obtained from all the student participants involved in the chatbot pilot intervention.

Discussion

The reliability of this proactive intervention is anchored in its predictive capabilities. By employing the LightGBM model, which was previously validated as the top-performing and most stable algorithm in a rigorous peer-reviewed comparison (Arévalo-Cordovilla & Peña, 2025a), we ensured that the identification of at-risk students was based on state-of-the-art accuracy.

Comparison with previous studies

The findings of this study largely align with the trends identified in the literature on educational chatbots and learning analytics. First, the high student participation rate observed (89.74% of at-risk students responded to the automated intervention) is consistent with previous reports highlighting students’ positive attitudes toward chatbots as an academic support tool (Shoufan, 2023; Hind et al., 2023; Monrad Schei, Møgelvang & Ludvigsen, 2024). Various studies have documented that well-designed chatbots can enhance motivation, engagement, and student performance by providing personalized feedback and assistance. For example, Xu et al. (2024) reported that gamified chatbots significantly increased student motivation and participation compared to traditional approaches, which aligns with the positive responses and recurring chatbot use observed in our pilot study. Similarly, Al-Abdullatif, Al-Dokhny & Drwish (2023) and Huang, Lee & Kwon (2023) found that integrating an educational chatbot had favorable effects on learning strategies and student autonomy, which is reflected in our context, where the conversational agent provided on-demand personalized tutoring.

However, our approach of combining predictive early warning models with automated chatbot interventions addresses a gap identified in previous studies. Recent studies have emphasized the potential of predictive analytics to anticipate academic risks and of chatbots to offer personalized support; however, these two areas have often operated independently of each other.

Villegas-Ch, Arias-Navarrete & Palacios-Pacheco (2020) proposed theoretical architectures for integrating AI-powered chatbots into university settings; however, empirical evidence of their effectiveness is still lacking. In this regard, our results provide concrete data that complement the literature by achieving near-instant proactive intervention following risk detection (average time of ~0.1 min, or roughly 6 s) and successfully engaging with most of the contacted students. We demonstrate how the synchronization of prediction and intervention can lead to tangible operational improvements.

This empirical finding validates the recommendations of previous studies that advocate a shift from reactive approaches to integrated proactive strategies, indicating that an effective combination of analytics and chatbot interventions is viable and beneficial in practice. However, unlike some early studies that focused primarily on usability perceptions without linking them to educational outcomes, this study provides initial evidence of operational impact (e.g., response times and student response rates), thus bridging the gap between the theory of automated intervention and its real-world classroom application.

Practical implications

The results of this pilot study have significant implications for higher education, particularly in terms of early intervention strategies, personalized learning, and dropout reduction. The system’s ability to react within seconds of identifying at-risk students suggests a paradigm shift toward proactive prevention. Thus, institutions can implement intelligent agents that continuously monitor student progress and provide immediate assistance as soon as the warning signs emerge. The literature has highlighted that early and timely intervention can disrupt patterns of poor performance before they worsen, thus maximizing its positive impact. Consistently, our chatbot responded with an immediacy that would be unfeasible through traditional human intervention, establishing a help channel at a critical moment when the student was still receptive to help. This immediacy is particularly valuable in preventing minor academic setbacks from escalating into major problems. For example, in our pilot study, chatbot activity was observed even during unconventional hours (peak usage at 3:00 AM) when students typically lack access to instructors. Having an automated tutor available 24/7 not only facilitates addressing questions outside regular hours but also helps reduce academic anxiety by providing continuous support, which is recognized as beneficial in research on automated learning assistance.

It is important to properly interpret the value of the system’s immediacy in this context. While the chatbot initiated contact in seconds, the average student response time was approximately 5.9 h. This does not diminish the system’s value but rather reframes it: the primary benefit is not forcing a synchronous, real-time conversation but ensuring 24/7 availability and eliminating institutional delays. The system’s speed ensures that a support channel is opened the moment a risk is detected, making help available for the student to access whenever they are ready, whether immediately or hours later, as evidenced by the peak usage at 3:00 AM. This asynchronous support model respects student autonomy and schedules, providing assistance at their point of need, which is a distinct advantage over human-only interventions that are limited by traditional work hours.
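The distinction between the system’s initiation latency and the students’ asynchronous reply delay can be made concrete with a small calculation. The following Python sketch is illustrative only: the record layout, the function name `summarize_log`, and the three synthetic records are assumptions introduced here and do not reproduce the pilot’s actual logging schema or data.

```python
from datetime import datetime, timedelta
from statistics import mean

def summarize_log(records):
    """Summarize outreach timing from hypothetical log records.

    Each record is a tuple:
    (student_id, risk_detected_at, first_message_at, first_reply_at or None).
    """
    # Seconds between risk detection and the chatbot's first outbound message.
    initiation_s = [
        (sent - detected).total_seconds() for _, detected, sent, _ in records
    ]
    # Hours between the first outbound message and the student's first reply,
    # computed only for students who replied.
    reply_delay_h = [
        (reply - sent).total_seconds() / 3600
        for _, _, sent, reply in records
        if reply is not None
    ]
    return {
        "mean_initiation_s": mean(initiation_s),
        "mean_reply_delay_h": mean(reply_delay_h) if reply_delay_h else None,
        "response_rate": len(reply_delay_h) / len(records),
    }

# Synthetic records for illustration only (not pilot data).
t0 = datetime(2024, 5, 6, 9, 0, 0)
records = [
    ("s1", t0, t0 + timedelta(seconds=6), t0 + timedelta(seconds=6, hours=2)),
    ("s2", t0, t0 + timedelta(seconds=8), t0 + timedelta(seconds=8, hours=10)),
    ("s3", t0, t0 + timedelta(seconds=7), None),  # student never replied
]
example_summary = summarize_log(records)
print(example_summary)
```

Keeping the two timings separate reflects the interpretation given above: the first measures how quickly the support channel is opened, whereas the second measures when students choose to use it.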

Taken together, integrating a chatbot with predictive analytics can transform student support efforts by shifting from delayed reactive interventions to personalized real-time guidance, with the potential to improve student retention and academic success on a large scale.

Another key implication is the level of learning personalization enabled by AI-powered chatbots. Unlike traditional early alerts, which typically only notify tutors or generate generic warnings, the system presented herein provides feedback and resources tailored to the specific needs of at-risk students (Davies et al., 2021). This individualized approach, such as offering study tips or review materials related to the difficulties detected by the model, addresses the growing demand to accommodate student diversity. Studies on educational technology emphasize that personalization and immediate feedback are crucial for improving student self-regulation and engagement (McWilliams, 2023; Chang et al., 2023). Our findings reinforce this idea, as the high student response rate suggests that students found the information provided by the chatbot useful and relevant, which likely increased their motivation to resume their coursework with greater confidence.

The ultimate goal of such a system is to reduce academic dropout rates. The underlying premise, supported by the literature, is that if at-risk students—identified with high accuracy by a predictive model (Arévalo-Cordovilla & Peña, 2025a)—receive early and personalized support, they are more likely to overcome challenges that typically lead to attrition. Although this pilot study was not designed to measure longitudinal outcomes, such as dropout rates, the high student engagement observed (89.7% response rate) serves as a crucial validation of the intervention’s first step: successfully establishing a support channel with the target population. This initial success is a necessary precondition for achieving any downstream impact on student persistence and aligns with evidence suggesting that proactive support enhances academic performance and retention.

In summary, adopting systems such as ours in higher education settings could lead to substantial improvements in academic success, early identification and support for vulnerable students, more personalized learning experiences, and potentially lower failure and dropout rates in the long term.

Study limitations

Although the initial results are promising, this study has several limitations. First, the sample size and context were limited; the pilot study involved only 108 students from a single program over 4 weeks. This restricted scope does not guarantee that the effects will be reproduced in larger populations or across different disciplines, as institutional or cultural factors may influence the outcomes differently. Second, the short evaluation period meant that we could only measure short-term impacts and immediate operational metrics. We did not directly assess the effects on final grades, pass rates, or semester retention; therefore, it remains unknown whether the observed improvements would persist beyond the pilot study. Previous studies have noted that there is still limited evidence on how the prolonged use of educational chatbots influences deep learning and knowledge retention (Monrad Schei, Møgelvang & Ludvigsen, 2024), a question that our study could not address because of its exploratory nature.

Furthermore, the intervention’s design exclusively focused on students identified as at-risk. The potential benefits of using the system for broader pedagogical strategies, such as providing positive reinforcement or proactive engagement for all students, were not assessed. While this targeted approach was intentional for this pilot, it represents a design limitation and a valuable avenue for future research to explore the chatbot’s role in fostering a positive learning environment for the entire cohort.

Another limitation is the varying acceptance of chatbots, depending on the type of student. While most students used it, some subgroups may have been more hesitant or exhibited different usage patterns than others. For example, those who are less familiar with AI may distrust automated recommendations, whereas students who are more digitally adept may perceive them as more useful and reliable (Shoufan, 2023). Our relatively homogeneous sample may not reflect the diversity of a full campus, which includes students of different ages and academic fields. Therefore, the results on response rates and engagement should be generalized with caution; the system may require adjustments for audiences with diverse demographic characteristics and digital skills.

Scalability and sustainability also remain open questions. While the small-scale pilot made technical support manageable, expanding the system to multiple courses or institutions would likely present several challenges, including integration with diverse learning management systems, handling simultaneous queries, and managing the computational costs associated with large-scale natural language processing. Furthermore, ensuring data privacy and regulatory compliance will become increasingly critical for broader deployments. Although the system’s design appears replicable, its effectiveness across various institutional and cultural contexts remains to be validated. Consequently, future research should explore logistical, technical, and adoption factors to determine whether the solution can maintain its efficacy beyond controlled environments.

A significant limitation of this pilot study was its focus on operational metrics without a corresponding qualitative or perception-based evaluation. This study did not capture students’ experiences, perceived value, or attitudes toward the chatbot intervention. Incorporating a theoretical framework, such as the Technology Acceptance Model (TAM), through surveys or interviews would have provided invaluable insights into whether students found the system helpful, intrusive, or motivating. This represents a crucial step for future research to complement the technical validation presented here.

Future research directions

Building on the successful technical and operational validation of this pilot, future research should adopt a more comprehensive experimental design to critically evaluate the pedagogical impact of the system. As this study established feasibility, the next logical step is to address the limitations of the single-group design. Future work should prioritize the following directions.

Long-term Impact Analysis with a Controlled Design: A subsequent study should employ a randomized controlled trial (RCT) design. This would involve comparing a group of students who received the chatbot intervention with a control group that did not, allowing for a rigorous assessment of the system’s direct impact on academic performance (e.g., final grades), retention rates, and the development of self-regulated learning skills (Nghi et al., 2024; Guan et al., 2024). Such a longitudinal design is essential for determining whether high initial engagement translates into sustained educational benefits.

Qualitative Evaluation of Interventions: To assess the pedagogical substance of the interactions, future research should include a qualitative analysis of conversation logs. This would help understand how students use the support offered, the nature of their queries, and the effectiveness of the chatbot’s responses, moving beyond quantitative metrics to evaluate the quality and pedagogical value of the “meaningful exchanges.”

Exploring Scalability and Generalizability: The system should be deployed across diverse academic disciplines and institutional contexts to test its generalizability (Villegas-Ch, Arias-Navarrete & Palacios-Pacheco, 2020). This would also involve assessing its cost-effectiveness at an institutional scale and addressing challenges related to its integration with different learning management systems and data privacy protocols.

Addressing these research directions will provide the requisite supporting evidence to validate not only the technical feasibility but also the pedagogical effectiveness of integrating predictive analytics with automated interventions in higher education.

Long-term impact analysis

Future evaluations should be extended over longer periods and to real-world settings. Such studies should track students who interact with chatbots and examine indicators such as their academic performance in subsequent courses, retention or dropout rates, and potential improvements in autonomous learning skills (Nghi et al., 2024; Guan et al., 2024). A longitudinal and controlled design would help determine whether initial improvements translate into sustained and statistically significant educational benefits.
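As an illustration of the controlled design mentioned above, the following Python sketch shows one simple way to randomly allocate at-risk students to an intervention group and a control group. It is a minimal sketch under stated assumptions: the function name `allocate_groups`, the fixed seed, and the placeholder student identifiers are hypothetical, and a real trial would follow a pre-registered allocation protocol (e.g., stratified or blocked randomization) approved by the institution.

```python
import random

def allocate_groups(student_ids, seed=42):
    """Randomly split students into intervention and control groups.

    A fixed seed (an assumption made here) keeps the allocation
    reproducible for auditing purposes.
    """
    rng = random.Random(seed)      # local generator; global state untouched
    shuffled = list(student_ids)   # copy so the caller's list is not mutated
    rng.shuffle(shuffled)
    half = len(shuffled) // 2
    return {
        "intervention": sorted(shuffled[:half]),
        "control": sorted(shuffled[half:]),
    }

# Placeholder identifiers for 39 at-risk students (not real student data).
at_risk_ids = [f"student_{i:02d}" for i in range(1, 40)]
groups = allocate_groups(at_risk_ids)
print(len(groups["intervention"]), len(groups["control"]))
```

With an odd number of students, this simple split places the extra student in the control group; outcome indicators such as final grades or retention would then be compared between the two groups.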

More advanced and adaptive personalization of the chatbot

Another promising direction is to make chatbots more adaptable to students’ individual characteristics and needs. While the current system personalizes content based on detected academic needs, future versions could incorporate additional information about learning profiles, demographic data, and behavioral and personality elements. For instance, it would be useful to investigate whether adapting the chatbot’s tone, communication style, or message frequency based on the student’s learning style or level of autonomy further enhances the effectiveness of the intervention.

Exploring new channels and environments

As the pilot study was limited to a messaging platform, future research should examine the effectiveness of these interventions through other communication channels and educational environments. Evaluating the integration of chatbots into learning management systems where students interact or into university mobile applications could provide insights into how the medium influences student adoption and comfort and could help maximize the solution’s reach. Additionally, a hybrid model could be considered, combining the chatbot’s automated interventions with human follow-up in more complex cases, leveraging AI’s efficiency alongside tutors’ empathy and judgment.

Future research should focus on two key areas: validating the long-term effects of this technological intervention under diverse conditions and refining the system to make it more adaptable and scalable to different environments. Addressing these research directions will help strengthen the evidence on how intelligent chatbots can be optimally integrated into higher education, contributing to a more personalized, equitable, and effective learning model in the data-driven era. Each step in this research agenda will bring institutions closer to fully leveraging AI’s potential to anticipate student needs and provide support, thus minimizing the barriers that lead to academic setbacks and dropout.

Conclusions

This pilot study successfully demonstrated the technical feasibility and operational performance of an integrated system for automated student support, directly addressing the research questions. The system proved capable of delivering near real-time interventions, identifying all at-risk students, and initiating contact in a median of 5.4 s (RQ1). Furthermore, the high student response rate of 89.7% indicates strong initial receptiveness and engagement with proactive outreach (RQ2). The chatbot maintained a stable average response time of 12.9 s across 742 interactions, confirming its operational reliability under real-world conditions (RQ3).

These results provide strong evidence for the viability of fusing predictive analytics with conversational AI to create scalable intervention mechanisms. While the findings support the system’s technical robustness and high potential for student engagement, they do not yet measure pedagogical impact. The study’s limited scope—a single course over a 4-week period—means that long-term effects on academic outcomes, such as grades and retention, remain to be investigated. Future research, building on this successful feasibility study, should employ controlled experimental designs to assess the pedagogical effectiveness and direct impact of these automated interventions on student success.