DiscourseDash: designing and evaluating a tool for analysing social media discourses to support decision-making

- Published

- Accepted

- Received

- Academic Editor

- Ankit Vishnoi

- Subject Areas

- Human-Computer Interaction, Network Science and Online Social Networks, Social Computing, Visual Analytics

- Keywords

- Social media analysis, Social media discourses, Social media monitoring, Decision support tools, User-centered evaluation, Industry experts

- Copyright

- © 2026 Liew et al.

- Licence

- This is an open access article distributed under the terms of the Creative Commons Attribution License, which permits unrestricted use, distribution, reproduction and adaptation in any medium and for any purpose provided that it is properly attributed. For attribution, the original author(s), title, publication source (PeerJ Computer Science) and either DOI or URL of the article must be cited.

- Cite this article

- 2026. DiscourseDash: designing and evaluating a tool for analysing social media discourses to support decision-making. PeerJ Computer Science 12:e3632 https://doi.org/10.7717/peerj-cs.3632

Abstract

Social media discourses have become a significant source of information for decision-makers across various fields, including public health, policy, and brand management. Although analytical tools exist to support these practices, there is a limited understanding of how industry experts engage with and incorporate a discourse-level analysis perspective into their decision-making workflows. To address this gap, we developed DiscourseDash, a dashboard tool that integrates stance detection, sentiment analysis, harmfulness detection, and popularity analysis of evolving social media discourses. DiscourseDash provides a structured view of discourse-level dynamics to support users in interpreting not just what is happening, but why. DiscourseDash differentiates itself from existing tools that primarily visualise high-level statistics by emphasising transparency and collaborative decision-making. We conducted a two-phase study: (1) a mixed-method evaluation with general users to assess effectiveness in navigating social media discourses within a scenario-based decision-making compared to an unstructured method, and (2) in-depth interviews with industry experts to understand their workflows, gather perceptions of DiscourseDash, and explore alignment with real-world practices. Our findings underscore the value of qualitative understanding in social media analysis by demonstrating how discourse-level insights support interpretation and encourage strategic decision-making. In recognising the central role of human expertise, our work highlights the importance of collaborative design by facilitating human interpretation with the prototype. This article contributes practical insights through a conceptual and design-led approach to social media analysis for decision-making.

Introduction

The Global Digital Report 2024 reported that social media users in the United Kingdom comprise 82.8% of the total population (Kemp, 2024). This indicates that social media use is a necessity for most people (Subrahmanyam et al., 2008; Lupinacci, 2021; Kizgin et al., 2020). A prominent example showcasing the social media impact is through crises such as the pandemic, people increasingly turn to platforms to express their opinions, stay informed, and form connections (Bouvier & Machin, 2020; Appel et al., 2020; Saud, Mashud & Ida, 2020). Industries are evolving their decisions and strategies in response to these dynamic spaces to remain relevant (Persily, Tucker & Tucker, 2020). As Jim Coleman, CEO of We Are Social, a global socially led creative agency, highlighted the need for industries to remain responsive to evolving online behaviours by being “on top of these continuous shifts in the way that people are spending time online and adapt their approach” (Underwood, 2024). However, this shift raises a new set of challenges, e.g., the spread of misinformation (“infodemic”) (Cinelli et al., 2020), reputational harm (Jahng, 2021), and manipulation of public narratives or ideologies (Reisach, 2021). These challenges consequently impact vulnerable individuals or communities (Drouin et al., 2020), making it increasingly difficult for policymakers, fact-checkers, and government agencies to manage and navigate evolving narratives (Mahoney & Tang, 2024; Azzaakiyyah, 2023).

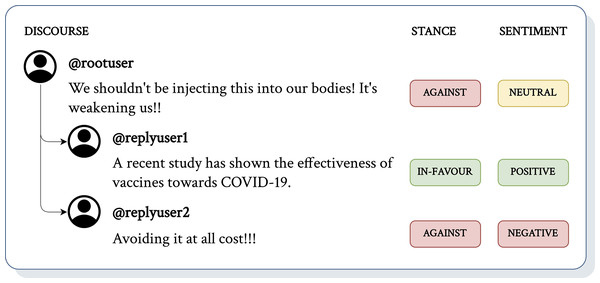

The study of misinformation is prominent in social media analysis through a substantial methodological basis for examining the application of analytical approaches within social media (Zhao et al., 2023; Aïmeur, Amri & Brassard, 2023). Early interventions have explored strategies such as content labelling (Spradling, Straub & Strong, 2021), removing misleading narratives (Sharma et al., 2019), and implementing suggestive measures (Vicario et al., 2019). With the advancement in machine learning, research efforts have shifted toward analysing stance (Hardalov et al., 2021) and sentiment (Bhutani et al., 2019; Alonso et al., 2021) to understand the scale of narratives. Findings from recent studies show that root-level comments often serve as ‘clickbait’ to attract attention (Lama et al., 2020; Bu et al., 2023). This frequently leads to computational ambiguity, as illustrated in Fig. 1, where machine learning models struggle to resolve what ‘this’ refers to without additional context, resulting in incomplete or inaccurate insights regarding what @rootuser is ‘against’. By broadening the analysis beyond the root-level context, we hypothesise that incorporating the full discourse provides a more accurate interpretation. In the same example, analysis of the full discourse reveals that ‘this’ refers to ‘vaccines’, as supported by follow-up textual interactions where @replyuser1 argues ‘for’ with reasons supporting vaccines and @replyuser2 argues ‘against’, illustrating how @rootuser influenced the spread. This highlights a problem where overlooking the context situated within evolving replies and comments limits analysis approaches.

Figure 1: An example of a (short) discourse with additional context from corresponding textual interactions (e.g., replies, comments).

Many existing analytical tools for social media focus on translating insights into summarised visualisations. While effective at highlighting large-scale trends, they are limited in capturing the complexity of social media discourse or supporting users in interpreting the meaning behind these measures. Without qualitative support, analyses struggle to investigate complex phenomena such as misinformation, polarisation, or controversy, as evolving discourse interactions can either contribute to debunking misinformation or exacerbate its dissemination (Alsaif & Aldossari, 2023). Discourse-level context introduces the perspective of narrative dynamics (De Fina & Georgakopoulou, 2011), identifies influential discourses driving dissemination (Shu et al., 2017), enhances contextual understanding in machine learning (Yu et al., 2020; Zubiaga et al., 2018; Poddar et al., 2018; Li et al., 2023a, 2023b), and tracks emerging trends or agenda shifts (Egelhofer & Lecheler, 2019). Yet, it remains unclear whether existing tools leverage surrounding discourses as contextual information in machine-driven or human analyses. Besides, there is a gap in creating tools that align with real-world workflows, as many are designed around technical affordances rather than the practical needs. This research addresses gaps in existing analytical tools by adopting a discourse-level design perspective that reduces computational ambiguity and the labour-intensive demands of qualitative analysis. We developed DiscourseDash, a visual analytics dashboard to support understanding social media analysis for decision-making (see Page 10). By connecting analysis with discourse-level insights, this research supports human users in interpreting these machine outputs. The long-term goal is to manage impactful information before it spreads widely by providing a better understanding of complex social media issues.

We conducted a two-phase evaluation of DiscourseDash. In the first phase, general users (non-experts) completed scenario-based decision-making tasks using DiscourseDash and a baseline platform, Reddit. This evaluation aims to investigate the efficiency and relevance of our design when compared to unstructured discourse. Building on these results, the second phase involved in-depth interviews with industry experts to explore how they use social media discourses, what informs their decisions, workflow limitations, and their perceptions of DiscourseDash. This article investigated how DiscourseDash can support human interpretation of social media discourses in real-world decision-making. To achieve this, we formulate the following Research Questions (RQs):

-

RQ1

How do both general users and industry experts perceive and interact with a dashboard tool that offers a detailed analysis with discourse-level context to make informed decisions?

-

RQ2

To what extent do the proposed features in DiscourseDash, such as stance detection, sentiment analysis, harmfulness labels, popularity insights, influence or support decision-making workflows?

-

RQ3

How do industry experts currently analyse social media discourses, and how well does DiscourseDash align with these real-world practices?

-

RQ4

What key design implications can we gather to guide future iterations of DiscourseDash to enhance support for diverse real-world decision-making scenarios?

In this article, our main contributions are as follows:

-

1.

Design of a dashboard tool: Informed by social media analysis literature, we developed and evaluated a conceptual dashboard that integrates stance detection, sentiment analysis, harmfulness detection, and popularity analysis as key analytical features (see 5). DiscourseDash supports informed decision-making by linking analytical outputs directly to the underlying discourses. This design allows users to trace the reasoning behind machine-summarised results. This connection encourages users to explore discourses with insight to support their organisational goals. Our approach emphasises that social media analysis should not rely solely on machine-summarised outputs; instead, human users should have access to interpretive analysis to reason about these measures in the context of the emerging narratives they represent.

-

2.

Proof of concept: We conducted a two-phase user study to evaluate DiscourseDash. In the first phase, general users interacted with the prototype to assess its usefulness, identify design strengths and weaknesses, and provide feedback for user-centred refinement. Our findings showed that DiscourseDash effectively supports users in exploring social media discourses and making informed judgments. In the second phase, we interviewed industry experts to examine how the DiscourseDash could fit into real-world decision-making workflows. This extended the initial evaluation and offered insights into practical adoption and long-term relevance.

-

3.

Transferable results: Given the limited research on dashboard design to analyse online discourses, little is known about how industry experts interpret and incorporate discourse insights into their existing workflows. This lack of understanding may result in designs that overlook critical needs or lead to solutions that are not applicable in real-world contexts. Our expert study addresses this by uncovering how industry experts make sense of social media discourses and what they require from computational tools to support this process. By combining insights from both general users and domain experts, our research provides an understanding of user interaction with an analytical tool and its applicability in professional contexts. Our findings demonstrate the effectiveness of our design, highlight design implications, and identify opportunities for future development. Importantly, this research emphasises the critical role of human-centred collaborative design that augments human interpretation rather than attempting to automate it in producing transferable insights for similar tools across domains.

Related work

This section reviews previous work that informs the design and evaluation methods of DiscourseDash by examining computational linguistic approaches to social media analysis, followed by an exploration of interactive systems developed for social media analysis. Finally, we review relevant Human-Computer Interaction (HCI) methodologies for designing tools that are focused on user needs and practices.

Computational linguistic measures for social media analysis

Natural Language Processing (NLP) techniques are widely used to identify linguistic patterns and extract important signals from textual data (Hirschberg & Manning, 2015). Drawing on their established use in previous work, our dashboard incorporates the following NLP measures: stance detection, sentiment analysis, harmfulness detection, and popularity analysis (summarised in Table 1).

| Computational measures | Definition |

|---|---|

| Stance detection | Categorising the opinion expressed in a piece of text towards a target premise, e.g., in favour, neutral, or against. |

| Sentiment analysis | Categorising the emotional tone of a piece of text, e.g., positive, negative, or neutral. |

| Harmfulness detection | Measuring the level of harm in a piece of text by measuring toxicity, hate, or other harm-related aspects. |

| Popularity analysis | Measuring the temporal view of the trend or virality of content. |

Stance detection: This is a domain-specific task to categorise viewpoints from in-favour, neutral to against (Mohammad et al., 2016; Hardalov et al., 2021). This computational approach is a target-specific task that reflects how a social media user positions themselves with respect to an entity, claim, or issue (Lillie & Middelboe, 2019). Recent advances in Large Language Models (LLMs) represent the improved approach of stance detection (Zhang et al., 2022). Multi-agent LLM systems have been proposed to address multi-aspect stance reasoning targeting scalability challenges in traditional approaches (Yan, Joey & Ivor, 2024; Lan et al., 2024). The use of synthetic data generated by LLMs was proposed to overcome data scarcity and imbalance. Studies proposed that augmenting stance detection datasets with LLM-generated examples improves robustness and reduces bias in domains like political discourse (Wagner et al., 2024b, 2024a). Applications of stance detection span misinformation detection (De Magistris et al., 2022), rumour verification (Pamungkas, Basile & Patti, 2019; Alsaif & Aldossari, 2023), credibility analysis (Karande et al., 2021), and uptake of misleading narratives (Weinzierl, Hopfer & Harabagiu, 2021). These applications highlight the broader significance of stance detection as an indicator for understanding public perceptions for informing decision-making around societal issues.

Sentiment analysis: Sentiment analysis refers to the computational study of affective states expressed in text by categorising emotional tone into positive, negative, or neutral classes (Liu, 2020). This differs from stance detection, which captures an author’s position towards a target; sentiment analysis reflects the emotional valence of the text. This distinction is important in analysing social media discourses because comments may convey strong emotions without explicitly revealing a position on a given issue. Recent approaches were proposed using transformer-based architectures and LLMs (Krugmann & Hartmann, 2024). The Generative Pre-trained Transformer (GPT) model was adopted to understand the surrounding context to improve the detection of sarcasm in sentiment analysis (Kheiri & Karimi, 2023). Studies have also highlighted the use of LLMs for cross-lingual sentiment analysis across different online communities in low-resource settings, such as minority languages (Chen, Shang & Wang, 2025; Miah et al., 2024). Sentiment analysis is used to track social media issues such as the spread of misinformation, which is frequently correlated to emotionally charged information (Alonso et al., 2021; Preston et al., 2021). Negative emotions like fear and anger have also been shown to increase the circulation of social media information regardless of its factual accuracy (Wang et al., 2019; Weeks, 2015). Sentiment can also serve as a proxy for reputation management for organisations to detect negative public reactions and implement corrective strategies in brand communication (Guo, Fan & Zhang, 2020). Monitoring sentiment in real time can support timely interventions in health, disaster, and humanitarian contexts within crisis informatics research (Reuter, Hughes & Kaufhold, 2018).

Harmfulness detection: Harmfulness, such as toxicity (Sheth, Shalin & Kursuncu, 2022) and hate speech (Cinelli et al., 2021), has increased in conjunction with research on false social media information (Alam et al., 2021). This occurs because malicious actors frequently use inflammatory language to amplify the reach and impact of misleading information (Sharevski, Jachim & Florek, 2020). Hence, research has linked harmfulness to the perpetuation of misinformation, showing how toxic language (Salminen et al., 2020; Pascual-Ferrá et al., 2021) and hate speech (Sharma et al., 2020; Evolvi, 2018) can direct attention to engagement. A recent study with fact-checkers found that harm assessment helped prioritise interventions (Sehat et al., 2024). The effectiveness of LLMs similarly extends to detecting hate speech (Albladi et al., 2025). Some benchmark work was conducted using GPT-3.5 and GPT-4 on Twitter datasets covering hate speech, offensiveness, and emotions (Bauer, Preisig & Volk, 2024). A structured evaluation framework and a toxicity metric were proposed by outlining the challenges of bias, reliability, and consistency when deploying LLMs for harmfulness detection at scale (Koh et al., 2024). A promising and emerging direction of research has used LLMs to uncover coded or evolving forms of harmful speech, such as novel antisemitic terms circulating in extremist communities (Kikkisetti et al., 2024). Recent surveys underline that although these models generalise across multiple forms of abuse (hate, harassment, toxicity), persistent issues remain around data imbalance, evolving vocabularies, and false positives (Diaz-Garcia & Carvalho, 2025).

Popularity analysis: Popularity, commonly discussed interchangeably with the term virality, denotes the degree of engagement a piece of content achieves on social media platforms (Güner, Cebeci & Aydemir, 2025; Ngai, Singh & Yao, 2022; Solovev & Pröllochs, 2022). This measures the engagement on social media to track the spread of information and develop intervention strategies (Shao et al., 2016; Bessi et al., 2015), emphasising the need for early intervention to manage social media content (Kim, 2018; Shu, Bernard & Liu, 2019). For example, monitoring the popularity of content helps gauge public opinion on topics such as vaccines to assess the influence on public health (Xu & Guo, 2018). Approaches like Latent Dirichlet Allocation (LDA) (Blei, Ng & Jordan, 2003) and newer topic modelling methods (Dieng, Ruiz & Blei, 2020; Srivastava & Sutton, 2017) are commonly used to group underlying themes in large-scale textual data. This will allow the identification of dominant concerns and recurring discussion threads within social media discourses (Chakkarwar & Tamane, 2020). More recently, a multi-layer temporal Graph Neural Network (GNN) framework for popularity prediction in social media (Jin, Liu & Murata, 2024). Their work primarily focused on understanding how information spreads across social media networks over time. A Popularity-Aligned Language Model (PopALM) was proposed to improve predictions of social media responses by aligning language representations with popularity trends (Yu, Li & Xu, 2024). Meanwhile, Twitter’s (currently known as X) “Viral Tweet” feature was examined, and it was pointed out that the ratio of retweets to followers offers a more reliable indicator of virality than absolute retweet counts (Elmas, Stephane & Houssiaux, 2023).

Discourse-level analysis: The following section reviews studies that specifically examine learning from context at the discourse level. This shifts the focus from analysing isolated posts (e.g., root-level comments) to learning contextual information from the discursive structure of discourses. This research work defines social media discourse as textual interactions on platforms, such as discussions, threads, and conversations. Our approach should not be confused with the social science ‘Discourse Analysis’ method, which generally refers to examining the social and linguistic aspects of language use.

This perspective is motivated by prior research examining discourse across various conversational contexts and linguistic patterns to improve model learning. Besides, many studies have highlighted the effectiveness of analysing interactions within discourse to improve the identification and management of online narratives and their impact on public opinion (Haupt, Li & Mackey, 2021; Silva et al., 2020; Kalantari, Liao & Motti, 2021). For example, recent stance detection for misinformation has integrated local and global context-based attention mechanisms. The Coupled Hierarchical Transformer model has been used to gain a deeper contextual understanding in discourses (Yu et al., 2020). Similarly, Long Short-Term Memory (LSTM) networks combined with attention mechanisms have improved conversation representation by modelling conversation branches (Zubiaga et al., 2018; Poddar et al., 2018). Further developments refined these approaches using Branch-Bidirectional Encoder Representations from Transformers (BERT) models, which more effectively capture discourse-level context (Li et al., 2023a). Subsequent accuracy improvements have also incorporated target-specific contexts (Li et al., 2023b).

More recent approaches continue to emphasise discourse-level contexts for model learning. A Global–Local Attention Network (GLAN) was proposed on a multi-turn conversational stance detection dataset (Niu et al., 2024). Their work demonstrated how both short-range and long-range dependencies across conversational threads influence stance prediction. Similarly, the Transformer-based Architecture for Stance Detection with Embeddings (TASTE) was proposed to integrate structural embedding of conversations with transformer-based text encoders to jointly capture content and discourse structure (Barel, Tsur & Vilenchik, 2024). Stance Reasoner was proposed to combine pre-trained models with explicit reasoning steps and background knowledge to improve zero-shot stance detection (Taranukhin, Shwartz & Milios, 2024). MultiClimate was proposed to integrate textual transcripts (similar to a format of social media discourse) from climate change videos with visual content to model multimodal context for stance detection (Wang et al., 2024). All of the mentioned literature demonstrated the relevance of discourse-level insights but failed to adapt them into an interaction tool.

Interactive systems for social media analysis

Visual analytics are commonly used to translate computational linguistic measures into actionable insights for human users (Endert et al., 2017; Salamkar, 2024; La Rosa et al., 2023). Furthermore, empirical studies show that presenting data in interpretable formats within visual analytics systems can reduce cognitive load and help users focus on key aspects of decision-making (Abdul et al., 2020).

Many existing interactive systems have been developed to support navigation on social media analysis through interactive visualisations. exRumourLens provides a social media analysis of rumours by summarising their spread and assessing their credibility (Phan et al., 2022). Although their work provided targeted support for rumour detection, its scope remains limited, as it does not support broader analysis of discourses or user-driven exploration beyond credibility evaluation. When X was previously known as Twitter, TweetCred offered real-time credibility analysis for Twitter content, supporting rapid user judgments (Gupta et al., 2014). However, their reliance on automated credibility scores may oversimplify social media content and overlook the evolving contexts that affect the trustworthiness of these scores. Besides, CrowdTangle was widely used by journalists and researchers to monitor public social media content and track trends (Garmur et al., 2019). However, its discontinuation by Meta underscores the uncertainty of relying on proprietary tools for research and public accountability. Before that, the system offered little transparency regarding how content was selected and ranked. This could constrain reproducibility and raise concerns about possible algorithmic bias.

MisVis is a web-based platform that analyses the prevalence of misinformation on websites by indicating reliability and visualising connections to other sources (Lee et al., 2022). Although valuable in highlighting misinformation trends, they primarily focus on static website content rather than the dynamics of social media. The focus on misinformation is narrow and may not generalise to other issues in the social media landscape. PeakMetrics offers a social media management dashboard for commercial purposes. This dashboard was designed to identify and counteract harmful narratives using indicators such as emerging narrative threats, threat scores, and sentiment analysis. However, the system lacks open-source documentation and transparency regarding its internal processes, making it difficult to assess its analytical robustness or biases. Finally, these existing interactive systems have demonstrated how computational insights can be useful in decision support tools. At the same time, they reveal important limitations around transparency and adaptability.

Multi-phase evaluations in HCI

In HCI, user studies are fundamental to developing and refining proof-of-concept systems by incorporating human factors throughout the design process (Hartson, 2012). Common approaches involve developing prototypes and conducting phased evaluations (Vermeeren et al., 2010). A fundamental distinction in HCI research is between expert users with specialised domain knowledge and general end-users with influence of broader general concerns (Sharp, Rogers & Preece, 2007). A common approach is mixed-method evaluation, which combines qualitative and quantitative techniques to capture complex user interactions, effectively validate conceptual designs, and gather interactive insights from general users (Creswell, 1999; Östlund et al., 2011; Edmonds & Kennedy, 2016). This is because while qualitative methods capture user behaviours and experiences, revealing why certain interactions occur or fail (Seaman, 1999). Meanwhile, quantitative methods provide measurable and statistically valid data that help generalise the findings to larger user groups (Nardi, 2018). This mixed approach ensures reliability and credibility for validating findings from multiple perspectives (Golafshani, 2003; Rolfe, 2006).

Incorporating real-world considerations is crucial when designing analytical tools for practical deployment. Prior studies have shown the benefits of involving expert participants (Atreja et al., 2023), healthcare experts (Patel, Kaufman & Kannampallil, 2021), and medical specialists (Lindgren, Winnberg & Winnberg, 2011) in shaping HCI efforts within specific domains. These investigations demonstrate the value of expert insight in developing systems that support domain-specific reasoning from expert users who have knowledge-based experiences. Such knowledge is fundamental for designing tools that facilitate complex analytical workflows and strategic decision-making in real-world environments. To bridge these two perspectives, it is common to synthesise user studies in HCI research in multi-phase evaluations to cover all-rounded aspects (Zimmerman, Stolterman & Forlizzi, 2010). Despite growing efforts to validate interactive systems with both general and expert users, relatively few studies have examined how tools designed for analysing social media discourses can balance human interpretation with alignment to professional decision-making workflows.

From literature gap to design goals

While prior research has demonstrated the strength of computer linguistics and analytical tools, there is still room for improvement to support the interpretive process for decision-making. Most existing studies do not adequately address the evolving and complex nature of online discourses. Plus, interactive systems mostly prioritise visualising quantitative outputs over qualitative insights. This presents limitations in tasks that require users to uncover deeper contextual interpretation, such as identifying the causes of specific issues on social media. Unlike many existing systems, our approach considers both the analytical depth and the interpretive needs of users to support their navigation in the social media space.

Transparency in design helps build user trust, but many Artificial Intelligence (AI)-driven tools lack proper documentation of their design processes (Deekshith, 2020). Previous work highlighted the importance of making model outputs interpretable and actionable to improve adoption (Rong et al., 2023). In response to these concerns, we employ a design approach that encourages human interpretation by incorporating a discourse-level perspective in social media analysis. A two-phase evaluation study that integrates insights from general users with validation from domain experts contributes an open-source design for a collaborative human–machine system that augments and facilitates human interpretation instead of seeking to automate it. Table 2 summarises how DiscourseDash addresses these limitations of prior systems.

| Limitations | DiscourseDash design |

|---|---|

| Lack of context around how automated analysis derives its outputs | Visualise the information (context from discourses) that informed the model’s output by displaying the same information for human users to inspect when using automated analyses. |

| Oversimplifies social media dynamics due to limited analytical depth to capture complexity | Allow users to explore discourses in depth and develop their own judgement on the reliability of the automated analyses. |

| Prioritise computational affordability and encourage dependence on automated analyses | Prioritise interpretative flexibility by encouraging humans to question, refine and reinterpret automated analyses instead of treating them as definitive outputs. |

Design process

This section outlines our design process for DiscourseDash. We begin with a technical definition of ‘discourse’. Next, we present the initial design concept. Finally, we describe how these insights were translated into a functional prototype for the next multi-phase evaluation studies.

Understanding discourse in social media

In our research, we technically define “discourse” for our tool development as consisting of two main components: root-level comments ( ), such as those initiated as a post or thread on social media, and child-level comments ( , where ), which are hierarchically structured replies to the root-level comments, also referred to as “replies.” A complete discourse (D), can be represented as: , where . This implies that a complete discourse must include both root-level comments ( ) and one or more child-level comments ( ). If a root-level comment ( ) does not have corresponding child-level comments ( ), we do not consider it as a discourse. These child-level comments ( ) represent a form of engagement on social media, contributing to the evolution of narratives and influence (Neubaum & Krämer, 2017; Epstein et al., 2021). For example, a root-level comment ( ) that does not generate engagement and therefore lacks a discourse ( where ), reflects a low impact on public narratives.

This focus enables us to capture discussions where ideas, opinions, and narratives evolve through replies and interactions over time. Prior research shows that these discursive structures of replies reflect relevant social engagement, shifts in stance, and influence dynamics, which are crucial factors for understanding phenomena such as misinformation and formulations of public opinions (Benamara, Inkpen & Taboada, 2018). For instance, a Reddit post without replies generally has limited impact, while one with multiple layers of interaction indicates a richer and more influential discourse (Choi et al., 2015). By defining discourse in this way, our tool aims to capture and visualise the complexity of online discourses to support informed decision-making.

Conceptualising the design approach

The design of our dashboard was guided by the objective of providing structured and actionable insights into online discourses. Drawing on our literature review (see Page 3), we identified four analytical features-stance detection, sentiment analysis, harmfulness detection, and popularity analysis-that facilitate navigating complex social media environments. These dimensions were selected to support decision-making processes in areas such as public health, communication, and policy response, where understanding the nature and dynamics of online discourses is important. By presenting discourses in detail, DiscourseDash enables users to investigate how the broader narrative landscape contributes to the analytical depths. The overall design is organised into two tabs: an overview of visuals for initial identification and prioritisation, and a detailed view for in-depth investigation and contextual understanding.

Providing a visual overview

The overview provides users with a high-level summary of trending discourse topics and their implications. It includes the following components:

Trending Topics: We use clustering techniques to identify the most actively discussed topics within a selected time window. This surface-level aggregation enables users to quickly understand which issues are dominating public discourse and assess their alignment or conflict with organisational goals.

Harmfulness: Each topic from the Trending Topics is assessed for its potential level of harm, based on the proportion of posts containing toxic, hateful, or otherwise harmful language. This classification helps users allocate priorities by identifying those that may require immediate attention or intervention.

Stance: We display the distribution of stances (favour, neutral, against) within each topic. We hypothesise that understanding the stance distribution on topics previously categorised as harmful can help gauge the urgency of a topic. For example, if a highly harmful topic receives predominantly favourable stances, it can inform the prioritisation of further investigation.

Sentiment: Sentiment (positive, neutral, negative) provides an additional emotional context to each topic. This helps users understand public emotions and make informed decisions about countermeasures.

Popularity: Engagement metrics over time reveal the relevance and impact of topics. Visual graphs showing the popularity of each topic help users understand the dynamics of discourses and identify emerging trends or sudden spikes that may indicate concerning impacts.

Further detailing the analyses

Building on the overview, users can select specific topics to investigate in detail, exploring analyses accompanied by discourse through the following features:

Summary An in-depth view of the selected topic, including visual graphs of stances and sentiments. This provides clarity and supports users in unfolding the progression and influence of different viewpoints within the discourse.

Discourses We present all the discourses on a selected social media platform that reflect the topic under investigation. The goal is to support effective countermeasures by providing a detailed view of the stances and sentiments labelled within each text in the discourse. It also facilitates the extraction of specific contexts and dynamics revealed through the analysis. Presenting these discourses in detail, accompanied by visuals and interactive elements, is a key design goal aimed at enhancing transparency and explainability.

Prototyping DiscourseDash

Our interactive dashboard was developed using the Dash Open Source Framework, created by Plotly, chosen for its robust integration with Python libraries and its flexible integration with machine learning frameworks in future iterations. This framework allowed us to customise functionalities to meet our research requirements. For example, we implemented a custom collapsible table using React boilerplate JavaScript UI libraries to represent the hierarchical structure of discourses. The full code is available at GitHub. In Algorithm 1, we briefly illustrate how the system constructs hierarchical conversation trees from root posts and replies. Each post and reply is annotated with stance, sentiment, and other metadata for the hierarchical visualisation in the prototype.

| Input: root_posts, comments |

| Output: rows of hierarchical discourse |

| Function create_rows (root_posts, comments): |

| rows = []; |

| foreach root_post in root_posts do |

| row = {id, text, date, stance, sentiment, num_replies}; |

| row.replies = generate_rows(root_post.id); |

| rows.append(row); |

| end |

| return rows; |

| Function generate_rows(parent_id): |

| subrows = []; |

| foreach reply where reply.Parent == parent_id do |

| subrow = {id, text, date, stance, sentiment, num_replies}; |

| if reply has replies then |

| subrow.replies = generate_rows(reply.id); |

| end |

| subrows.append(subrow); |

| end |

| return subrows; |

To ensure that the prototype closely reflects a realistic scenario, we used a dataset of open-access Reddit discourse on COVID-19 vaccination for the evaluation studies (Brambilla & Kharmale, 2022). As of its retrieval in October 2023, this dataset consisted of 1,726 Reddit posts and 11,189 corresponding replies, posted between April 2020 and May 2021. Each entry was labelled with a stance (favour, none, against) towards the topics of “General about vaccine”, “Second Dose”, “Vaccine side effects”, “General after vaccination”, “Thankful.” Additionally, they provided labels of sentiments (positive, neutral, negative). We included all entries containing one or more replies to ensure every data point represents a discourse structure. The labels for stance and sentiment were directly taken from the dataset.

Since they did not include labels for harmfulness, we manually and randomly assigned harmfulness values in the prototype. This means that harmfulness labels were distributed without any specific pattern or criteria, solely to demonstrate the feature within DiscourseDash. We determined that this approach was suitable given that the focus of the user study was to evaluate the conceptual design rather than the accuracy of the labels. Each row in the dataset includes timestamps and the total number of child-level comments for each row belonging to a root-level comment. These columns are used to illustrate the popularity of topics in the prototype. Overall, the dataset was sufficient to support the prototyping for DiscourseDash’s evaluation.

System overview

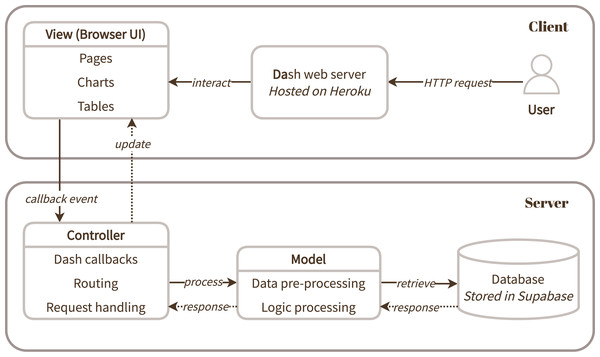

Figure 2 illustrates the client-server architecture of the developed web application prototype following a Model-View-Controller (MVC) architecture. For accessibility and ease of deployment, DiscourseDash was hosted on Heroku, a cloud-based platform-as-a-service (PaaS). This facilitated a web-based access to the prototype for convenience throughout the user evaluation.

Figure 2: A system overview of DiscourseDash presented based on a model-view-controller (MVC) architecture.

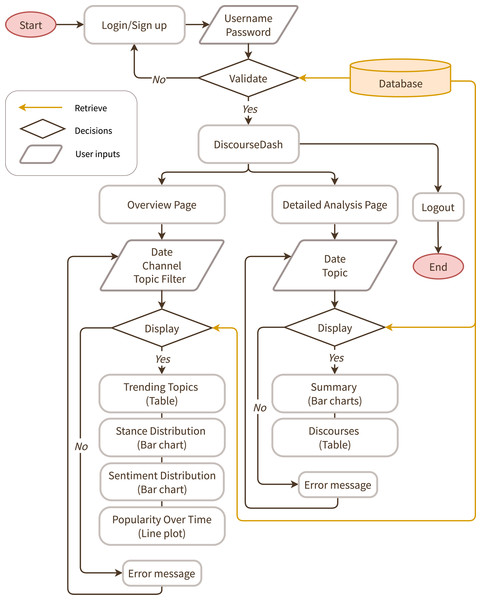

The dashboard

Figure 3 illustrates the intended interactive flow for DiscourseDash by showing the sequence of interactions between a user and the prototype across its key components. The core dashboard is divided into two main pages: (1) the Overview Page (see Figs. 4, 5, 6) and (2) the Detailed Analysis Page (see Figs. 7, 8). The final prototype used for this research is publicly accessible here: DiscourseDash.

Figure 3: A user flow diagram for DiscourseDash.

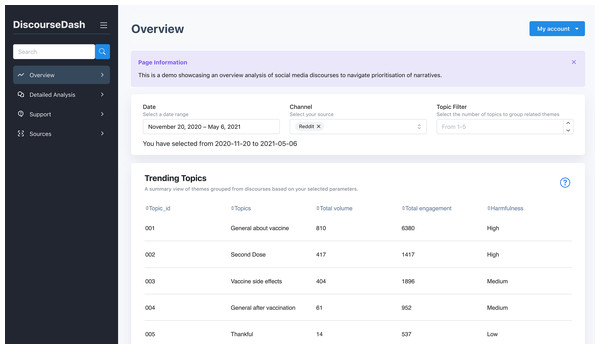

Figure 4: Overview page showing user inputs and the Trending Topics table.

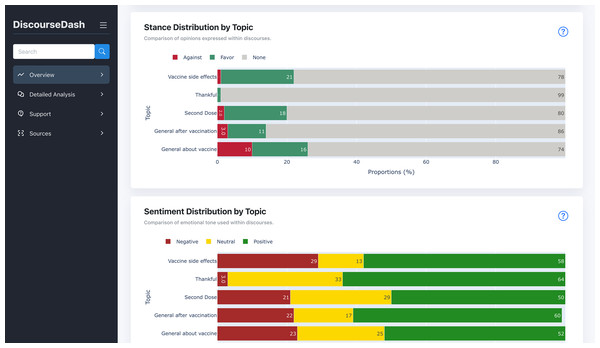

Figure 5: Overview page displaying stance distribution by topic and sentiment distribution by topic charts.

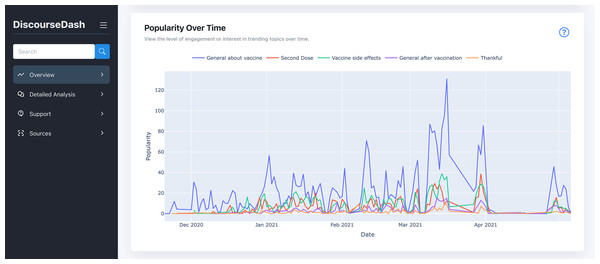

These charts are positioned below the “Trending Topics” table, scrolling down the page.Figure 6: Overview page with popularity over time.

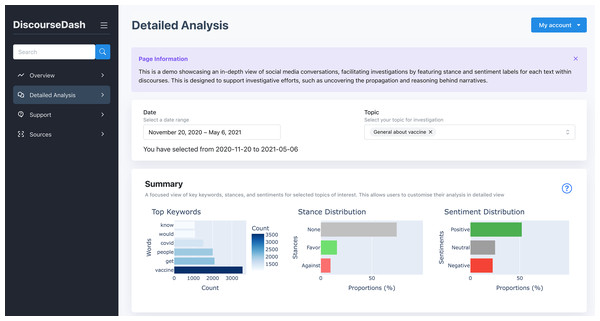

This graph is located further down the page from the previous figure.Figure 7: Detailed Analysis page with the user inputs and summary.

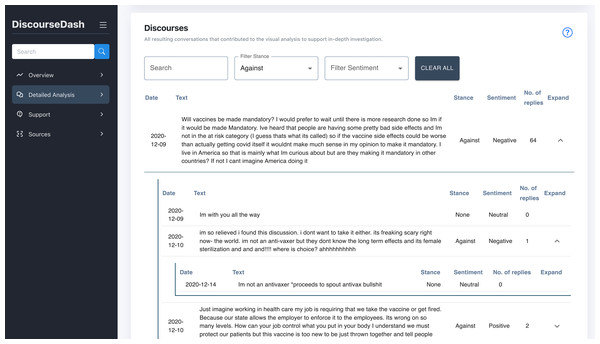

Figure 8: Detailed Analysis page with the discourses, with an example of a full discourse with activated collapsible function.

This table is located further down the page from the previous figure.Overview page

The Overview Page provides users with a high-level summary of trending discourse topics and their key analytical insights. The page consists of the following interactive components:

A. User Inputs: Users specify a date range and optionally the number of topics to display. The dashboard then updates components B through E accordingly.

B. Trending Topics: Displayed in a table summarising the results of the analysed discourses. Each topic includes a unique identifier (Topic_ID), the topic label (Topics), counts of root-level comments (Total volume), the number of associated child-level comments (Total engagement), and a harmfulness label (Harmfulness), categorised as low, medium, or high.

C. Stance Distribution by Topic: Displayed as a bar chart, this component shows the distribution of stances (favour, none, against) across all discourses within each topic from B. The y-axis lists topics by Topic_ID, while the x-axis shows the aggregated count of stance labels from both root-level and child-level comments.

D. Sentiment Distribution by Topic: This bar chart presents the distribution of sentiments (positive, neutral, negative) associated with each topic from B. The y-axis lists topics by Topic_ID, while the x-axis shows the aggregated counts of sentiment labels from both root-level and child-level comments.

E. Popularity Over Time: The line graph displays user engagement trends for each topic from B, with dates on the x-axis and popularity (number of engagements) on the y-axis.

Detailed analysis page

This page presents the detailed discourses corresponding to the topics of the previous Overview Page. It allows users to dive deeper into specific topics they wish to investigate specific issues based on actual discourses.

A. User Inputs: Users specify a date range and select a targeted topic(s) for detailed investigation. The dashboard then updates components B and C accordingly.

B. Summary: This component provides a summary of a discourse selected by the user to investigate. By topic, a bar chart on the left illustrates the distribution of stances (favour, none, against) across the discourse. Similarly, another bar chart on the right presents the sentiment distribution (positive, neutral, negative) across the discourse.

C. Discourses: Displayed in a collapsible table format, allowing users to expand each discourse to view its associated child-level comments. Each row, representing root/child level comments, is accompanied by labels of the corresponding stance (favour, none, against) and sentiment (positive, neutral, negative). This is designed to facilitate detailed analysis within the broader context of the selected topics. Additional functionalities include filters that allow users to refine results based on specific stances, sentiments, and keyword searches.

Methods

This study comprised two sequential phases, each refined through pilot testing and guided by relevant literature. Ethical approval for both studies was granted by the Research Ethics Committee of the School of Computer Science at the University of Nottingham (Ethics Application Ref: CS-2023-R61). All participants provided informed consent before participating in the study. Written consent was obtained using an electronic consent form distributed through a secure online platform. Participants were required to read the information sheet and sign the consent form electronically to confirm their voluntary participation. All data were anonymised before analysis. Details of all anonymised participant codes and data related to this research are publicly available in the Nottingham Research Data Management Repository under accession number: 10.17639/nott.7564.

Phase 1: mixed-method study with general users

We conducted a mixed-method study with 26 participants to compare perceptions when interpreting unstructured social media content (Reddit) vs using our dashboard in decision-making tasks. This phase explored DiscourseDash’s conceptual and functional usefulness through complex interactions. The study was conducted in English via Microsoft Teams between June and July 2024.

Recruitment and participants

Participants were recruited from the university research groups, including professors, researchers, and students. No further screening was applied. Participants were assigned to interact with the conditions in a counterbalanced order. To respect participant privacy and because demographic details were not relevant to the study’s aims, detailed demographic data were not collected. This aligns with prior evidence suggesting that participants may be uncomfortable sharing such information (Frederick, 2021). Participant codes (e.g., G1) serve as anonymised identifiers, where “G” denotes a general user. A complete list of participant codes, their assigned counterbalanced condition orders, interview dates, and anonymised excerpts from the transcripts is available in the dataset.

Study design

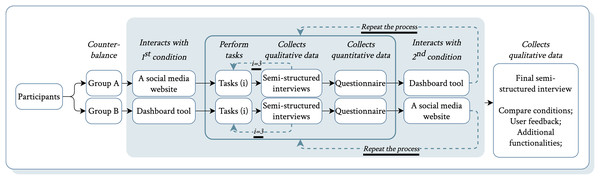

We employed a within-subjects design where participants completed three predefined tasks using two experimental conditions in counterbalanced order: (1) Reddit as a baseline, and (2) DiscourseDash. After each task, semi-structured interviews were employed to gather qualitative feedback, followed by questionnaires to quantify user experience. Following the completion of both conditions, participants engaged in a final interview to compare their experiences and provide feedback for improvements. This approach enabled an evaluation of DiscourseDash’s effectiveness in supporting decision-making, the usefulness of its features, and opportunities for user-centred enhancements.

Baseline justification: Baseline selection should reflect realistic, representative user practices (Purchase, 2012). Manual exploration of unstructured social media is common among experts such as journalists (Humayun & Ferrucci, 2022; Hellmueller et al., 2024). Thus, an unstructured social media platform serves as a realistic analogue. Reddit was chosen for its recognised open discourse structure: threaded comments, subReddits, upvotes, and downvotes (Hollender et al., 2010). Compared to Twitter/X or Facebook, Reddit’s longer discussions, clear hierarchical threads, and topic-focused communities make it suitable as a baseline for this study. We acknowledge Reddit may not capture all interaction types of other platforms. Yet, its open, community-driven nature represents unstructured social media well. For clarity, we define a root-level comment as equivalent to a Reddit post, and a child-level comment as equivalent to a Reddit reply (see Page 7).

Procedure

Participants were provided with an initial briefing on the research topic and the study’s objectives, which focused on evaluating the effectiveness of DiscourseDash in analysing online discourse. To minimise bias, the comparison between conditions was not disclosed. Each participant experienced two counterbalanced conditions: (1) baseline Reddit and (2) DiscourseDash. Participants began by familiarising themselves with the assigned condition. Drawing from virtual experience methodologies, a realistic narrative was provided to enhance ecological validity (Gorini et al., 2011). Participants assumed the role of a fact checker tasked with identifying newsworthy topics and crafting impactful articles, inspired by journalistic practices (Humayun & Ferrucci, 2022; Hellmueller et al., 2024). This scenario helped link DiscourseDash’s metrics with its intended impact (Lam et al., 2011). Then, participants completed three separate, predefined tasks specifically designed to reflect these realistic workflows. These tasks are detailed in File S1, Set A. During these tasks, participants used the think-aloud protocol to verbalise their thought processes (Jaspers et al., 2004). After each task, a semi-structured interview and questionnaire assessed their experience. This procedure was repeated for the second condition. The study concluded with a final semi-structured interview comparing both conditions to identify preferences and gather feedback. Participants received a £10 Amazon voucher for approximately 60 min of participation. Figure 9 illustrates the study conditions and procedure.

Figure 9: Overview of the study procedure in Phase 1.

Data collection and analysis

We used a mixed-method approach (Creswell, 2021) to gather both quantitative and qualitative data. Quantitative data provided measurable insights into participants’ interactions and perceptions, while qualitative data helped explore underlying reasons. All audio recordings were transcribed via Microsoft Teams, then manually reviewed to ensure accuracy. The transcriptions were then extracted and organised by questions, as shown in the provided dataset, allowing for a structured mapping of participant responses to facilitate the analysis process.

Data collection

Interview: We conducted semi-structured interviews to collect qualitative data. After each task in both conditions, participants reflected on their experience through a predefined set of sub-questions (see File S1, SET B), targeting insights into their interactions and decision-making. After completing both conditions, a final semi-structured interview was conducted to compare the conditions and identify improvements for DiscourseDash (see File S1, SET C).

Questionnaire: Participants completed Likert-scale questions evaluating task experience and usability for each condition. Shared questions assessed aspects like ease of topic prioritisation, while condition-specific questions addressed stance detection, sentiment analysis, harmfulness detection, popularity analysis, and discourse-level features. The System Usability Scale (SUS) (Brooke, 1996) was also employed for quantitative usability assessment. Complete questionnaires for DiscourseDash and Reddit conditions are provided in Files S2 and S3, respectively.

Data analysis

Qualitative analysis: We conducted thematic analysis (Braun & Clarke, 2012) to systematically identify, organise, and interpret patterns within the qualitative data. This method was selected for its ability to capture both explicit and implicit themes from participant narratives during interactions with DiscourseDash. The process began with familiarisation, where transcripts were reviewed and responses were summarised. Open coding was then conducted using an inductive, interpretive approach. Codes were iteratively refined through regular discussions to ensure consistency, accuracy, and alignment with the study’s research questions. Illustrative participant quotes are included to substantiate the findings.

Quantitative analysis: Quantitative questionnaire data collected after each interaction were analysed using appropriate non-parametric statistical tests for ordinal and paired data. To compare participant ratings between the two conditions, we applied the Wilcoxon Signed-Rank Test, which is suitable for related samples and non-normally distributed data. Additionally, the Friedman Test was used to evaluate differences across multiple related features within conditions. These analyses allowed us to assess the relative performance of both conditions. SUS are calculated by converting participant responses on a 5-point Likert scale into a total score ranging from 0 to 100, where scores above 68 are generally considered to indicate above-average usability. The average score across participants reflects overall user acceptance and satisfaction (Bangor, Kortum & Miller, 2009).

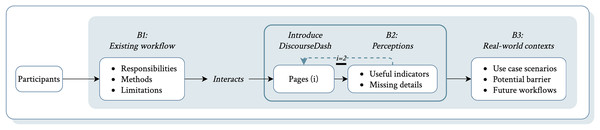

Phase 2: interview study with industry experts

Building on insights from Phase 1, we conducted semi-structured interviews with 11 industry experts experienced in analysing social media discourses for decision-making. The study aimed to explore their current workflows, practices for social media discourse analysis, experts’ perceptions of DiscourseDash, and suggestions for its practical integration in real-world contexts. Interviews were conducted in English via Microsoft Teams between March and April 2025.

Recruitment and participants

Participants were recruited through existing networks, direct outreach on LinkedIn, and targeted recruitment via Prolific (See File S5). The final sample includes 11 participants with diverse roles, responsibilities, and sector experiences. While most were UK-based, some had experience in Japan, Nigeria, and Europe. Initial expert selection was based on predefined criteria (see File S4, SET A), excluding years of experience as a sole indicator of expertise, since prior research shows it does not reliably reflect skill or judgment (Ericsson et al., 2018). Instead, we prioritised domain knowledge, contextual understanding, and reflective insights. Participants lacking these qualities during the interview were excluded from the final sample. Participant codes (e.g., E1) serve as anonymised identifiers, where ‘E’ denotes an expert. A complete list of participant codes, their profession, interview dates, and anonymised excerpts from the transcripts is available in the dataset.

Study deign

Our interview study employed an inductive approach to explore how experts interpret social media discourses and their needs for supportive tools. This allowed us to gather insights through pre-defined questions while allowing flexibility for emergent themes. The interview questions were divided into three parts. The first explored participants’ professional roles and workflows, including decision types, methods for analysing social media discourses, and workflow challenges. The second focused on their perceptions of the conceptual dashboard prototype, asking which features stood out and what was missing. The third focuses on practicality within experts’ workflows, inviting use case scenarios, potential barriers, and suggestions. Full question sets are provided in (see File S4, set B). All questions were open-ended, supplemented with prompts for clarity and coverage of key themes.

Procedure

The interview began with a brief introduction outlining the study goals and assuring participant confidentiality. Given the small sample size and focus on high-quality insights, a short demographic questionnaire was included to ensure diversity and contextualise perspectives. The interview comprised three stages. First, to establish a baseline, participants answered questions about their engagement with social media discourses in decision-making, current tools and methods, and their limitations (see File S4, set B: B1). Next, participants interacted with a working prototype of the DiscourseDash tool via a shared screen. Participants first explored the Overview page, followed by perception-focused questions regarding the design’s relevance and any missing elements relative to their workflow (see File S4, set B: B2). This process was repeated for the Detailed Analysis page to gather perception and relevance on DiscourseDash’s feature in providing in-depth discourse insights. Finally, participants reflected on their overall experience with DiscourseDash through examples of real-world use cases, adoption barriers, and integration considerations (see File S4, set B: B3). Participants received £20 Amazon vouchers for approximately 45 min of participation. Figure 10 illustrates the study design and procedure.

Figure 10: Overview of the study procedure in Phase 2.

The two key interactive pages are denoted as (i): when , this refers to the Overview Page; when , this refers to the Detailed Analysis Page.Data collection and analysis

All interviews were transcribed using Microsoft’s automated transcription service immediately after each session and manually reviewed for accuracy against the original audio. Relevant responses were extracted and organised according to interview questions, as reflected in the provided dataset. We applied the same thematic analysis approach as described above (See 14, Qualitative analysis), using inductive coding to structure the data by interview sections. Then, broader themes were developed through iterative team discussions to illustrate patterns aligned with our research objectives.

Results for phase 1: mixed-method study with general users

This section reports quantitative results from questionnaire ratings across conditions, followed by themes from the qualitative analysis. Together, they reveal user perceptions, feature usefulness, and areas for improvement.

Quantitative results

The following results are based on all responses collected from the Likert scale questionnaire after each interaction with a condition. The Wilcoxon Signed-Rank Test was chosen to compare ratings between two paired conditions by each participant, as it is suitable for non-parametric, non-normally distributed data. Additionally, we chose the Friedman Test to compare perceptions across multiple features, as it handles more than two related samples and is appropriate for ordinal data. All detailed questionnaire responses are provided in the dataset.

Comparative analysis of the ease of prioritisation

We conducted a Wilcoxon Signed-Rank test to compare the ease of identifying a topic between the Reddit baseline and DiscourseDash. The analysis revealed a Z statistic of and a p-value of . These results were statistically significant, suggesting that users perceive DiscourseDash as making it easier to prioritise topics for creating impact compared to the Reddit baseline.

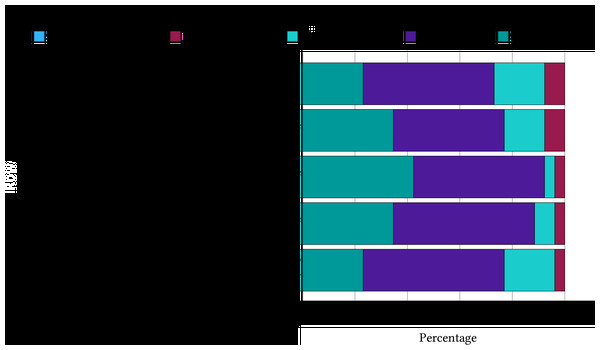

Evaluating the perceptions of the usefulness of dashboard features

To illustrate the Likert scale agreement by participants, we present our results in a stacked bar chart (see Fig. 11). Observing the proportions of “Agree” and “Strongly Agree”, we can see that these categories occupy the majority, indicating the relevance of our proposed features in achieving decision-making tasks.

Figure 11: A stacked bar chart shows the proportion of responses from participants evaluating each feature.

Comparative analysis of the perceptions of the usefulness of dashboard features amongst participants

Additionally, a Friedman test was conducted to compare the participants’ perceptions of the usefulness of features. The features evaluated are the harmfulness label (M = 2.56), stance detection (M = 2.90), sentiment analysis (M = 3.40), popularity analysis (M = 3.25), and discourse investigation (M = 2.88). Although sentiment analysis received the highest mean rank, the test did not reveal a statistically significant difference in perceived usefulness among the features, , . This suggests that participants rated the usefulness of these features similarly, with no feature standing out as significantly more or less useful than the others.

Preliminary usability testing outcomes

DiscourseDash achieved a SUS score of 78.9, which is well above the commonly accepted usability benchmark. This suggests that participants found DiscourseDash to be usable and accessible. This result reflects positive user perceptions of the system’s ease of use and overall design suitability for achieving the tasks provided. These initial usability scores indicate that the design flow was sufficiently intuitive, demonstrating its appropriateness for evaluation in the next phase of the study.

Thematic analysis

We conducted a thematic analysis of the qualitative data, organising it into three subsections. Each subsection highlights key themes from participant perceptions, providing an understanding by contrasting their experiences across both conditions.

General user perceptions of DiscourseDash

Participants consistently preferred DiscourseDash over the baseline social media platform Reddit for the given tasks, citing its efficiency in consolidating extensive data into actionable insights. G26 highlighted that “This would make it easy to justify your thoughts… then you can tell him [your superior] how many thousands of people are interested in this [topic] right off the bat.” Similarly, G15 noted, “I’d say that was much more informative and easier to navigate through.” Another frequently mentioned reason for preferring DiscourseDash was its visual clarity and ease of use. G19 commented, “DiscourseDash gave us a clear overview of where you should focus on.”

The ability of DiscourseDash to quickly access and interpret large volumes of data allowed participants to make more informed decisions, which is especially valuable in contexts where decisions need justification. G25 explained, “The graphical representation and all of these graphs were telling me exactly the information required to [achieve tasks] and finding out what’s going on [in the discourse].” Another participant, G15, added, “[Reddit] gave me comments that I can read through, but I would see the statistics and graphs [from DiscourseDash] to make sure I make an informed decision. By doing that passively, I not only get information from all the comment sections, but I also get to read through the comments if needed.” These suggest that DiscourseDash is perceived as an effective means of providing objective analysis for informed decision-making.

Theme 1: Effectiveness in guiding a decision-making process. Participants found that DiscourseDash facilitated a more structured approach to decision-making by providing a “good overview” (G16) that “helps to boil down the overall topics that are being discussed” (G6). For example, G1 compared their experiences and mentioned that “it gave a better overview… whereas [Reddit] is not conducive for me to find out the most important viewpoints and topics that I [as fact checker] should be addressing.” Participants perceived this structured approach as beneficial in professional settings when decisions require concrete reasoning. Another advantage mentioned is time efficiency. As G10 mentioned “It’s much faster and easier to identify the for and against positions that are already categorised for me.” Correspondingly, G13 found the stance and sentiment summary provided in DiscourseDash “very useful”, allowing participants to promptly grasp public opinion.

Contrary to Reddit, participants highlighted the challenges they faced with the same tasks. G3 explained, “[In Reddit] it takes ages to find what I want to do, and if I want to determine sentiments, I need to check these replies manually.” G19 shared a similar thought “ It’s like I need to read, for example, thousands of comments […] to get an idea [from Reddit] while the analysis here [dashboard] saves a lot of time […].” G23 added, “In Reddit, I have to like maybe read the comment twice to understand the sentiments and stances.” G6 pointed out that “if you want to do this work properly, you need a tool to help you […]. Maybe a ready dashboard […] there’s too much information [on Reddit] that is too hard to see the big picture.” Overall, DiscourseDash was perceived as more efficient and effective than manually navigating through large volumes of unstructured data.

Theme 2: Guidance towards articulation of ideas. Participants highlighted how DiscourseDash facilitates understanding of discourse by revealing ideas, correlations, and trends. For example, G15 articulated their view that “The discussion is high on the first topic, which states that there is some confusion or statements made without proper facts being spread […]. Here [stance and sentiment analysis] clearly states that they are contradictory opinions, and that’s not being resolved.” Similarly, popularity was useful in revealing correlations between different topics of discourse. For example, G16 articulated their understanding of the interconnected trends across various topics, noting that “whenever there’s a spike in topic one, there’s a spike in topic three as well. This [particular topic] is also interesting because it follows a correlation with these [two other topics].”

Another example illustrates the interpretation of some ideas around the discourse by G2, “[…] at the same time they are talking about the second dose, they are also talking about side effects, with [negative sentiments towards it] […] they don’t like vaccines and very few of them are thankful for all the work done [for vaccines] so far.” Another key observation, G7 illustrated how the data trends in DiscourseDash stimulated curiosity “I tend to [want to find out] what happened around that time [a decrease in popularity]. What kind of event might have occurred at that point?” These analyses demonstrated how DiscourseDash supports and guides a user’s thought process.

An example of how stance and sentiment analysis shape participants’ ideas, G18 concluded their thoughts that “What I can make out is that people are happier in taking a vaccine rather than not taking it […] because from both the graphs [stance and sentiment], […] the number of positive is more and here the favour is more than against.” While the harmfulness label was interpreted as “high rating of harmfulness is probably more important” (G10). Adding to that, G17 “feel harmfulness also determines newsworthiness […] So I’m leaning [my decision] towards the first two [topics labelled as high harmfulness].” Instead of the term harmfulness, G18 “would rather it be a saying impact, but I guess it denotes the negative sense of these topics.” These observations highlight how the harmfulness label was used and interpreted throughout the decision-making process.

Finally, the Detailed Analysis facilitated participants in formulating ideas about the factors influencing different stances. For example, G15 articulated their understanding that “there are some people who want to take the vaccine, they show negative sentiment […] because of limited supply and they feel insecure as they are the essential workers, […] people who have a neutral stance and sentiment, they are not exactly sure how the vaccination works and how it helps.” Another informed idea articulated by G25 is “a lot of people are asking how the government is going to [take action towards the vaccine], […] there’s a lot of confusion.” Consequently, most participants expressed similar ideas when concluding their investigation.

Theme 3: Supporting trust through detailed discourse-level analysis. Several participants expressed initial scepticism toward the overview page due to limited transparency. For instance, G6 remarked, “I have to blindly trust this classification […] I have no idea what data this has been generated on.” Similarly, G26 asked, “Where are the sources from?” However, after exploring the Detailed analysis page, G6 noted, “I do have access to the data […] this addresses what I criticised [previously]”. This shift illustrates how access to underlying discourse-level data can improve perceived explainability and trust.

Another key observation highlighting how Detailed analysis facilitated the investigative tasks is evident in G26’s experience. They initially described having difficulty distinguishing between stance and sentiment. However, they noted that examining examples in the discourses helped them better understand these concepts, highlighting the value of this feature in clarifying their meaning. They expressed that their understanding of stance and sentiment, and their correlation, was “not very clear until I toggled around.” After reviewing several “different combinations and permutations [of stance and sentiment pairs]” in the discourse, G26 understood the correlation better. This suggests that while the overview page provides a high-level summary, examining the detailed data in the discourses is necessary to understand the complexities behind these summaries.

Theme 4: Concerns about analysis measures. A common concern raised during the study was the disagreement participants experienced between their manual inspection of the data and the labels provided on DiscourseDash. For example, G16 pointed out that DiscourseDash “incorrectly marked some sentiments” indicating perceived inaccuracies in the sentiment classification. Similarly, G6 stated that “when I dig into the data, which I think is important that I have the option to, I very quickly identify several [labels] for me don’t match up [with own judgement], […] which reduces the reliability of what I saw in overview.” These observations suggest that improving transparency in the labelling process is necessary to enhance trust and confidence in the system’s outputs.

Usefulness of features in influencing decisions

Here, we grouped the features mentioned by participants during their interactions to show how they evaluated each feature’s usefulness when performing tasks on DiscourseDash.

Theme 1: Effectiveness of harmfulness labels. Harmfulness labels were indicated as helpful in guiding the prioritisation of topics that were both widely discussed and deemed significant due to their potential impact. For example, G16 noted that “the harmfulness is quite high. So I’m leaning towards like the first two.” Similarly, G17 articulated that “harmfulness also determines newsworthiness, so it might be important to [prioritise] more harmful news.” They recognised that higher harmfulness signifies more pressing issues that deserve greater attention in decision-making. However, some participants expressed confusion about the interpretation of harmfulness levels. For example, G22 pointed out that “I don’t understand what the high harmfulness means […]” though they still interpreted that “if the harmfulness is high, then it is important.”

Theme 2: Roles of stance and sentiment analysis. The analysis of varying stances and sentiments reveals how these factors can influence the perceived importance of a topic. For example, G19 mentioned that “although people talked about it [the topic] a lot [from the stance detection], not a lot of people have a clear opinion on that [topic]. So, that might [warrant] a discussion in the article [as a fact checker to address it].” This example shows that the presence of diverse opinions signals to users to explore the discourse more thoroughly. Additionally, G26 observed that stance detection helps to correlate public sentiment, stating “we can tell that not many people are just singing to the choir; the public seems to agree on this [topic] but may have more questions about it.” This indicates areas of both agreement and contention, suggesting topics that may benefit from further investigation. G22 mentioned, “I think for me the stances and sentiments help shape which [topic of discourse] is important […] because if you have [a contrasting opinion like against a topic] it enriches the discussion and it’s more exciting [to investigate].”

Theme 3: Use of popularity over time. Participants leveraged popularity over time to connect shifts in interest with specific events. For example, G19 observed, “Especially here [spike in the graph] you can see some different changes, which might correspond to specific events like news that happened at that moment.” The potential application of popularity for future content planning by fact-checkers was also noted. G26 noted “comparing these [topics], although there is a decreasing amount of interest in the general vaccine, […] maybe a follow-up article like what might inform my work [as a fact checker] next would be about the second dose, which is the next trending topic [observed from the trends].” This shows how tracking popularity trends prepares decisions for interventions as a fact-checker. Besides, G07 mentioned the longitudinal aspect of popularity data commenting, “[during] the investigation, focusing on specific points like maybe this part [decrease in engagement trend] would be interesting to see what kind of events happened.” Overall, “although [a topic] is less popular than [another] topic” the popularity graph helped participants determine that “it’s rising in importance” based on the emerging relevance.

Results for phase 2: interview study with industry experts

This section presents qualitative findings from Phase 2 interviews with industry experts. A thematic analysis of expert response summaries identified recurring themes, highlighting shared insights and perspectives. These themes reveal how experts perceive DiscourseDash, its alignment with workflows, and considerations for future design.

Thematic analysis

Findings are organised around the structure of the interview: interactions with DiscourseDash before, during, and after use. This structure supports a systematic presentation of themes to our research questions, with each section reflecting insights relevant to that stage. Themes are presented in a mixed format based on their proportion of contribution: tables are used for brevity; long-form paragraphs offer more evidence that directly addresses the research questions (consistent with Phase 1); and bullet points provide concise yet elaborated information to enhance readability. This approach balances clarity with space efficiency. Additional details are available in the dataset.

Understanding existing workflows before we introduce DiscourseDash

This section demonstrates how experts engage with social media discourses—their roles, workflows, tools, and challenges to set the scene for the next results section.

Theme 1: Usage of social media discourses in decision-making contexts. This theme contextualises how experts engage with social media discourses in their day-to-day decision-making. Practices from their domains show how understanding discourses is interconnected within their organisational workflows and priorities. This means that they influence both high-level strategies and immediate responses (see Table 3).

| Sub-themes | Description |

|---|---|

| Integrating insights into strategic coordination | Supports both long-term strategy and immediate response by turning discourse data into reports that align team efforts and inform decisions. |

| Understanding audiences for public communication | Awareness of audience perspectives and platform dynamics helps tailor messaging, build trust, and sustain engagement. |

| Responding to crisis and misinformation | Experts monitor discourses to act quickly during crises, applying cultural sensitivity, detecting issues early, and preventing harm. |

| Using public signals for reporting and policy | Social media signals support evidence-based reporting for government, emergency response, and internal policy planning. |

Theme 2: Guiding factors in decision-making. Experts described workflows from data aggregation and analysis to planning and management, highlighting factors from social media discourses that guide decisions. We identified three consistent patterns: (1) data-driven indicators, including measurable metrics and observable patterns; (2) interpretive insights, involving subjective assessments and qualitative readings; and (3) situational/contextual dependence, where decisions are shaped by organisational goals, stakeholder expectations, or broader events (see Table 4).

| Sub-themes | Description |

|---|---|

| Data-driven indicators in practice | Experts use metrics such as engagement rates, follower growth, reach, and content performance to plan and assess strategies. They also consider demographics (age, gender, profession) to tailor messages for specific audiences. |

| Interpreting insights from social media discourses | Experts analyse sentiment, tone, and keywords to gauge public opinion and track emerging topics. For example, E10 monitors sentiment changes to update responses, while E11 uses these signals to combat misinformation with culturally sensitive approaches. |

| Situational and contextual dependence | Experts view social media as one part of a larger process, combining it with surveys, interviews, or fieldwork for a richer understanding. Some prefer controlled studies for reliability, while others value anonymised interviews in sensitive contexts. |

Theme 3: Navigating social media discourses. Experts tasked with interpreting social media discourses face challenges not only in data collection but also in making sense of this rapid, extensive, and often ambiguous information. We summarise how they navigate this space, extracting transferable insights relevant to their decision-making workflows. Our findings identify two approaches: (1) manual exploration and (2) automated analysis. These approaches are used independently or in combination, depending on the needs (see Table 5).

| Sub-themes | Description |

|---|---|

| Manual exploration | Experts typically begin with social media features such as trending topics, hashtags, and keywords to filter relevant discourses, followed by manual review to extract insights that guide interpretation. |

| Adopting automated or software tools | Used for handling large-scale or time-sensitive data. These include: AI tools for categorisation, summarisation, and sentiment analysis; social media monitoring/listening tools for managing accounts, tracking engagement, mentions, and sentiment; and data analytics software for detailed data structuring, management, and visualisation tailored to domain-specific needs. |

Theme 4: Limitations of current approaches in workflows. This section summarises key limitations experts identified in their current methods for navigating and analysing social media discourse, informing how the design of DisocurseDash addresses these challenges and highlighting the need for improved tools across workflows (see Table 6).

| Sub-themes | Description |

|---|---|