Chicken swarm optimization and associated variants: a literature review

- Academic Editor

- Ankit Vishnoi

- Subject Areas

- Artificial Intelligence, Optimization Theory and Computation

- Keywords

- Chicken swarm optimization, Metaheuristic algorithm, Optimization

- Copyright

- © 2026 Liu et al.

- Licence

- This is an open access article distributed under the terms of the Creative Commons Attribution License, which permits unrestricted use, distribution, reproduction and adaptation in any medium and for any purpose provided that it is properly attributed. For attribution, the original author(s), title, publication source (PeerJ Computer Science) and either DOI or URL of the article must be cited.

- Cite this article

- 2026. Chicken swarm optimization and associated variants: a literature review. PeerJ Computer Science 12:e3609 https://doi.org/10.7717/peerj-cs.3609

Abstract

Chicken swarm optimization (CSO) is a biologically inspired metaheuristic algorithm that imitates the hierarchical behavior of chicken flocks. CSO offers strong global search ability, high stability, and strong multi-subgroup collaborative search ability, and it has considerable research potential. It is widely used for real-world optimization problems. However, CSO also suffers from slow convergence, insufficient local search ability, and a tendency to fall into local optima. Therefore, various variants of CSO have been proposed. This article presents a structural review of CSO and its variants published between 2014 and 2025. The review systematically organizes and synthesizes existing research by outlining the fundamental principles of CSO, categorizing improvement strategies, and analyzing the characteristics and performance of different variants. Instead of conducting a comprehensive empirical study, this structural review focuses on mapping algorithmic developments, identifying methodological trends, and summarizing application domains such as engineering design, energy systems, image processing, and intelligent diagnosis. Furthermore, the review highlights current limitations of CSO, discusses theoretical considerations, and provides future research directions. The presented structural framework offers clearer insights into the evolution of CSO and serves as a reference for researchers seeking to understand or extend CSO-based methods.

Introduction

In both social interactions and engineering design, we frequently encounter a multitude of optimization problems (Braik, Sheta & Al-Hiary, 2021). The fundamental objective of optimization lies in crafting a suitable objective function, such that its extreme value solution aligns precisely with the sought-after optimal solution (Gendreau & Potvin, 2005). In essence, optimization involves identifying the most favourable solution from the range of values assumed by decision variables within specified conditions (Blum & Roli, 2003). This endeavour translates into determining the maximum or minimum value of a fitness function subject to a set of constraints (Gebreel, 2021). With the escalating demands for improved quality of life and industrial advancement, the intricacy of optimization problems has correspondingly surged, involving an increasing number of objectives and constraints, thereby posing formidable challenges to the study of optimization problems (Hannan et al., 2020). Consequently, in the face of increasingly complex challenges in practical engineering applications (Bai et al., 2023), the study of optimization problems is of great significance to social development (Qin, Zain & Zhou, 2022).

As computer speeds continue to increase, the complexity of optimization problems has also increased (Chen et al., 2024b). Many existing technologies are unable to solve these more complex optimization problems (Pannu, Singh & Malhi, 2019). At the same time, the methods for solving optimization problems are also expanding. In order to make up for the limitations of other classical optimization methods in dealing with these problems, the advantages of metaheuristic algorithms in the study of optimization problems have become increasingly prominent (Weerasuriya et al., 2021).

Metaheuristic algorithms are an algorithmic framework that is generally applicable to a variety of optimization problems. They can be adapted to specific problems with only minor modifications and presented in a more intuitive form, making them easier to understand (Yue et al., 2021). However, metaheuristic algorithms are not specifically designed for specific problems. Their methods are usually approximate and inherently allow for simple parallel implementations (Tomar, Bansal & Singh, 2023). Osman (2003) divided metaheuristic algorithms into construction-based metaheuristic algorithms, local search algorithms, and population-based metaheuristic algorithms. Among them, local search algorithms optimize results by repeatedly fine-tuning a single solution, while construction-based and population-based metaheuristic algorithms build a complete solution by gradually adding some components. Fister et al. (2013) further divided existing metaheuristic algorithms into non-natural heuristic algorithms and natural heuristic algorithms, the latter of which include algorithms based on swarm intelligence, biological heuristic algorithms, and algorithms inspired by different factors such as social and emotional factors. Over time, various algorithms have continued to evolve and develop (Ye et al., 2023). Since the swarm optimization technology was proposed, it has received widespread attention. Many researchers have conducted in-depth research on it from different angles, focusing on its convergence, robustness, and other characteristics (Wang et al., 2023c). Akyol & Alatas (2017) proposed another similar classification, Binitha & Sathya (2012) proposed another biologically inspired metaheuristic algorithm, and Ruiz-Vanoye et al. (2012) proposed an algorithm based on animal populations.

Meng et al. (2014) first proposed the chicken swarm optimization algorithm (CSO). They believe that CSO is an intelligent optimization algorithm that plans parameter change processes based on the behavior of chicken swarms. With the joint efforts of scholars around the world, the algorithm has achieved remarkable results in the application of optimization problems by improving parameter settings and introducing new mechanisms and strategies, and has been successfully applied to many practical engineering fields, such as energy management of smart grids (Nuvvula et al., 2022), image processing (Cristin, Kumar & Anbhazhagan, 2021), feature extraction (Zhang et al., 2022), etc. Many researchers continue to innovate and provide rich cases and implementation methods for the chicken swarm optimization algorithm. Nuvvula et al. (2022) combined the differential evolution algorithm with the CSO algorithm for energy management of power grids to explore whether the algorithm can reduce power consumption and costs. Yu et al. (2022) used the CSO algorithm for feature extraction and proved the advantages of the swarm optimization algorithm in the field of feature extraction by comparing it with other algorithms. Therefore, in-depth research and application on optimization problems make CSO of great significance in solving optimization problems in social life and industrial fields.

CSO is recognized as an effective metaheuristic algorithm with advantages such as a simple principle, easy implementation, simple parameter setting, and strong global search ability (Wang et al., 2020). At the same time, it has disadvantages, such as a tendency to fall into local optima and slow convergence. These disadvantages arise because the standard CSO algorithm cannot adaptively adjust its parameters according to the current optimization results (Qu et al., 2017). For these reasons, many scholars have proposed CSO variants that target these weaknesses.

In recent years, biologically inspired meta-heuristic algorithms have continued to evolve in complex and dynamic optimization scenarios, and three cutting-edge trends have emerged that are closely related to this article. The first category is the fusion of quantum inspiration and swarm algorithms: injecting ideas such as quantum state superposition and tunneling into swarm search to enhance global exploration and the ability to escape local optimality. Representative work includes the hybrid and gravity-guided framework of quantum-inspired particle swarms (QIGPSO), which shows faster convergence and stronger robustness in high-dimensional and feature selection tasks; this type of method provides a structural advantage for rapid relocation in dynamic environments (Malik et al., 2025). The second category is the systematization and engineering of “quantum-meta-heuristic” methods: Recent reviews have summarized the scalable solutions and implementation points of quantum-inspired meta-heuristics in complex constrained problems, starting from applications such as Industry 4.0 and network security, and emphasizing the use of quantum mechanisms to enhance the search efficiency and adaptability of classical algorithms in uncertain environments (Sood, 2025). The third category is the structural transformation of CSO and its multi-objective/dynamic extensions: for example, non-dominated sorting CSO (NSCSO) couples multi-objective Pareto dominance with the social structure of the flock; while adaptive/enhanced CSO variants for combination and dynamic scenarios achieve a more robust trade-off between early diversity maintenance and late convergence acceleration (Huang et al., 2024). 
At the same time, the latest CSO review points out that in high-dimensional, noisy, and dynamically changing scenarios, traditional CSO still suffers from premature convergence and insufficient convergence speed, which need to be alleviated through hybrid strategies such as adaptive perturbation, differential mutation, elite retention, and cross-paradigm mechanisms (such as quantum inspiration) (Chen et al., 2024a).

The difference between the CSO and other algorithms

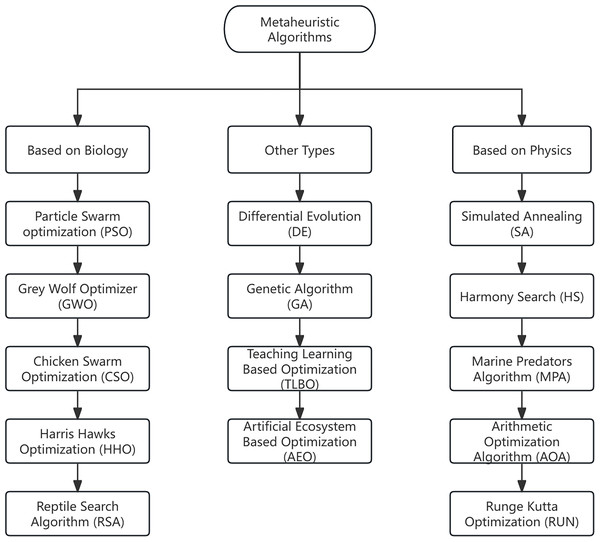

Swarm optimization represents a metaheuristic approach drawing inspiration from diverse fields such as biological science (Tang et al., 2024; Wang, Yue & Cao, 2022), operations research, and physics (Qin et al., 2025). It stands as a vital methodology for addressing real-world optimization challenges. Metaheuristic techniques construct tailored models for particular problems by delving into the dynamics of biological, chemical, and social systems, subsequently engineering intelligent, iterative search and optimization algorithms (Abdel-Basset, Abdel-Fatah & Sangaiah, 2018). Some notable examples include Genetic Algorithm (GA) (Holland, 1992), Differential Evolution (DE) (Storn & Price, 1997), CSO (Meng et al., 2014), Particle Swarm Optimization (PSO) (Kennedy & Eberhart, 1995), Grey Wolf Optimizer (GWO) (Mirjalili, Mirjalili & Lewis, 2014), Harris Hawks Optimization (HHO) (Heidari et al., 2019), Reptile Search Algorithm (RSA) (Abualigah et al., 2022), Teaching–Learning–Based Optimization (TLBO) (Rao, Savsani & Vakharia, 2011), Artificial Ecosystem–Based Optimization (AEO) (Zhao, Wang & Zhang, 2020), Simulated Annealing (SA) (Kirkpatrick, Gelatt & Vecchi, 1983), Harmony Search (HS) (Geem, Kim & Loganathan, 2001), Marine Predators Algorithm (MPA) (Faramarzi et al., 2020), Arithmetic Optimization Algorithm (AOA) (Abualigah et al., 2021), and Runge–Kutta Optimization (RUN) (Yıldız et al., 2022) (see Fig. 1). With the continuous development of various metaheuristic methods, each has shown its own advantages in practical optimization problems (Zitar, 2022). CSO is a stochastic optimization method that simulates the hierarchical structure and foraging behavior of chicken swarms (Meng et al., 2014). Compared with several other algorithms, it has been reported to offer fast convergence, high search accuracy, and strong robustness on a range of problems. With these advantages, CSO has outperformed several classical algorithms in reported experiments and shows broad application prospects.

Figure 1: Different metaheuristic algorithms.

To further clarify the relative position and characteristics of CSO among the metaheuristic algorithms presented in Fig. 1, a concise comparison with several representative algorithms is provided below. PSO models the collective movement of bird flocks: each particle updates its velocity and position by learning from its own best record and the swarm’s global (or neighborhood) best. This social-learning mechanism often yields fast convergence on continuous optimization problems but can suffer from premature stagnation without diversity control. Numerous variants add inertia weights, constriction factors, or topology changes to balance exploration and exploitation. Compared with CSO’s subgroup hierarchy, PSO uses a single-swarm structure and typically has fewer control rules, which simplifies implementation but may reduce robustness on complex multimodal landscapes (Kennedy & Eberhart, 1995).
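
The velocity and position update described above can be sketched as follows. This is a minimal illustration that assumes the common inertia-weight form of PSO; the function name `pso_step` and the default values of `w`, `c1`, and `c2` are illustrative choices, not settings taken from the reviewed studies.

```python
import random

def pso_step(positions, velocities, pbest, gbest, w=0.7, c1=1.5, c2=1.5, rng=random):
    """Apply one inertia-weight PSO update in place and return the positions."""
    for i, (x, v) in enumerate(zip(positions, velocities)):
        for d in range(len(x)):
            r1, r2 = rng.random(), rng.random()
            # inertia term + pull toward the particle's own best + pull toward the swarm best
            v[d] = w * v[d] + c1 * r1 * (pbest[i][d] - x[d]) + c2 * r2 * (gbest[d] - x[d])
            x[d] += v[d]
    return positions
```

Every particle is attracted to the same `gbest`, which is the single-swarm structure that the comparison with CSO's subgroups refers to.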

GWO imitates the leadership hierarchy (alpha, beta, delta, omega) and encircling–attacking behaviors of grey wolves. Candidate solutions are guided by the weighted influence of the top wolves, gradually shrinking the search radius to intensify exploitation. Its rank-based leadership facilitates stable convergence with limited parameters, though it may converge early if elites are misleading. Relative to CSO, which uses role-specific motion rules (roosters, hens, chicks), GWO emphasizes elite guidance and distance-shrinking dynamics (Mirjalili, Mirjalili & Lewis, 2014).
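
As a rough sketch of the elite-guided update, the snippet below follows the standard GWO form in which the three best wolves contribute with equal weight. The function name `gwo_step` is hypothetical, and the control parameter `a` is assumed to be decreased from 2 to 0 by the caller over the run.

```python
import random

def gwo_step(wolves, alpha, beta, delta, a, rng=random):
    """Return new positions guided by the three best wolves (standard GWO form)."""
    new_wolves = []
    for x in wolves:
        new_x = []
        for d in range(len(x)):
            guided = []
            for leader in (alpha, beta, delta):
                A = 2 * a * rng.random() - a      # step coefficient in [-a, a)
                C = 2 * rng.random()              # random emphasis on the leader in [0, 2)
                dist = abs(C * leader[d] - x[d])
                guided.append(leader[d] - A * dist)
            new_x.append(sum(guided) / 3.0)       # equal-weight average of the three pulls
        new_wolves.append(new_x)
    return new_wolves
```

As `a` shrinks, the coefficient `A` shrinks with it, so the wolves settle closer to the leaders; this is the distance-shrinking dynamic mentioned above.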

HHO is inspired by the surprise pounce tactics of Harris hawks and alternates between exploration and exploitation via soft/hard besiege phases. A time-varying energy factor and randomized jumps help the algorithm switch strategies and avoid local traps. HHO has shown strong performance on complex functions and engineering tasks, but can still stagnate without diversification. Compared with CSO, HHO relies on an adaptive “attack” model rather than subgroup roles; hybrids often leverage CSO’s diversity and HHO’s aggressive exploitation (Heidari et al., 2019).

RSA mimics reptilian hunting—tracking, encircling, and ambushing—to design exploration and exploitation steps with adaptive control. It promotes wide coverage early and focuses on local refinement near promising regions later; several works report competitive results on high-dimensional benchmarks and feature selection. RSA, however, may require parameter tuning to maintain diversity. In comparison with CSO’s hierarchical interactions, RSA uses stage-driven movement policies; CSO–RSA hybrids are used to combine diversity (CSO) with RSA’s strong intensification (Abualigah et al., 2022).

DE is a classical evolutionary population heuristic that perturbs target vectors by scaled differences of randomly sampled individuals, followed by crossover and selection. Its few, interpretable parameters and powerful self-recombination make it a staple for continuous, nonconvex optimization. DE may stagnate if diversity vanishes, motivating adaptive and ensemble strategies. Relative to CSO, DE’s operators are vector arithmetic rather than behavior rules; CSO–DE hybrids often inject DE’s strong local refinement into CSO’s diverse search (Storn & Price, 1997).
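
The mutation, crossover, and selection steps can be illustrated with the widely used DE/rand/1/bin scheme. The sketch below assumes minimization and a population of at least four individuals; the name `de_generation` and the defaults `F=0.5` and `CR=0.9` are illustrative assumptions.

```python
import random

def de_generation(pop, fitness, F=0.5, CR=0.9, rng=random):
    """One DE/rand/1/bin generation for minimization; returns the new population."""
    n, dim = len(pop), len(pop[0])
    new_pop = []
    for i, target in enumerate(pop):
        # three distinct individuals, all different from the target
        r1, r2, r3 = rng.sample([k for k in range(n) if k != i], 3)
        j_rand = rng.randrange(dim)  # guarantees at least one mutated component
        trial = []
        for d in range(dim):
            if rng.random() < CR or d == j_rand:
                # mutation: base vector plus a scaled difference vector
                trial.append(pop[r1][d] + F * (pop[r2][d] - pop[r3][d]))
            else:
                trial.append(target[d])  # binomial crossover keeps the target component
        # greedy selection: keep the trial only if it is no worse than the target
        new_pop.append(trial if fitness(trial) <= fitness(target) else list(target))
    return new_pop
```

Because selection is greedy per individual, no member of the population gets worse from one generation to the next, which is also why diversity can vanish without adaptive strategies.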

GA formalizes Darwinian evolution—selection, crossover, and mutation—on encoded candidate solutions. Schema propagation enables broad exploration of the search space, while niche methods maintain diversity on multimodal problems. Drawbacks include parameter sensitivity and potentially slow convergence without elitism or adaptive rates. Compared with CSO’s role-based dynamics, GA focuses on genetic recombination; recent CSO hybrids using GA-style crossover can increase diversity between subgroups (Holland, 1992).

TLBO simulates a classroom where solutions learn from the best “teacher” (teacher phase) and from peers (learner phase). It needs few algorithm-specific parameters and often provides robust baseline performance across engineering designs. The absence of explicit mutation can slow escape from deep local minima; many improved TLBOs add adaptive or chaotic strategies. When paired with CSO, TLBO’s parameter-light exploitation complements CSO’s exploration from subgroup interactions (Rao, Savsani & Vakharia, 2011).

AEO abstracts producers, consumers, and decomposers in an ecosystem to construct sequential update modes for exploration (production/consumption) and exploitation (decomposition). The alternating roles encourage both diversity and intensified search near high-quality regions. Studies since 2020 show competitive results on engineering and energy scheduling problems; performance depends on properly balancing stage frequencies. Against CSO, AEO is also multi-role but emphasizes stage cycling of the entire population rather than fixed social hierarchies (Zhao, Wang & Zhang, 2020).

SA is a single-solution metaheuristic based on thermal annealing: at high “temperature,” worse moves may be accepted to escape local minima; as temperature cools, the search becomes greedy. Its simplicity and theoretical grounding are appealing, but performance hinges on a well-designed cooling schedule. SA is often embedded in hybrids for local polishing. In CSO contexts, SA can serve as a post-optimizer to refine CSO’s best subgroup solutions (Kirkpatrick, Gelatt & Vecchi, 1983).
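
The temperature-dependent acceptance of worse moves can be sketched with the Metropolis rule and a geometric cooling schedule. The function name `sa_minimize`, the uniform neighborhood move, and all default values are assumptions made for illustration.

```python
import math
import random

def sa_minimize(f, x0, step=0.5, t0=1.0, cooling=0.95, iters=500, rng=random):
    """Minimize f from x0 with simulated annealing; returns (best_point, best_value)."""
    x, fx = list(x0), f(x0)
    best, fbest = list(x), fx
    t = t0
    for _ in range(iters):
        cand = [v + rng.uniform(-step, step) for v in x]
        fc = f(cand)
        # Metropolis rule: always accept improvements, accept worse moves with prob. exp(-delta/t)
        if fc <= fx or rng.random() < math.exp(-(fc - fx) / t):
            x, fx = cand, fc
            if fx < fbest:
                best, fbest = list(x), fx
        t = max(t * cooling, 1e-12)  # geometric cooling, floored to avoid division by zero
    return best, fbest
```

At high `t` the acceptance probability for a worse move is close to 1; as `t` decreases it approaches 0 and the search becomes greedy, which matches the behavior described above.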

HS emulates musical improvisation: new “harmonies” (solutions) are generated from a memory bank using memory consideration, pitch adjustment, and randomization. The memory structure maintains diversity while incremental pitch changes provide local improvement. Parameter settings (HMCR, PAR, bandwidth) influence the balance of exploration and exploitation. CSO–HS hybrids use HS’s memory to stabilize subgroup search while CSO supplies behavioral diversity (Geem, Kim & Loganathan, 2001).
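
A minimal sketch of the improvisation step is given below. It assumes a continuous search space with box bounds; the function name `improvise` and the default values of `hmcr`, `par`, and `bw` (bandwidth) are illustrative rather than recommended settings.

```python
import random

def improvise(memory, lower, upper, hmcr=0.9, par=0.3, bw=0.1, rng=random):
    """Build one new harmony from the harmony memory (a list of solution vectors)."""
    new = []
    for d in range(len(lower)):
        if rng.random() < hmcr:
            value = rng.choice(memory)[d]            # memory consideration
            if rng.random() < par:
                value += rng.uniform(-bw, bw)        # pitch adjustment
        else:
            value = rng.uniform(lower[d], upper[d])  # random selection
        new.append(min(max(value, lower[d]), upper[d]))  # clip to the bounds
    return new
```

In a full HS loop, the new harmony would replace the worst member of the memory when it is better; that replacement step is omitted here.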

MPA models predator–prey foraging with Brownian/Lévy motions and a fish aggregating devices (FADs) mechanism to alternate wide-range exploration and focused pursuit. It is noted for strong performance on complex multimodal tasks and has been widely applied since 2020 in imaging, energy, and scheduling. Parameter control remains important to avoid drift. Combining MPA with CSO often couples MPA’s long-jump exploration with CSO’s structured subgroup learning (Faramarzi et al., 2020).

AOA designs four arithmetic operators (addition, subtraction, multiplication, division) with a density factor and accelerator to shift from diversification to intensification. Its operator set yields flexible step sizes and competitive results with modest parameter burden. Reported issues include sensitivity to density scheduling on rugged landscapes; adaptive AOA variants address this. With CSO, AOA operators can replace or augment role updates to refine exploitation without sacrificing diversity (Abualigah et al., 2021).

RUN leverages the Runge–Kutta integration scheme to guide candidate updates via higher-order local approximations, improving exploitation accuracy while retaining randomized exploration. It has achieved strong results on engineering design and feature selection since 2022. Tuning exploration terms is key to avoid overfitting local regions. In CSO hybrids, RUN often acts as a local search layer to sharpen the best rooster/hen positions (Yıldız et al., 2022).

To provide a clearer comparison between CSO and other representative metaheuristic algorithms, Table 1 summarizes their structural features, strengths, and limitations.

Table 1: Comparison of CSO with representative metaheuristic algorithms.

| Algorithm | Inspiration source | Structure type | Strengths | Limitations |
|---|---|---|---|---|
| CSO | Chicken hierarchy | Multi-subgroup | Strong exploration, robust search | Parameter sensitivity |
| PSO | Bird flocking | Single-swarm | Fast convergence, easy implementation | Premature convergence |
| GA | Biological evolution | Population-based | Good diversity, global search | Slow convergence |
| DE | Differential vectors | Population-based | Few parameters, strong optimizer | Risk of stagnation |
| GWO | Wolf pack hierarchy | Leader-based | Stable convergence, few parameters | Early convergence risk |
| HHO | Hawk hunting | Phase-based | Strong exploitation, adaptive | May stagnate on multimodal problems |
| RSA | Reptile behavior | Stage-based | Balanced search, flexible control | Needs parameter tuning |
| TLBO | Teaching-learning | Two-phase model | Few algorithm-specific parameters, stable performance | Weak escape ability |
| AEO | Ecosystem roles | Role-cycling | Balanced exploration/exploitation | Stage frequency sensitive |
| SA | Thermal annealing | Single-solution | Good local refinement | Depends on cooling schedule |
| HS | Music improvisation | Memory-based | Diversity via memory, flexible | Sensitive to parameters |
| MPA | Predator–prey search | Mixed strategy | Strong in multimodal problems | Drift without tuning |
| AOA | Arithmetic operators | Operator-based | Flexible steps, few parameters | Density scheduling sensitive |
| RUN | Runge–Kutta scheme | Math-driven update | Accurate exploitation | Risk of overfitting locally |

Research objective and motivation

The objective of this study is to provide a structural review of CSO and its variants, offering an organized synthesis of algorithmic mechanisms, improvement strategies, and application trends. Rather than performing a comprehensive empirical investigation, this review analyzes and classifies existing research according to algorithmic strategies, highlights the evolution of CSO, and identifies research gaps that remain unaddressed. Specifically, the article first introduces the standard CSO, including its inspiration, main components, flowchart, and algorithm steps. It then outlines the research methods used, the improvements made to the CSO algorithm, and the research and application of CSO in different fields. Finally, it presents a discussion and conclusion.

Although two prior CSO reviews exist, both leave clear gaps that justify the present study. The early survey “Recent Studies on Chicken Swarm Optimization Algorithm: A Review (2014–2018)” concentrates on the formative stage of CSO and mainly reports the standard model with a few preliminary variants, without a structured taxonomy of improvement strategies (e.g., hybridization, adaptive control, binary/discrete modeling, or multi-objective extensions), cross-domain performance comparisons, or quantitative trend analysis (Deb et al., 2020a). The more recent “A comprehensive survey on the chicken swarm optimization algorithm and its applications: state-of-the-art and research challenges” summarizes developments up to roughly 2023 and outlines application areas, but it does not provide (i) a year-wise publication distribution or an objective-oriented categorization (single vs. multi-objective; continuous vs. binary; hybrid vs. non-hybrid), (ii) a consolidated comparison of algorithmic mechanisms and parameter-adaptation schemes across CSO variants, or (iii) an explicit mapping from CSO strategy families to recurring problem archetypes (e.g., engineering design, energy scheduling, WSN routing, medical diagnosis) (Chen et al., 2024a).

In contrast, this review covers 2014–2025 and contributes: (i) an objective- and strategy-based taxonomy that organizes CSO variants (hybrid, adaptive, binary, multi-objective) together with their application domains; (ii) a quantitative, year-wise distribution to reveal research growth phases; and (iii) a synthesis of comparative insights on strengths/limitations and domain suitability of recent CSO advances (e.g., fuzzy/chaotic enhancements, dual-population/adaptive role updates, CSO–DE/PSO/GA/HHO hybrids). These additions complement and extend prior CSO surveys rather than duplicate them, clarifying where CSO variants are most effective and where open challenges remain.

Literature search and selection strategy

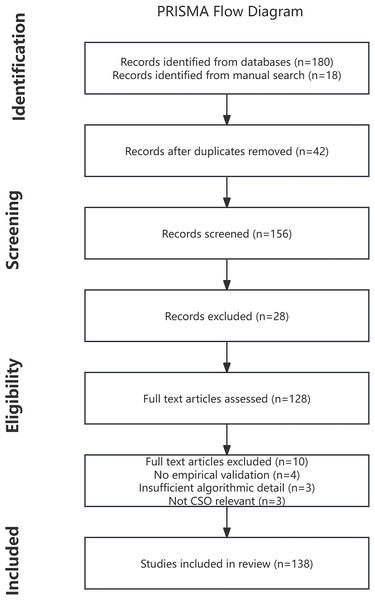

To ensure the reliability and academic quality of the reviewed materials, the majority of the studies analyzed in this article were sourced from peer-reviewed international journals indexed in Web of Science, Scopus, IEEE Xplore, and ScienceDirect. In cases where journal versions were not yet available, a limited number of high-impact conference publications were considered to capture recent advancements. This selection strategy ensures that the review is grounded in rigorously vetted research while preserving coverage of emerging CSO developments. To ensure transparency and reduce selection bias, the literature retrieval and filtering process was conducted in accordance with the PRISMA 2020 guidelines. The procedure consisted of four stages: identification, screening, eligibility assessment, and final inclusion.

A total of 198 studies related to CSO and its variants were initially identified. Among them, 180 records were retrieved from academic databases such as Google Scholar, while 18 additional studies were located through manual searches, including citation tracing and reference cross-checking. After the removal of 42 duplicates, 156 unique records were retained.

The titles and abstracts of the remaining 156 studies were screened to exclude irrelevant or clearly unsuitable publications. During this phase, 28 records were removed based on a lack of topic relevance, incomplete methodological description, or insufficient connection to CSO. A total of 128 articles remained for full-text assessment.

Full-text evaluation was performed on these 128 articles using predefined inclusion and exclusion criteria. During this stage, 10 publications were excluded due to one or more of the following reasons: absence of experimental validation (n = 4), insufficient algorithmic detail (n = 3), and lack of CSO relevance or comparative analysis (n = 3).

Finally, 138 eligible studies were included in the review. These articles form the basis for examining the development, hybridization strategies, performance evaluation, and real-world applications of CSO and its variants. The entire identification and screening process is illustrated in the updated PRISMA 2020 flow diagram (Fig. 2).

Figure 2: PRISMA flow diagram.

Survey methodology

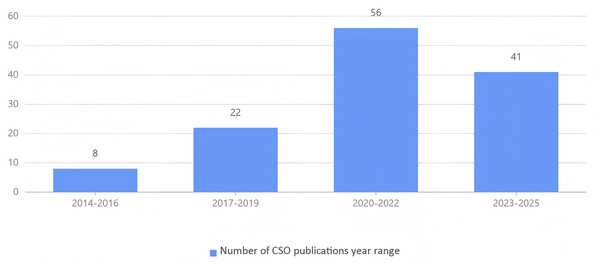

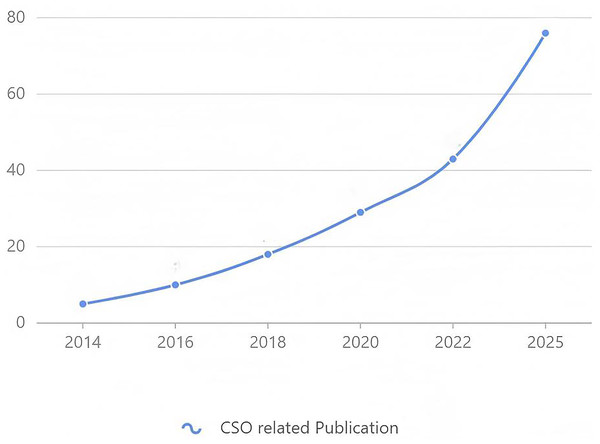

This review follows a structural literature analysis approach, which aims to organize and classify existing research without applying the strict procedural requirements of a systematic review. All relevant articles were retrieved from databases such as Google Scholar, Web of Science, and IEEE Xplore. The search was restricted to the period 2014–2025 and used keywords including “swarm optimization algorithm”, “swarm optimization algorithm variants”, “improved swarm optimization algorithm”, “hybrid swarm optimization algorithm”, “swarm optimization algorithm review”, and “swarm optimization algorithm application”. The main inclusion criterion was that a published journal or conference article proposed or improved a CSO algorithm and applied it to practical problems. After screening, the improvements in the selected studies are mainly concentrated in parameter optimization, hybridization with other algorithms, the introduction of new mechanisms, and adaptive strategies. These improved algorithms have been widely used in many fields, mainly engineering, manufacturing, energy, and medicine. Duplicate literature and research articles unrelated to swarm intelligence optimization were excluded. According to research field and research focus, this article structurally analyzes, classifies, and summarizes the articles, and discusses them in depth based on their practical application fields and theoretical foundations. After excluding irrelevant results, a total of 138 articles were selected for this review. To further illustrate the temporal trend of CSO-related research, Fig. 3 shows that early works appeared during 2014–2016 (eight articles), followed by steady growth in 2017–2019 (22 articles) and a significant rise in 2020–2022 (56 articles). Research activity remains strong in 2023–2025 (41 articles), with broad applications in engineering, energy, wireless sensor networks, and medicine.

Figure 3: Number of CSO-related publications by year range.

Theoretical convergence considerations of hybrid CSO variants

Although metaheuristic algorithms are predominantly heuristic and stochastic in nature, their search behavior can still be theoretically justified under established convergence frameworks. Classical population-based optimizers, including CSO and its hybrid variants, can be interpreted as randomized iterative processes or Markov chains operating over a continuous or discrete search space (Marini & Walczak, 2015). Under the assumption of irreducibility and bounded stochastic perturbation, such processes are capable of converging in probability toward a global attractor set as the number of iterations tends to infinity.

In the context of hybrid CSO models, convergence is strengthened by the integration of mechanisms such as Lévy flight, differential evolution, adaptive parameter control, and elite retention. Lévy-based perturbations enlarge the global search radius and ensure a non-zero probability of escaping local minima (Verma, Sahu & Sahu, 2024), while DE-style mutation and crossover operators improve directional guidance in high-dimensional landscapes. The elite or memory-based retention of best-performing individuals preserves solution quality across generations, satisfying the conditions for asymptotic stability (Deng et al., 2021). Formally, if $x_t$ denotes the position of an individual (or best solution) at iteration $t$, and $x^{*}$ is a global optimum or element of the optimal set, then a common convergence statement in stochastic metaheuristics asserts:

$$\lim_{t \to \infty} P\left(\lVert x_t - x^{*} \rVert < \varepsilon\right) = 1 \quad \text{for every } \varepsilon > 0 \tag{1}$$

This expression does not imply deterministic convergence to a single point, but rather convergence in probability to an $\varepsilon$-neighborhood of the global optimum. The use of randomization, adaptive step sizes, and subgroup role updates in CSO satisfies two essential conditions typically cited in convergence theories: (i) a sufficiently diverse exploration phase with non-zero probability of sampling any region in the search space, and (ii) an exploitation mechanism that asymptotically narrows the search radius (Huang, Qiu & Riedl, 2023).
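
To make the two conditions concrete, the following sketch implements a generic elitist random search, not CSO itself. The function name `elitist_search`, the mixing probability `p_global`, and the 1/sqrt(t) radius schedule are assumptions chosen only for illustration.

```python
import random

def elitist_search(f, lower, upper, iters=2000, p_global=0.1, rng=random):
    """Return the best-so-far objective value recorded after each iteration."""
    dim = len(lower)
    best = [rng.uniform(lower[d], upper[d]) for d in range(dim)]
    fbest = f(best)
    history = []
    for t in range(1, iters + 1):
        if rng.random() < p_global:
            # condition (i): any region of the box can be sampled with non-zero probability
            cand = [rng.uniform(lower[d], upper[d]) for d in range(dim)]
        else:
            # condition (ii): local step whose radius shrinks as t grows
            radius = 1.0 / t ** 0.5
            cand = [min(max(best[d] + rng.gauss(0.0, radius), lower[d]), upper[d])
                    for d in range(dim)]
        fc = f(cand)
        if fc <= fbest:  # elite retention: the best point is never replaced by a worse one
            best, fbest = cand, fc
        history.append(fbest)
    return history
```

The returned history is non-increasing by construction, which is the practical effect of elite retention; the sketch illustrates the conditions and is not a proof of convergence for any specific CSO variant.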

Moreover, hybrid CSO variants introduce multi-stage update rules that preserve ergodicity while reducing variance in the latter stages of search, thereby making the convergence bound tighter compared to the static version. Although a full formal proof for every variant is beyond the scope of this review, the combination of stochastic global reach, adaptive local search pressure, and elite solution retention aligns with the convergence models established for PSO, DE, and ACO-type metaheuristics. Consequently, the improved CSO frameworks surveyed in this article possess a reasonable theoretical foundation under stochastic convergence analysis, supporting their algorithmic stability and long-term search reliability.

Taxonomy of CSO and its variants

Since the standard CSO algorithm was first introduced in 2014, a wide range of improved, hybrid, binary, multi-objective and application-oriented variants have emerged to address its limitations in convergence speed, exploitation capability, parameter dependency, and problem adaptability. To provide a clearer understanding of how these developments differ in design philosophy and application scope, this section presents a systematic taxonomy of CSO-related research. The classification is based on the primary optimization strategy adopted by each study, including performance enhancement through parameter adaptation or chaos mechanisms, hybridization with other metaheuristics, discrete and binary transformations, domain-specific customization, and multi-objective extensions. This taxonomy not only reveals how CSO has evolved over time but also highlights the motivations behind different improvement routes and the contexts in which they are most effective.

Chicken swarm optimization algorithm

The CSO algorithm was first introduced by Meng et al. (2014). Chickens are gregarious, which allows them to coordinate their foraging efforts (Yan et al., 2021). Meng et al. posited that CSO functions as an intelligent optimization method that adjusts parameters by emulating the behavioural patterns observed in chicken flocks. The algorithm models this behaviour by categorizing the parameter variables (candidate solutions) into three distinct groups: roosters, hens, and chicks. It then establishes a parameter adjustment process that mirrors the foraging behaviour exhibited by these flocks (Carvalho et al., 2022). In this framework, the entire flock is partitioned into multiple subgroups, each comprising a single rooster, several hens, and their respective chicks. Within each subgroup, the different categories of individuals follow their own movement rules and interact through learning and competition. The hierarchical structure within the flock is also updated periodically as the generations evolve (Ishikawa et al., 2020).

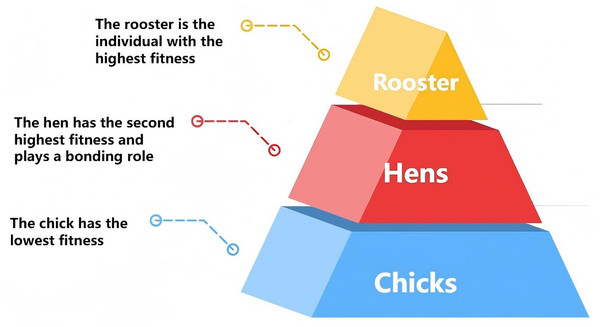

Chicken swarm algorithm characteristics

Chickens are gregarious animals that usually forage in groups. The entire group can be divided into three types of individuals: roosters, hens, and chicks. Due to the difference in foraging ability, a clear hierarchy is formed within the group, as shown in Fig. 4. Hens have weaker foraging ability than roosters, so in the hierarchy, hens usually follow roosters to forage, while chicks with poorer foraging ability are ranked last. In a foraging group, the rooster is in the center of the group, the hens move around the roosters, and the chicks forage around the hens. Because of this, a pattern of mutual learning and competition is naturally formed between different members, and the positions of individuals are constantly updated according to their respective movement rules (Jonsson & Vahlne, 2023).

Figure 4: Flock hierarchy.

Silhouettes and icons adapted.

Within the entire population, the identities, mating relationships, and mother-child connections among individuals remain static over a span of G generations (where G represents the iteration cycle). Following this period, these relationships undergo an update (Singh & Kumar, 2023). Within each smaller subgroup of the population, hens typically follow their respective mates, the roosters, in their quest for sustenance, while concurrently engaging in random competitions for food with other members of the subgroup. Notably, individuals with superior fitness values are more likely to secure food resources (Wang et al., 2023a).

The entire flock can be categorized into three groups, with RN, HN, and CN denoting the respective counts of roosters, hens, and chicks. The RN individuals with the best fitness values are designated as roosters, while the CN individuals with the worst fitness values are classified as chicks. All other individuals are considered hens. Thus, a total of N chickens are engaged in foraging activities within a D-dimensional space. Let $x_{i,j}^{t}$ represent the position of the $i$-th chicken, where $t$ represents the iteration and $j$ represents the dimension of the search space, with $i \in \{1, \dots, N\}$ and $j \in \{1, \dots, D\}$, and the maximum number of iterations is T.

Mathematical modeling

Parameter control within the CSO algorithm holds significant importance, and its key operational components are illustrated in Fig. 3 (Wu et al., 2015).

In the context of the CSO algorithm, the size of the flock is determined through empirical means. When the flock contains too few chickens, the quality of the solution to the optimization problem tends to be suboptimal. Conversely, an excessively large flock size can escalate the computational expense of running the algorithm. Furthermore, the ratio of hens, chicks, and roosters within the flock is crucial and exerts a notable influence on the algorithm’s search efficacy. A recommended ratio for the distribution of chicks, hens, and roosters is 2:6:2.
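The fitness-based role assignment and the 2:6:2 split can be sketched as follows. This is a minimal illustration that assumes a minimization problem (lower fitness is better). The function name `assign_roles`, the rounding rule, and the uniformly random pairing of hens with roosters and of chicks with mother hens are assumptions made for illustration, not details taken from the reviewed papers.

```python
import random


def assign_roles(fitness, ratios=(0.2, 0.6, 0.2), rng=random):
    """Split flock indices into roosters, hens and chicks by fitness.

    fitness: list of objective values, lower is better (assumption).
    ratios:  (rooster, hen, chick) shares; 2:6:2 is the ratio quoted above.
    Requires at least three individuals so that every role is non-empty.
    """
    n = len(fitness)
    order = sorted(range(n), key=lambda i: fitness[i])  # best individual first
    rn = max(1, round(ratios[0] * n))  # number of roosters
    cn = max(1, round(ratios[2] * n))  # number of chicks
    roosters = order[:rn]              # best RN individuals
    chicks = order[n - cn:]            # worst CN individuals
    hens = order[rn:n - cn]            # everyone else
    # Hypothetical pairing rule: each hen joins a random rooster's subgroup,
    # and each chick follows a random mother hen.
    mate = {h: rng.choice(roosters) for h in hens}
    mother = {c: rng.choice(hens) for c in chicks}
    return roosters, hens, chicks, mate, mother
```

In a full run, this function would be called again every G iterations to rebuild the hierarchy from the current fitness values.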

Every G iterations, the chicken hierarchy must be restructured. Consequently, the choice of the update-interval parameter G is of significant importance. If the value of G is set too high, it may hinder the CSO algorithm from effectively identifying the optimal solution to the problem. Conversely, if G is too low, the algorithm becomes susceptible to premature convergence. Therefore, selecting an appropriate value for G not only enhances the algorithm's diversity but also provides opportunities for the algorithm to escape local optima during its execution. When $G \in [2, 20]$, good optimization results can be achieved for most optimization problems.

In the CSO algorithm, the coefficient FL, which represents the learning factor for chicks following hens, plays a crucial role. A smaller FL strengthens the chicks' local search around their current positions, which can help in the early stages of the algorithm, whereas an overly large FL may degrade performance in later iterations. Typically, the FL value is maintained within the range of [0.4, 1] to balance exploration and exploitation effectively. The algorithm terminates and outputs the best value found either when the maximum number of iterations is reached or when the convergence accuracy reaches a specified threshold.

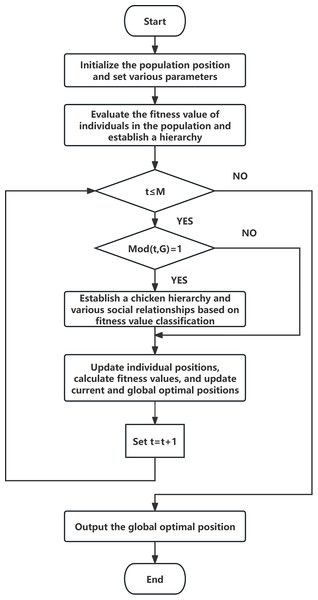

The CSO algorithm begins by initializing the population and subsequently evaluates the fitness value of each individual within the population. Based on these fitness values, the chickens are categorized into roosters, hens, and chicks, establishing a hierarchical structure and a maternal-offspring relationship. Following the update of all individual positions, the current optimal position for each chicken is updated and potentially replaced. The overall best value of the entire chicken group is also updated, and the fitness value of each chicken is recalculated. The algorithm then checks whether the termination condition has been met to determine if the operation should be terminated. If the optimal position has been obtained, the iteration is halted, and the result is output. Otherwise, the algorithm reverts to the previous step, and this loop continues until the stopping criterion is satisfied. The standard CSO algorithm can be divided into three fundamental steps: parameter initialization, generation of new solutions, and updating the flock’s iterative positions. The flowchart for CSO is illustrated in Fig. 5, and the algorithm comprises the following nine steps.

Figure 5: Flowchart of CSO.

(1) Initialize flock location.

In the CSO method, there are three distinct types of chickens: roosters, hens, and chicks. When the CSO algorithm is applied to solve an optimization problem, each chicken within the flock corresponds to a potential solution for that problem, and each type of chicken employs its own strategy in pursuit of the optimal solution. The total number of chickens in the flock is denoted as $N$, the combined count of roosters, hens, and chicks. Specifically, $N = RN + HN + CN$, where $RN$ denotes the number of roosters, $HN$ the number of hens, and $CN$ the number of chicks. $x_{i,j}^{t}$ denotes the value of the $j$-th dimension of individual $i$ at iteration $t$, where $i \in \{1, \dots, N\}$ and $j \in \{1, \dots, D\}$, $D$ represents the spatial dimension of the optimization problem, and $M$ is the maximum number of iterations. The initial positions of the whole flock are randomly generated in the search space according to the equation:

$$x_{i,j} = lb_{j} + Rand \cdot (ub_{j} - lb_{j}) \tag{2}$$

where $ub_{j}$ and $lb_{j}$ denote the upper and lower bounds of every individual in the $j$-th dimension, respectively, and $Rand$ denotes a uniformly distributed random number in [0, 1].
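The random initialization in Eq. (2) can be sketched in a few lines. The function name `init_flock` and the list-of-lists representation of positions are assumptions chosen for illustration.

```python
import random


def init_flock(n, lower, upper, rng=random):
    """Draw n positions uniformly inside the box [lower_j, upper_j] per dimension.

    lower, upper: per-dimension bounds of equal length D.
    Returns a list of n positions, each a list of D floats.
    """
    return [
        [lo + rng.random() * (hi - lo) for lo, hi in zip(lower, upper)]
        for _ in range(n)
    ]
```

For example, `init_flock(20, [-5.0, 0.0], [5.0, 10.0])` would produce 20 two-dimensional candidate solutions within those bounds.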

In the chicken swarm optimization algorithm, the chickens are categorized into roosters, hens, and chicks based on their fitness values. Each category employs a distinct position update formula, which can be expressed as follows:

(2) Rooster location update

Roosters with superior fitness values possess a competitive edge in foraging compared to those with lower fitness, enabling them to explore a broader range of spaces in search of food. The equation governing the position update for roosters is presented as follows:

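$$x_{i,j}^{t+1} = x_{i,j}^{t} \cdot \bigl(1 + Randn(0, \sigma^{2})\bigr) \tag{3}$$

$$\sigma^{2} = \begin{cases} 1, & \text{if } f_{i} \le f_{k} \\ \exp\left(\dfrac{f_{k} - f_{i}}{|f_{i}| + \varepsilon}\right), & \text{otherwise} \end{cases} \qquad k \in [1, N],\ k \ne i \tag{4}$$

where $k$ is the index of a rooster randomly selected from the rooster group, $Randn(0, \sigma^{2})$ represents a normal distribution with mean 0 and standard deviation $\sigma^{2}$, and $\varepsilon$ is a small constant that keeps the denominator from being 0. $f_{i}$ denotes the objective function value of the current rooster, and $f_{k}$ denotes the objective function value of the selected rooster $k$.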
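A minimal sketch of the rooster update follows, assuming the standard CSO form in which a rooster's position is scaled by a Gaussian perturbation whose spread depends on how its fitness compares with that of another randomly chosen rooster. The function names, the value `eps=1e-12`, and the choice to pass the spread parameter directly as the standard deviation (following the wording above) are assumptions for illustration.

```python
import math
import random


def rooster_spread(f_i, f_k, eps=1e-12):
    """Spread parameter: 1 if rooster i is at least as fit as rooster k
    (minimization), otherwise a value in (0, 1) that shrinks as the
    fitness gap grows."""
    if f_i <= f_k:
        return 1.0
    return math.exp((f_k - f_i) / (abs(f_i) + eps))


def update_rooster(x_i, f_i, f_k, rng=random):
    """Scale each coordinate by (1 + Gaussian noise)."""
    spread = rooster_spread(f_i, f_k)
    # Assumption: the spread is used as the standard deviation of the noise.
    return [x * (1.0 + rng.gauss(0.0, spread)) for x in x_i]
```

Because the update is multiplicative, a coordinate that is exactly zero stays at zero, which is one reason some variants add an additive term to the rooster rule.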

(3) Hen location update

Hens not only follow the rooster of their subgroup in search of food but can also randomly steal food that other chickens find. In feeding competitions, hens with higher fitness values have an advantage over hens with lower fitness values. The update formula for hens is as follows:

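$$x_{i,j}^{t+1} = x_{i,j}^{t} + S_{1} \cdot Rand \cdot (x_{r_1,j}^{t} - x_{i,j}^{t}) + S_{2} \cdot Rand \cdot (x_{r_2,j}^{t} - x_{i,j}^{t}) \tag{5}$$

$$S_{1} = \exp\left(\frac{f_{i} - f_{r_1}}{|f_{i}| + \varepsilon}\right) \tag{6}$$

$$S_{2} = \exp\left(f_{r_2} - f_{i}\right) \tag{7}$$

where $Rand$ again denotes a uniformly distributed random number in [0, 1]; $r_1 \in [1, N]$ is the index of the rooster corresponding to the $i$-th hen, $r_2 \in [1, N]$ denotes the index of an individual (rooster or hen) chosen at random from the flock, and $r_1 \ne r_2$.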
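The hen update can be sketched as below, assuming the standard CSO form: the hen moves toward her subgroup's rooster with weight S1 and toward a randomly chosen other chicken with weight S2. The function name, `eps`, and the cap on the S2 exponent (added only to avoid floating-point overflow) are assumptions, not part of the reviewed formulation.

```python
import math
import random


def update_hen(x_i, x_r1, x_r2, f_i, f_r1, f_r2, eps=1e-12, rng=random):
    """Move hen i toward its rooster r1 and toward a random chicken r2.

    x_*: positions as lists of floats; f_*: fitness values (minimization).
    """
    s1 = math.exp((f_i - f_r1) / (abs(f_i) + eps))
    # Guard (assumption): cap the exponent so math.exp cannot overflow.
    s2 = math.exp(min(f_r2 - f_i, 700.0))
    return [
        x + s1 * rng.random() * (a - x) + s2 * rng.random() * (b - x)
        for x, a, b in zip(x_i, x_r1, x_r2)
    ]
```

A fresh random number is drawn for each term and each dimension here; drawing one number per hen instead is an equally plausible reading of the update rule.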

(4) Chick position update

Within each subgroup, chicks forage in the vicinity of the hen and adjust their positions in accordance with Eq. (8).

$$x_{i,j}^{t+1} = x_{i,j}^{t} + FL \cdot (x_{m,j}^{t} - x_{i,j}^{t}) \tag{8}$$

where $x_{m,j}^{t}$ is the position of the chick's mother, with $m \in [1, N]$, and FL is a parameter that signifies the extent to which a chick follows its mother. Taking into account the individual variations among chicks, FL is randomly selected within the range of [0, 2].
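The chick update is a simple step from the chick's position toward its mother's position, scaled by FL. A minimal sketch follows; the function name and the option to pass a fixed `fl` are assumptions for illustration, and the default samples FL uniformly from [0, 2].

```python
import random


def update_chick(x_i, x_m, fl=None, rng=random):
    """Move chick i toward its mother m by a fraction FL of the gap.

    If fl is None, FL is sampled uniformly from [0, 2].
    """
    if fl is None:
        fl = rng.uniform(0.0, 2.0)
    return [x + fl * (m - x) for x, m in zip(x_i, x_m)]
```

With `fl=1.0` the chick lands exactly on its mother's position, values below 1 keep it short of her, and values above 1 overshoot, which is how a wide FL range adds diversity.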

Convergence and theoretical basis analysis

The convergence analysis of the swarm optimization algorithm is usually carried out with the help of Markov chain theory. Wang et al. (2019) first proposed a theoretical framework for using random processes to establish a Markov chain model for CSO convergence analysis. They constructed the following Markov chain model:

(9)

Among them, the state transition probabilities of different roles (rooster, hen, chick) are defined respectively, and the conditions and limitations of CSO global convergence and local convergence are analyzed in detail. This theoretical analysis shows that although CSO has a strong global search capability, it is easy to fall into local optimality or premature convergence when the population size is unreasonable or the parameters are poorly set, which limits its performance in complex problems. To this end, it is necessary to improve its local search capability and parameter sensitivity through adaptive mechanisms or hybrid methods.

Comparative analysis of CSO-based and IEEE TEVC 2024–2025 algorithms

To provide a clearer performance perspective of CSO and its recent enhancements, a comparative analysis was conducted based on the original benchmark settings reported in the respective publications. Rather than forcing all methods into a single test environment, each algorithm is evaluated according to its native experimental protocol. This approach ensures that the reported improvements, dimensions, and baselines remain authentic and methodologically consistent.

First, representative CSO variants from 2017 to 2024 were summarized with respect to their originally adopted benchmark suites, performance highlights, and relative effectiveness. Tables 2 and 3 present the comparative results. To avoid artificial ranking bias, relative performance is expressed using categories such as Top-1, Top-3, and Top-5.

| Algorithm | Year | Benchmark used | Dim | Performance summary | Rank |
|---|---|---|---|---|---|
| ADPCCSO | 2023 | CEC2017/standard sets | 30D/50D | Outperforms multiple CSO variants; strong high-D convergence | Top-1 |
| ICSO-RHC | 2024 | CEC2022/standard functions | 50D | Best on multimodal functions; improved success rate | Top-3 |
| AMLCSO | 2022 | CEC/standard functions | 30D/50D | Faster convergence and higher precision | Top-3 |
| MFCSO | 2022 | CEC2020 | 50D | Better than PSO/HHO/CSO in mean error/stability | Top-5 |
| ICSO | 2017 | CEC2014 | 30D | Improved performance in 25/30 benchmark functions | Top-5 |
| PECSO | 2023 | Engineering/IK + test sets | Various | Robust in engineering tasks and constraints | Top-5 |
| HGBCSO | 2021/22 | Standard benchmarks | 30D/50D | Hybrid strategies enhance global exploration | Top-5 |
| LFCSO | 2023 | Two-stage FS + prediction | n/a | Lévy flight improves global exploration | Top-5 |

| Algorithm | Year | Benchmark used | Dim | Performance summary | Rank |
|---|---|---|---|---|---|
| QMPA (Quantum MPA) | 2025 | CEC2020/CEC2022 | 30D/50D | Top performance vs. MPA/PSO-class baselines | Top-1 |
| RHHO (Reinforced HHO) | 2025 | CEC2017/CEC2020 | 30D/50D | Strong on multimodal/composite functions | Top-3 |
| ADMO (Adaptive DE Mayfly) | 2025 | CEC2017/engineering | 30D/50D | Better mean ranks, reduced variance | Top-5 |
| QODE (Quantum-Orthogonal DE) | 2024 | CEC2017/CEC2020 | 30D/50D | Improves solution quality vs. DE family | Top-5 |
| EFA (Enhanced Firefly Alg.) | 2024 | CEC2017/CEC2020 | 30D/50D | Lower mean errors, stable across diverse functions | Top-5 |
| HHO-DE (Hybrid HHO–DE) | 2025 | CEC2020/engineering | 30D/50D | Boosted exploitation with maintained diversity | Top-5 |
| AO-GA (Arithmetic–GA Hybrid) | 2024 | CEC2017/BBOB | 30D/50D | Balanced global–local search, improved ranks | Top-5 |
| QPSO-AV (Adaptive Var. QPSO) | 2025 | CEC2017/dynamic sets | 30D/50D | Stronger in dynamic settings, competitive static results | Top-5 |

Following the analysis of CSO-based strategies, attention is extended to the latest evolutionary optimization research published in IEEE Transactions on Evolutionary Computation (TEVC) during 2024–2025. These algorithms frequently integrate concepts such as quantum-inspired operators, hybrid search strategies, orthogonal learning, or chaotic dynamics, and they often benchmark on CEC2017, CEC2020, CEC2022, BBOB, or real-world engineering problems. Table 3 provides an overview of these cutting-edge approaches using the same ranking categories to maintain comparability.

Overall, the CSO-derived algorithms demonstrate competitive performance among classical swarm intelligence approaches, particularly in unimodal and multimodal settings. Meanwhile, the emerging TEVC 2024–2025 methods highlight new research directions (e.g., quantum search mechanisms, hybrid exploitation–exploration strategies, and adaptive parameter control). The side-by-side comparison underscores a performance gap that motivates further integration of these advanced strategies into next-generation CSO variants.

Improvement of the swarm optimization algorithm

The CSO algorithm is simple and efficient. It divides the parameters into three different populations whose search and traversal ranges differ (Ding, Zhou & Bi, 2020). However, with the ongoing expansion of application domains and the escalating complexity of problems (Li et al., 2022), the CSO algorithm has progressively revealed certain limitations (Nadikattu, 2021), including sluggish convergence speed, inadequate local search capabilities, and a propensity to become trapped in local optima (Zouache et al., 2019). In the realm of population initialization, researchers have implemented a range of strategies, such as mutation mechanisms, chaos theory, and fuzzy theory, to augment population diversity (Ahmed, Hassanien & Bhattacharyya, 2017), thereby facilitating more efficient discovery of optimal solutions. Nevertheless, the majority of these enhancement methodologies concentrate on optimizing specific update procedures.

The most significant drawback of the CSO algorithm lies in its susceptibility to becoming trapped in local optima, with improper parameter settings serving as the primary cause (Qi et al., 2022). When parameters are poorly configured, the algorithm cannot locate the optimal solution accurately, leading to suboptimal final outcomes. Within the CSO algorithm, hens consistently follow the rooster when updating their positions, while chicks follow the hens, which makes the rooster a pivotal element in the positional updating of the whole subgroup. Should the rooster become stuck in a local optimum, the hens and chicks will likewise become trapped, prompting premature convergence of the algorithm (Ren & Long, 2021). The algorithm then forms a closed loop that hinders further optimization. Compared with traditional swarm intelligence algorithms, CSO shows better efficiency and resilience, mainly because of the overall architecture, stability, and portability of the algorithm (Singh et al., 2023). Key factors such as the number of hens also affect the convergence of the algorithm (Wu, 2021). In view of these limitations, many researchers have improved the CSO algorithm and derived multiple variants (Yang et al., 2023). This section elaborates on these improvement methods.

CSO algorithms classified by improvement principle

The improvement methods of the chicken swarm optimization algorithm can be summarized as follows (Box 1).

| Classification method | Author | Main features | Applicable issues |
|---|---|---|---|
| Parameter optimization | Wu et al. (2015) | Introducing inertia weights and learning factors | General optimization problem |
| Adaptive method | Liang, Wang & Ma (2023) | Dynamically adjust the parameter G and introduce the artificial fish swarm algorithm | High-dimensional optimization problem |
| Hybrid optimization | Kong, Dagefu & Sadler (2020) | Combining bat algorithm and CSO | Complex optimization problems |
| Binary methods | Han & Liu (2017) | Greedy and mutation strategies | Discrete optimization problems |

Parameter optimization strategy

To address the CSO algorithm's susceptibility to premature convergence and to getting trapped in local optima, Wu et al. (2015) introduced an enhanced variant known as the Improved Chicken Swarm Optimization (ICSO) algorithm. This approach enables chicks to learn from roosters during the position update and introduces inertia weights and learning factors. Experimental findings indicate that the algorithm mitigates premature convergence, helping it to escape local optima. The refined formula is presented as follows:

$$x_{i,j}^{t+1} = w \cdot x_{i,j}^{t} + FL \cdot (x_{m,j}^{t} - x_{i,j}^{t}) + C \cdot (x_{r,j}^{t} - x_{i,j}^{t}) \tag{10}$$

In this context, $m$ denotes the index of the hen to which the chick belongs, while $r$ signifies the index of the rooster within the subgroup. The parameter $C$ represents the learning factor, which quantifies the extent to which the chick learns from the rooster. Additionally, $w$ stands for the chick's self-learning coefficient, which functions analogously to the inertia weight in PSO.
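The ICSO chick rule described above can be sketched as a weighted combination of three terms: the chick's own position scaled by a self-learning coefficient, a step toward its mother hen, and a step toward the subgroup's rooster. The function name and argument names below are assumptions for illustration, and no particular parameter values are implied by the source.

```python
def update_chick_icso(x_i, x_m, x_r, w, fl, c):
    """ICSO-style chick update (sketch).

    x_i: chick position, x_m: mother hen position, x_r: rooster position.
    w:   self-learning coefficient (plays the role of an inertia weight).
    fl:  follow factor toward the mother hen.
    c:   learning factor toward the rooster.
    """
    return [
        w * x + fl * (m - x) + c * (r - x)
        for x, m, r in zip(x_i, x_m, x_r)
    ]
```

Setting `w=1.0` and `c=0.0` recovers the standard chick update, which makes the contribution of the extra rooster term easy to isolate in experiments.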

Wu, Xu & Kong (2016) conducted an in-depth study on the convergence of the CSO algorithm. They established a Markov chain model of CSO through a random process. The optimization algorithm successfully solved the problems of global convergence and convergence speed. The algorithm formula is as follows:

(11) where is the fixed value between 0 and 1.

(12) where and are the fixed values between 0 and 1,

(13)

The Markov chain model of chicken swarm optimization is formulated as follows:

(14)

(15)

(16)

Wang et al. (2017) proposed a mutation chicken swarm optimization (MCSO) algorithm based on a nonlinear inertia weight to address two problems in the original CSO algorithm: chicks only follow the mother hen to forage, and the roosters have weak global search ability. The algorithm introduces a mutation factor when updating the positions of chicks and uses a nonlinear decreasing weight based on an upward-opening parabola when updating the positions of roosters. With these improved update formulas for chicks and roosters, MCSO reaches the global optimal solution more readily than the original CSO algorithm.

(17) is a random variable with a Gaussian (0, 1) distribution

(18)

is the nonlinear decreasing weight of the initial upward parabola.

Xue et al. (2018) introduced an enhanced version of the CSO algorithm, referred to as the Improved Chicken Swarm Optimization (ICSO) algorithm, to tackle the issue of the original CSO algorithm’s tendency to get trapped in local optima. This refined algorithm modifies the position update equations for both hens and chicks. Experimental outcomes demonstrate that the improved algorithm exhibits superior global convergence and higher convergence accuracy.

(19)

(20) is the optimal individual.

Wang et al. (2019) developed an enhanced variant of the CSO algorithm, termed Improved Chicken Swarm Optimization with Revised Hierarchical Control (ICSO-RHC), to counteract the challenges of slow convergence and susceptibility to local optima when tackling high-dimensional problems. The algorithm’s enhancements span four key areas: rooster position updating, hen position updating, chick position updating, and population updating, collectively contributing to an optimized overall algorithm performance.

(21) where R indicates that the individual is a rooster, and is a Gaussian distribution with mean −1 and variance .

(22) where represents a uniformly distributed random number within the interval [0, 1]. The variable R signifies that the individual is a rooster, whereas H indicates that the individual is a hen. The term denotes the individual chosen as the elite learning exemplar for hens during the iteration. Furthermore, Hao refers to the count of elite individuals preserved within the population.

(23) where is the index of the chicks mother, i.e., the companion of the chick. R means the individual is a rooster. H means the individual is a hen. C means the individual is a chick, FL and are learning factors.

Liang, Kou & Wen (2020) introduced an improved CSO (ICSO) algorithm to mitigate the issue of getting trapped in local minima during iterative processes. Their improvement strategy incorporates Lévy flight into the position update of hens, thereby increasing perturbation and enhancing population diversity. Additionally, a nonlinear weight reduction strategy is integrated into the position update of chicks to bolster their self-learning capabilities. Comparative experiments with other algorithms show that the improved algorithm exhibits superior convergence accuracy and stability. The refined formulas are as follows:

(24) where is the jump path of a random search with a step size following a distribution, is a scaling parameter in the , and is a vector operator representing a dot product.

(25)

(26) where is the minimum inertia weight , is the maximum inertia weight, = 0.95 and C is the acceleration factor, .
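The source does not state how the Lévy-distributed steps are generated. One widely used approach is Mantegna's algorithm, sketched below as an assumption rather than as the method of Liang, Kou & Wen (2020). The function name `levy_step` and the default stability index `beta=1.5` are illustrative choices.

```python
import math
import random


def levy_step(beta=1.5, rng=random):
    """Draw one heavy-tailed step using Mantegna's algorithm.

    beta: stability index, typically in (1, 2]; smaller values give
    heavier tails and therefore more frequent long jumps.
    """
    sigma_u = (
        math.gamma(1.0 + beta) * math.sin(math.pi * beta / 2.0)
        / (math.gamma((1.0 + beta) / 2.0) * beta * 2.0 ** ((beta - 1.0) / 2.0))
    ) ** (1.0 / beta)
    u = rng.gauss(0.0, sigma_u)
    v = rng.gauss(0.0, 1.0)
    while v == 0.0:  # avoid division by zero in the (very unlikely) exact-zero case
        v = rng.gauss(0.0, 1.0)
    return u / abs(v) ** (1.0 / beta)
```

Most steps drawn this way are small, but occasional large jumps occur, which is the property that Lévy-flight variants rely on to perturb hens away from local optima.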

Wu et al. (2021) proposed an improved chicken swarm optimization algorithm (ICSO) to address the problems existing in the traditional CSO algorithm. They introduced a target optimization strategy in the rooster update process, added a mutation factor in the hen update process, and enhanced the influence of roosters in the chick update process. The efficacy and superiority of the ICSO algorithm were substantiated through testing on benchmark functions.

(27)

(28)

(29)

is a randomly selected rooster, and is a randomly selected hen.

(30)

and are the corresponding hierarchical relationships of roosters and hens respectively. All the improvements to CSO are shown in Table 4.

| Researchers/Ref. | Year | Algorithm | Rooster | Hens | Chicks |
|---|---|---|---|---|---|
| Meng et al. (2014) | 2014 | CSO | ✗ | ✗ | ✗ |
| Wu et al. (2015) | 2015 | ICSO | ✗ | ✗ | ✓ |
| Wu, Xu & Kong (2016) | 2016 | ICSO | ✓ | ✓ | ✓ |
| Wang et al. (2017) | 2017 | MCSO | ✓ | ✗ | ✓ |
| Xue et al. (2018) | 2018 | ICSO | ✗ | ✓ | ✓ |
| Wang et al. (2019) | 2019 | ICSO-RHC | ✓ | ✓ | ✓ |
| Liang, Kou & Wen (2020) | 2020 | ICSO | ✗ | ✓ | ✓ |
| Wu et al. (2021) | 2021 | ICSO | ✓ | ✓ | ✓ |

Adaptive strategy

Wang et al. (2021) identified two primary issues with the CSO algorithm: sluggish convergence speed and challenges in achieving the global optimum. To address these issues, they introduced an Adaptive Fuzzy Chicken Swarm Optimization algorithm (FCSO). This algorithm dynamically adjusts the number of chickens and random factors via a fuzzy system to strike a balance between the algorithm’s exploitation and exploration capabilities. Verified by the black-box optimization benchmark function, the proposed algorithm outperforms other algorithms in terms of convergence speed and optimization accuracy. Liang, Wang & Ma (2023) proposed an adaptive dual-species collaborative chicken swarm optimization algorithm (ADPCCSO) to address the problems of low solution accuracy and slow convergence speed of the CSO algorithm. They used an adaptive dynamic adjustment parameter G to balance the algorithm’s capabilities in breadth search and depth optimization. In addition, the algorithm’s solution accuracy and optimization capabilities were improved by improving the foraging behavior strategy, and the artificial fish swarm algorithm (AFSA) (Zhang et al., 2014) was introduced to construct a dual-species collaborative optimization strategy based on chicken swarms and artificial fish swarms to enhance the algorithm’s ability to escape local optimality. Benchmark test results show that the proposed algorithm outperforms other swarm intelligence algorithms in terms of solution accuracy and convergence.

Xu, Xia & Zhong (2024) developed an Improved Chicken Swarm Optimization algorithm (mCSO) to enhance the recognition accuracy of structural parameters in manipulators. The algorithm addressed the issue of low convergence accuracy by dynamically and adaptively adjusting the moving step size of the chicken. Ultimately, the effectiveness of the optimization algorithm was validated through a single-point cone hole repeatability experiment on front and rear manipulators. Xing et al. (2021) aimed to enhance displacement prediction accuracy in landslide early warning systems, ensuring greater reliability, while resolving the CSO algorithm’s tendency to fall into local optimality and premature convergence. They proposed an Adaptive Chicken Swarm Optimization algorithm (ACSO) that integrates chaos mapping with an adaptive inertia weight strategy to minimize and refine the objective function based on the prediction interval. The algorithm introduces an adaptive mechanism during the hen position update process to increase population diversity, reduce the uncertainty of random initial populations, and enable the algorithm to escape local optimal values. By comparing with other algorithms and validating against benchmark test functions, ACSO demonstrated its effectiveness and overcame local convergence issues. Zhou et al. (2021) presented an Adaptive Dynamic Distributed Chicken Swarm Optimization algorithm (DCSO) to address the shortcomings of the CSO algorithm, including low solution accuracy and susceptibility to local optimal solutions. They employed a dynamic weight strategy to enhance algorithm accuracy and utilized a normal distribution learning factor to mitigate the risk of falling into local optimal solutions. Finally, the algorithm’s performance was evaluated using a benchmark test function, revealing that DCSO exhibits high solution accuracy and effectively escapes local optimal solutions. 
Table 5 summarizes the explanations and performance of all improvements to the adaptive CSO.

| Researchers/Ref. | Year | Adaptive algorithm | Description | Performance |
|---|---|---|---|---|
| Wang et al. (2021) | 2021 | FCSO | Adaptive adjustment of fuzzy system | Improve convergence speed |
| Liang, Wang & Ma (2023) | 2023 | ADPCCSO | Adaptive dynamic parameter G, introduce artificial fish swarm algorithm | Improve convergence and convergence accuracy |
| Xu, Xia & Zhong (2024) | 2024 | mCSO | Adaptive dynamic improvement of chicken step length | Improve solution accuracy |
| Xing et al. (2021) | 2021 | ACSO | Add chaos mapping and adaptive inertia weight at the hen position | Escape from local optimum |
| Zhou et al. (2021) | 2021 | DCSO | Dynamic weight strategy solves the problem of reduced accuracy | Improve solution accuracy and escape from local optimum |

Hybrid optimization strategy

The CSO algorithm demonstrates robust global search capabilities; however, it exhibits certain constraints in local search efficiency. Consequently, integrating CSO with other optimization algorithms is anticipated to yield a more potent search algorithm. Deb et al. (2020b) devised a hybrid multi-objective evolutionary algorithm tailored for optimizing the layout of electric vehicle charging stations. This algorithm amalgamates the Pareto dominant CSO algorithm with a teaching-learning-based optimization (TLBO) (Rao, 2016) approach to derive the Pareto optimal solution. The hybrid algorithm enhances overall search efficacy and effectively circumvents the issue of premature convergence. Additionally, Deb & Gao (2021) proposed a hybrid algorithm (ALOCSO) that combines CSO and ant lion optimization (ALO) for the optimal placement of chargers, a task involving multiple design variables, objective functions, and constraints. This method effectively prevents the algorithm from becoming trapped in local optima and improves convergence.

Kong, Dagefu & Sadler (2020) introduced a hybrid optimization algorithm (CSO-BA) that integrates the bat algorithm (BA) with CSO. The bat algorithm excels in short-range, high-precision searches, while CSO possesses strong global search capabilities. By combining the two, CSO-BA not only enhances search accuracy but also effectively avoids local optimal traps. Gawali & Gawali (2021) developed a hybrid optimization method based on CSO and the deer hunting optimization algorithm (DHOA) (Brammya et al., 2019) to facilitate knowledge and skill transfer in expert reinforcement learning within human-computer interactions. The hybrid algorithm addresses the CSO algorithm's tendency to fall into local optima in high-dimensional optimization problems. In test function validation, this method exhibits higher accuracy and lower error loss than other algorithms when solving high-dimensional engineering problems. Bourki Semlali, Riffi & Chebihi (2018) proposed a hybrid chicken swarm optimization algorithm (HCSO) that incorporates parallel computing technology to tackle the quadratic assignment problem. By integrating the GRASP construction process and employing multi-threading to generate the initial population, the algorithm reduces the likelihood of becoming trapped in local optima. Experimental results indicate that HCSO can attain the global optimal solution more swiftly and reliably. Table 6 summarizes the description and performance of all improvements to the hybridized CSO.

| Researchers/Ref. | Year | Hybrid strategy | Description | Performance |
|---|---|---|---|---|
| Deb et al. (2020b) | 2020 | TLBO | Enhance overall search capability | Avoid premature convergence |
| Deb & Gao (2022) | 2021 | ALO | Improve population utilization and speed up convergence | Improve algorithm convergence |
| Kong, Dagefu & Sadler (2020) | 2020 | BA | Use bat algorithm for short-range high-precision search | Jump out of local optimum |
| Gawali & Gawali (2021) | 2021 | DHOA | DHOA has fast convergence speed and good performance | Quick convergence |
| Bourki Semlali, Riffi & Chebihi (2018) | 2018 | GRASP | Parallel computing technology | Global optimum |

Binary strategy

From the existing research, most CSO-related research focuses on optimization problems in discrete or continuous space, while there are relatively few studies on binary problems. Han & Liu (2017) put forward a binary improved chicken swarm optimization algorithm (BGCSO) tailored for solving the 0–1 knapsack problem. This algorithm incorporates a greedy strategy within the CSO framework to enhance the feasibility of solutions and employs a mutation strategy on individuals with lower fitness levels to boost population diversity. Ultimately, the researchers carried out numerical experiments on 10 instances of the 0–1 knapsack problem. The findings indicated that the algorithm outperformed the wolf pack algorithm and particle swarm algorithm in terms of optimization capability, convergence rate, and stability. Lee & Zhuo (2021) introduced a hybrid genetic binary chicken swarm optimization algorithm (HGBCSO) to construct an efficient fault diagnosis model for induction motor rotors. This methodology effectively reduces the dimensionality of raw data, extracts pivotal features, and mitigates background noise. The algorithm integrates the GA to augment global search capabilities, enhance population diversity, and circumvent the issue of premature convergence. Table 7 summarizes the description and performance of all improvements to the binary CSO.

| Researchers/Ref. | Year | Binary algorithm | Description | Performance |
|---|---|---|---|---|
| Han & Liu (2017) | 2017 | BGCSO | Use greedy strategy and mutation strategy | Improve convergence speed and stability |
| Lee & Zhuo (2021) | 2021 | HGBCSO | Combining BCSO with GA to update position | Improve global exploration and prevent premature convergence |
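The greedy and mutation strategies described for BGCSO can be illustrated with a minimal sketch. It is not the procedure of Han & Liu (2017): the sigmoid transfer function, the value-density ordering, and the function names `binarize`, `greedy_repair`, and `mutate` are all assumptions chosen for illustration, and item weights are assumed to be positive.

```python
import math
import random


def binarize(position, rng):
    """Map a continuous position to bits with a sigmoid transfer function (assumed)."""
    return [1 if rng.random() < 1.0 / (1.0 + math.exp(-x)) else 0 for x in position]


def greedy_repair(bits, weights, values, capacity):
    """Make a 0-1 knapsack solution feasible, then greedily fill spare capacity."""
    bits = list(bits)
    # Item indices sorted by value density (value / weight), best first.
    order = sorted(range(len(bits)), key=lambda i: values[i] / weights[i], reverse=True)
    load = sum(w for w, b in zip(weights, bits) if b)
    # Drop the lowest-density selected items until the load fits.
    for i in reversed(order):
        if load <= capacity:
            break
        if bits[i]:
            bits[i] = 0
            load -= weights[i]
    # Add the highest-density unselected items that still fit.
    for i in order:
        if not bits[i] and load + weights[i] <= capacity:
            bits[i] = 1
            load += weights[i]
    return bits


def mutate(bits, rate, rng):
    """Flip each bit with probability `rate`; meant for low-fitness individuals."""
    return [1 - b if rng.random() < rate else b for b in bits]
```

In a binary CSO loop of this kind, each updated position would be binarized, repaired so that it satisfies the capacity constraint, and, for the worst-ranked individuals, mutated and repaired again before fitness evaluation.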

Application fields and performance analysis of CSO algorithm

The CSO algorithm is widely used in multi-objective optimization, high-dimensional optimization, engineering design, energy management, and medicine. The application characteristics of these fields are analyzed below.

Applications of CSO in real-world domains

With the progressive development of standard CSO and its numerous variants, the algorithm has been increasingly applied to a broad spectrum of real-world optimization problems. The flexibility of CSO in handling continuous, discrete, constrained, and multi-objective tasks has encouraged its use in engineering design, energy management, wireless sensor networks, medical diagnosis, image processing, scheduling, and structural optimization, among other fields. To illustrate the practical value and adaptability of CSO-based methods, this section reviews major application domains and summarizes how different versions of the algorithm have been customized to meet domain-specific requirements. By linking algorithmic improvements to real use cases, the discussion highlights the growing relevance of CSO in complex optimization scenarios that demand robustness, accuracy, and computational efficiency.

Deep learning–based methods and integration trends

In recent years, deep learning (DL) has rapidly emerged as a dominant paradigm across various fields, including computer vision, natural language processing, and increasingly, optimization and control systems. Its capacity for automatic feature extraction and nonlinear function approximation has made it a powerful tool for modeling complex relationships in high-dimensional and dynamic environments.

In the context of industrial control and cyber-physical systems (CPS), DL techniques have been widely adopted for anomaly detection, predictive maintenance, and energy management. A recent review by Aslam, Tufail & Irshad (2025) provides a comprehensive survey of deep learning frameworks tailored for securing industrial CPS, emphasizing their ability to process time-series data and detect real-time threats with minimal feature engineering.

Moreover, the integration of deep learning with swarm-based optimization has received increasing attention. For example, Azevedo, Rocha & Pereira (2024) proposed a novel framework that combines deep convolutional neural networks (CNNs) with swarm intelligence to improve the recognition accuracy of 3D human activity, demonstrating superior performance compared to traditional feature-engineered methods. This hybrid paradigm exemplifies how deep learning can benefit from population-based heuristics, enhancing global search while maintaining high adaptability.

In the energy and automation sectors, Abbassy et al. (2025) reviewed the application of data-driven and deep learning control strategies for CPS energy systems. They highlighted key challenges such as model generalization, real-time responsiveness, and robustness against noise—aspects that swarm intelligence algorithms like CSO can naturally complement through their exploration–exploitation balance and population diversity maintenance.

While these studies underline the strengths of DL in complex modeling, they often focus on purely data-driven learning pipelines and lack interpretability, controllability, or deployment readiness in real-time environments. Furthermore, few works explore the combination of deep learning with evolutionary algorithms under embedded platforms such as MATLAB/Simulink or Hardware-in-the-Loop (HIL) simulation.

Therefore, this review complements prior efforts by focusing on CSO and its variants, which retain the optimization interpretability of swarm-based models while offering a potential bridge for future integration with DL frameworks. This also opens the door to adaptive hybrid approaches that leverage DL for feature representation and CSO variants for robust search and parameter adaptation.

Emerging integration with quantum-inspired and quantum-assisted optimization

Although CSO and its variants have demonstrated strong performance across engineering design, energy management, scheduling, healthcare analytics, and other real-world domains, recent research trends show a clear shift toward fusing swarm-based metaheuristics with quantum-inspired or quantum-assisted mechanisms. Foundational work on quantum-inspired evolutionary algorithms (QEA) (Han & Kim, 2002) and quantum-behaved particle swarm optimization (QPSO) (Sun, Feng & Xu, 2004) formalized q-bit representations, superposition-based search, and probabilistic state transitions that can strengthen exploration while controlling parameterization. Subsequent analyses and variants of QPSO (e.g., Gaussian-attractor QPSO) rigorously studied convergence behavior and reported performance gains on standard test suites (Sun et al., 2011, 2012).

Recent applied studies further validate quantum-inspired swarm ideas in practical settings. For instance, quantum-inspired PSO has been adopted for service placement and resource allocation under edge/cloud scenarios with competitive results on real workloads (Bey et al., 2024). Likewise, quantum-corrected Harris Hawks Optimization (QC-HHO) integrates quantum perturbation and chaos to improve robustness on CEC2014 and engineering problems (Zhu et al., 2022). At the landscape level, a 2024 scientometric review maps the growth and hotspots of quantum-inspired metaheuristics—including QEA/QPSO families—highlighting their acceleration in optimization and Industry 4.0 contexts (Pooja & Sood, 2024).

These advances indicate that similar hybridization strategies can be transferred to CSO-based frameworks, especially for high-dimensional, multimodal, or dynamic tasks. From a hardware perspective, demonstrated NISQ-era capabilities (Arute et al., 2019) suggest that variational hybrids (e.g., QAOA/VQE-style components) may become feasible partners for swarm heuristics in the near term. Consequently, evolving CSO toward quantum-inspired or quantum-assisted architectures is both credible and timely, aligning with current trends in high-impact venues and offering a principled path to scalability and efficiency.
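To make the quantum-behaved update mentioned above concrete, the following is a minimal sketch of one QPSO position update in its commonly cited form: each particle is resampled around a local attractor, with a step scaled by its distance to the mean of the personal bests. The function name `qpso_step` and the contraction-expansion coefficient `beta` as a plain argument are illustrative assumptions. The sketch shows QPSO only; how such a step would be embedded into the rooster, hen, and chick updates of CSO is left open.

```python
import math
import random


def qpso_step(positions, pbests, gbest, beta, rng):
    """One illustrative QPSO update for a swarm stored as lists of floats."""
    n, dim = len(positions), len(gbest)
    # Mean of all personal best positions ("mbest"), per dimension.
    mbest = [sum(p[d] for p in pbests) / n for d in range(dim)]
    new_positions = []
    for x, p in zip(positions, pbests):
        new_x = []
        for d in range(dim):
            phi = rng.random()
            # Local attractor between the personal best and the global best.
            attractor = phi * p[d] + (1.0 - phi) * gbest[d]
            u = 1.0 - rng.random()  # lies in (0, 1], so log(1 / u) is finite
            step = beta * abs(mbest[d] - x[d]) * math.log(1.0 / u)
            # Move to either side of the attractor with equal probability.
            new_x.append(attractor + step if rng.random() < 0.5 else attractor - step)
        new_positions.append(new_x)
    return new_positions
```

The only tunable quantity in this step is `beta`, which is often decreased over the run; this small parameter count is one reason quantum-behaved updates are attractive candidates for hybridization.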

Representation and analysis in multi-objective optimization problems

Liang, Wang & Ma (2022) introduced an enhanced CSO algorithm, referred to as RECSO, which incorporates replication and elimination-diffusion operations inspired by the bacterial foraging algorithm (BFA) (Passino, 2002) and the particle swarm optimization algorithm (PSO) (Liu & Nishi, 2022). This enhancement was prompted by the tendency of the CSO algorithm to converge prematurely when tackling multi-peak optimization problems. They identified chicks as the most vulnerable individuals within the CSO framework and, to bolster the algorithm’s search capabilities, integrated the replication operation and elimination mechanism from the BFA into the position updating process for chick foraging, thereby preventing chickens from settling into local optima. To solve multi-objective optimization problems (MOPs) efficiently, Huang et al. (2024) proposed a non-dominated sorting chicken swarm optimization algorithm (NSCSO). This method uses fast non-dominated sorting to assign ranks to individuals in the population and uses the crowding distance strategy to order individuals within the same rank. In addition, the algorithm incorporates an elite adversarial learning strategy to improve its multi-objective optimization capability. Benchmark function evaluations and comparative analyses across various engineering design problems demonstrate that NSCSO exhibits superior performance. High-dimensional data, such as stock market data, can reduce prediction accuracy. Kiruthiga & Vennila (2020) proposed an innovative multi-objective chaotic Darwinian chicken swarm optimization (CDCSO) system for energy-efficient, QoS-aware, and load-balance-aware task scheduling. The multi-objective CDCSO algorithm combines chaos theory and Darwinian theory with standard chicken swarm optimization, thereby increasing the global search capability and accelerating convergence. Experimental results show that CDCSO assigns tasks to virtual machines (VMs) in a way that reduces energy consumption, cost, and time while maintaining load balance. Tumor region classification in MRI brain imaging is a crucial task. Because cancers are diverse and complex, tumor classification and segmentation in MRI brain imaging are highly demanding. Table 8 summarizes the optimization type, methods and characteristics, and applications of the multi-objective CSO variants.

| Researchers | Year | Algorithm | Optimization type | Methods and characteristics | Application |
|---|---|---|---|---|---|
| Liang, Wang & Ma (2022) | 2022 | RECSO | Multimodal optimization | Replication and elimination-diffusion (BFA), particle swarm optimization (PSO) | General multimodal optimization problems |
| Huang et al. (2024) | 2024 | NSCSO | Multi-objective optimization | Non-dominated sorting, crowding distance, elite adversarial learning | General multi-objective optimization, engineering design |
| Kiruthiga & Vennila (2020) | 2020 | CDCSO | Multi-objective optimization | Chaos theory, Darwinian theory, energy-efficient QoS, load balancing | Cloud computing task scheduling (high-dimensional data tasks) |
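The two ranking steps described for NSCSO, fast non-dominated sorting followed by crowding distance within each rank, can be sketched generically as follows. This is the standard NSGA-II-style formulation for minimization, written here for illustration; it is not taken from Huang et al. (2024), and the function names are assumptions.

```python
def dominates(a, b):
    """True if objective vector a Pareto-dominates b (all objectives minimized)."""
    return all(x <= y for x, y in zip(a, b)) and any(x < y for x, y in zip(a, b))


def non_dominated_sort(objs):
    """Return a list of fronts; each front is a list of indices into objs."""
    n = len(objs)
    dominated = [[] for _ in range(n)]  # dominated[i]: indices that i dominates
    counts = [0] * n                    # counts[i]: how many solutions dominate i
    for i in range(n):
        for j in range(n):
            if i == j:
                continue
            if dominates(objs[i], objs[j]):
                dominated[i].append(j)
            elif dominates(objs[j], objs[i]):
                counts[i] += 1
    fronts = [[i for i in range(n) if counts[i] == 0]]
    while fronts[-1]:
        nxt = []
        for i in fronts[-1]:
            for j in dominated[i]:
                counts[j] -= 1
                if counts[j] == 0:
                    nxt.append(j)
        fronts.append(nxt)
    return fronts[:-1]  # drop the trailing empty front


def crowding_distance(objs, front):
    """Crowding distance of each index in one front; boundary points get infinity."""
    dist = {i: 0.0 for i in front}
    if not front:
        return dist
    for m in range(len(objs[front[0]])):
        ordered = sorted(front, key=lambda i: objs[i][m])
        lo, hi = objs[ordered[0]][m], objs[ordered[-1]][m]
        dist[ordered[0]] = dist[ordered[-1]] = float("inf")
        if hi == lo:
            continue
        for k in range(1, len(ordered) - 1):
            gap = objs[ordered[k + 1]][m] - objs[ordered[k - 1]][m]
            dist[ordered[k]] += gap / (hi - lo)
    return dist
```

In a multi-objective CSO, the front index could serve as the primary fitness when assigning rooster, hen, and chick roles, with larger crowding distance breaking ties in favor of less crowded solutions.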

Representation and analysis in high-dimensional optimization problems

Zhou, Gao & Yi (2022) highlighted that, in the standard CSO algorithm, the position update formulas across different levels are interdependent, potentially leading to diminished algorithm convergence. Furthermore, when the highest level becomes trapped in a local optimum during the later stages, it can adversely affect the overall algorithm’s search capabilities, consequently reducing solution accuracy. To address this, they proposed an adaptive mutation learning chicken swarm optimization algorithm (AMLCSO). By introducing adaptive weighting strategies (Sun, Dai & Cheng, 2023), Gaussian mutation, and normal distribution learning strategies, they optimized the position update methods at each level, thereby mitigating the issue of the algorithm falling into local optima and enhancing solution accuracy and convergence performance. Gu et al. (2022) presented an adaptive simplified chicken swarm optimization algorithm based on inverted S inertia weight (ASCSO-S) to tackle the problems of premature convergence and insufficient solution accuracy associated with the standard CSO algorithm in high-dimensional optimization scenarios. They eliminated chicks from the chicken swarm and introduced inverted S inertia weights into the position update process for roosters and hens. By dynamically adjusting the step size of movement, they improved the algorithm’s convergence speed and solution accuracy.
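As an illustration of the inverted S inertia weight idea behind ASCSO-S, the sketch below uses a logistic curve that stays near a maximum weight early in the run, drops quickly around the midpoint, and levels off near a minimum weight at the end. The exact formula of Gu et al. (2022) is not reproduced in this review, so the functional form, the bounds `w_min` and `w_max`, and the steepness `k` are all assumptions.

```python
import math


def inverted_s_weight(t, t_max, w_min=0.4, w_max=0.9, k=10.0):
    """Hypothetical inverted S-shaped inertia weight for iteration t of t_max."""
    progress = t / t_max  # 0.0 at the start of the run, 1.0 at the end
    # The logistic term falls from about 1 to about 0 as progress goes from 0 to 1.
    return w_min + (w_max - w_min) / (1.0 + math.exp(k * (2.0 * progress - 1.0)))


if __name__ == "__main__":
    for t in (0, 25, 50, 75, 100):
        print(t, round(inverted_s_weight(t, 100), 4))
```

A weight of this shape favors large exploratory steps for roosters and hens in early iterations and small refining steps later, which is the step-size behavior the paragraph above attributes to ASCSO-S.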