Better data for better predictions: data curation improves deep learning for sgRNA/Cas9 prediction

- Published

- Accepted

- Received

- Academic Editor

- Nicole Nogoy

- Subject Areas

- Bioinformatics, Computational Biology, Microbiology, Molecular Biology, Data Mining and Machine Learning

- Keywords

- Machine learning, High throughput sequencing, CRISPR prediction, Data cleaning, Model building, CRISPR model, SgRNA activity prediction, Bacterial CRISPR prediction

- Copyright

- © 2026 Browne et al.

- Licence

- This is an open access article distributed under the terms of the Creative Commons Attribution License, which permits unrestricted use, distribution, reproduction and adaptation in any medium and for any purpose provided that it is properly attributed. For attribution, the original author(s), title, publication source (PeerJ) and either DOI or URL of the article must be cited.

- Cite this article

- 2026. Better data for better predictions: data curation improves deep learning for sgRNA/Cas9 prediction. PeerJ 14:e20706 https://doi.org/10.7717/peerj.20706

Abstract

The Cas9 enzyme along with a single guide RNA molecule is a modular tool for genetic engineering and has shown effectiveness as a species-specific antimicrobial. The ability to accurately predict on-target cleavage is critical as activity varies by target. Using the sgRNA nucleotide sequence and an activity score, predictive models have been developed with the best performance resulting from deep learning architectures. Prior work has emphasized robust and novel architectures to improve predictive performance. Here, we explore the impact of a data-centric approach through optimization of the input target site adjacent nucleotide sequence length and the use of data filtering for read counts in the control conditions to improve input data utility. Using the existing crisprHAL architecture, we develop crisprHAL Tev, a bacterial SpCas9 prediction model with performance that generalizes across related species and across data types. During this process, we also rebuilt two prior Escherichia coli Cas9 datasets, demonstrating the importance of data quality, and resulting in the production of an improved bacterial eSpCas9 prediction model. The crisprHAL models are available through GitHub: https://github.com/tbrowne5/crisprHAL.

Introduction

The CRISPR-Cas9 system is capable of a wide range of genetic engineering tasks through the production of DNA double-strand breaks. In this system, the Cas9 nuclease and a single guide RNA (sgRNA) bind a DNA target containing a 20-nucleotide sequence complementary to the target site. The activated nuclease then induces a double-strand DNA break (Deltcheva et al., 2011; Jinek et al., 2012). Binding and cleavage activity by Cas9 requires a protospacer adjacent motif (PAM) immediately downstream of the target DNA. For the commonly used Streptococcus pyogenes Cas9 nuclease (SpCas9), this PAM sequence is NGG (Jinek et al., 2012). Along a sequence of DNA this PAM site will occur once every 16 nucleotides on each strand, assuming a random and equal nucleotide (nt) distribution, highlighting the numerous potential targets that exist. Given the ease of designing the sgRNA sequence there is broad potential for the system as a reprogrammable site-specific nuclease. Despite the large number of sites, prior work has shown wide variations in so-called “on-target” activity, defined as cleavage of the chosen target site (Doench et al., 2014; Wang et al., 2014; Doench et al., 2016; Guo et al., 2018; Hamilton et al., 2019; Ham et al., 2023).

Several machine-learning models have been constructed that use the nucleotide sequence of the target site, and often other biological parameters, to predict on-target activity (Guo et al., 2018; Chuai et al., 2018; Wang & Zhang, 2019; Dimauro et al., 2019; Wang et al., 2019; Zhang, Dai & Dai, 2020; Kim et al., 2019; Lin et al., 2020; Wan & Jiang, 2023; Ham et al., 2023). The best performing of these models typically rely upon deep learning architectures to make their predictions. Most existing machine-learning models have been constructed for mammalian applications; only three bacteria-specific Cas9 prediction models exist (Guo et al., 2018; Wang & Zhang, 2019; Ham et al., 2023). Regardless of their application, recent work has generally focused on improving on-target activity predictions through two methods: first by updating the model architecture and second by using datasets with greater numbers of unique sgRNA sequences. While both factors are clearly important, examining and improving the quality of data has been neglected, and this is the aim of this work. We focus on two key aspects to improve predictive performance: improving the accuracy of the on-target activity score, and the inclusion of relevant sequences adjacent to the chosen target site.

On-target sgRNA/Cas9 activity datasets suitable for machine-learning training have been generated using a variety of methods which contrast control and experimental conditions and use DNA sequencing read counts as a proxy for on-target sgRNA/Cas9 activity (Guo et al., 2018; Ham et al., 2023). Due to the compositional nature of these datasets—a single read count is an arbitrary metric dependent upon sequence depth and requires a transformation for interpretability—the read counts are normalized in some fashion before activity scores are calculated (Quinn et al., 2019). The “depletion” datasets that measured killing efficiency of a wild-type and enhanced specificity SpCas9 in Escherichia coli, generated by Guo et al. (2018), used a mean normalization followed by a non-targeting control adjustment and then a data standardization using a Z-score statistic. In contrast, the datasets that measured TevSpCas9 and SpCas9 activity in E. coli and Citrobacter rodentium, generated by Ham et al. (2023), used ALDEx2 (Fernandes et al., 2013) to generate a difference-between metric derived from a centre log ratio-based approach (Fernandes et al., 2014). All datasets measured killing efficiency through genomic cleavage (sgRNA depletion) or elimination of a plasmid encoding a toxin gene resulting in growth (sgRNA enrichment).

Regardless of the method used, low control-condition read counts cause two issues. First, low counts reduce the precision of measurement, and second, the absolute range of observable values is smaller (Fernandes et al., 2013; Love, Huber & Anders, 2014; Gloor et al., 2016). In depletion experiments low read counts in the control condition constrain the precision by which we can measure activity, and in enrichment, inactivity. In either case, this imprecision results in inaccurate activity scores if appropriate filtering for a minimum control-condition read count is not performed. Prior to this point, no group has investigated the role that optimizing for the minimal read count in the training set has on model performance. This is not to suggest that dynamic range concerns have been ignored, read count cutoffs have been used previously, but these have not been investigated systematically. We suggest that each dataset may have a minimum read cutoff which balances the reduction in data with the improvement in scoring accuracy.

A second important component to the on-target activity scores is the target site sequence supplied to a model as the input feature. In many existing models, this sequence is simply the 20 nucleotide sgRNA target site, often with the addition of its respective PAM sequence—NGG when using an SpCas9 dataset (Zhang, Dai & Dai, 2020; Chuai et al., 2018; Wang et al., 2019; Dimauro et al., 2019; Wan & Jiang, 2023). However, the nucleotides flanking the target site do contribute to nuclease activity (Wang & Zhang, 2019; Ham et al., 2023). Prior work found Cas9-DNA interactions eight nucleotides downstream of the sgRNA target site which appear to mediate target search (Yang et al., 2021). More distant interactions were also found 14 nucleotides downstream of the PAM, which appears to regulate nuclease binding and dissociation (Zhang et al., 2019). Work by Ham et al. (2023) examined and optimized model performance through the incremental inclusion of target site adjacent nucleotides to their model. This method found optimal performance using an input sequence comprising the sgRNA, NGG PAM, and five nucleotides downstream, noting that the inclusion of upstream nucleotides only decreased performance. Though this provides insight into adjacent nucleotide impacts on activity, the small dataset sizes used potentially constrain the parameter increase required by longer input sequences. The potential for large datasets to compensate for this parameter increase is promising for the capture of more distant target site adjacent nucleotide impacts on activity, provided the data is sufficiently accurate. It should also be noted that different Cas enzymes are expected to have differing nucleotide sequence preferences, especially nucleases with changes in their binding characteristics that increase specificity such as eSpCas9 (Slaymaker et al., 2016). Thus, the input sequence length that is optimal for SpCas9 model performance may be sub-optimal when applied to training an eSpCas9 model, or to an enzyme derived from a different species.

Here we test bacterial Cas9 prediction models constructed on the existing crisprHAL architecture from Ham et al. (2023) to demonstrate the importance of data quality for performance optimization. We present an accurate SpCas9 activity prediction model, trained on a C. rodentium TevSpCas9 dataset, with performance that generalizes across related species. We refer to this new model as crisprHALTev. We also recreate two prior datasets from Guo et al. (2018) for SpCas9 and eSpCas9 in E. coli which demonstrate the general benefit of data optimization on model performance, resulting in the production of an improved bacterial eSpCas9 activity prediction model.

Methods

Datasets

Portions of this text were previously published as part of a preprint (Browne, Edgell & Gloor, 2025). For model development, training, and testing we used three large and three small datasets that measured bacterial Cas9 activity by multiple different assays. Table 1 provides information on the experimental design used to generate these datasets and Table 2 provides information on sequencing depth and the number of available single guide RNAs (sgRNA) for training and validation. The three large datasets measure killing efficiency (depletion) targeting sgRNAs to the host genome which activates cleavage when combined with Cas9 expression; in these assays active sgRNAs are depleted from the library. In the small datasets, activity is measured through cellular growth (enrichment) when the sgRNA/Cas9 complex cleaves a plasmid harbouring the CcdB toxin. Our primary SpCas9 prediction model is constructed from a set of 30,138 sgRNAs and the read counts from nine experimental and one E. coli Epi300 control replicate generated by Ham et al. (2023). This depletion assay measured the activity of sgRNAs in the presence of the dual nuclease TevSpCas9 to cleave the genome of C. rodentium and concomitant loss of the active sgRNA from the pool. The three independent testing sets, also created by Ham et al. (2023), were developed in E. coli K-12, each with 10 control and 10 experimental replicates. Two of these datasets measured cleavage of a CcdB toxin-harbouring plasmid with the TevSpCas9 and SpCas9 nucleases, referred to as the pTox TevSpCas9 and pTox SpCas9 datasets. The two pTox datasets have overlapping sgRNA target sites, providing the opportunity to compare cleavage by the TevSpCas9 and SpCas9 enzymes directly. An additional testing dataset measured TevSpCas9 cleavage of the KatG gene cloned from Salmonella enterica inserted on a plasmid in E. coli, and is referred to as the KatG TevSpCas9 dataset.

| Dataset name | Nuclease | Target | Host | Type | Control condition | Control replicates | Experimental replicates | Source |

|---|---|---|---|---|---|---|---|---|

| C. rodentium TevSpCas9 | TevSpCas9 | Host genome contig assembly | C. rodentium DBS100 | Depletion | E. coli Epi300 pre-transformation | 1 | 9 | Ham et al. (2023) |

| E. coli eSpCas9 | eSpCas9 | Host genome coding regions | E. coli K-12 | Depletion | Catalytically dead eSpCas9 | 2 | 2 | Guo et al. (2018) |

| E. coli SpCas9 | Wild-type SpCas9 | Host genome coding regions | E. coli K-12 | Depletion | Catalytically dead SpCas9 | 2 | 2 | Guo et al. (2018) |

| pTox TevSpCas9 | TevSpCas9 | Plasmid target (pTox) | E. coli K-12 | Enrichment | Repressed expression | 10 | 10 | Ham et al. (2023) |

| pTox SpCas9 | Wild-type SpCas9 | Plasmid target (pTox) | E. coli K-12 | Enrichment | Repressed expression | 10 | 10 | Ham et al. (2023) |

| KatG TevSpCas9 | TevSpCas9 | Plasmid host S. enterica KatG gene | E. coli K-12 | Enrichment | Repressed expression | 10 | 10 | Ham et al. (2023) |

| Dataset name | Initial pool | Mean control read coverage | Mean selective read coverage | Unfiltered sgRNAs | Filtering cutoff | Filtered sgRNAs | Training set size | Testing set size |

|---|---|---|---|---|---|---|---|---|

| C. rodentium TevSpCas9 | 31,596 | 228.6 | 73.2 | 30,138 | 56 | 25,244 | 20,195 | 5,049 |

| E. coli eSpCas9 | 65,928 | 178.5 | 98.7 | 64,021 | 31 | 59,489 | 47,591 | 11,898 |

| E. coli SpCas9 | 65,928 | 106.7 | 49.7 | 61,002 | 73 | 33,495 | 26,795 | 6,700 |

| pTox TevSpCas9 | 304 | 1,903.9 | 1,968.0 | 279 | 20* | 260 | – | 260 |

| pTox SpCas9 | 304 | 261.8 | 321.3 | 303 | 20* | 255 | – | 255 |

| KatG TevSpCas9 | 317 | 1,635.8 | 1,659.0 | 268 | 20* | 268 | – | 268 |

Notes:

We also obtained two additional depletion datasets from Guo et al. (2018) that tested a pool of 65,928 sgRNAs in E. coli. We reconstructed both datasets from publicly available sequencing data and used the original datasets for comparison. For these datasets, E. coli eSpCas9 (n = 45,071) and E. coli SpCas9 (n = 44,163), we use the scores as calculated by Guo et al. (2018) with the 43nt model input sequences from Wang & Zhang (2019), and an improved scoring scheme outlined below. The original datasets and scoring schemes are referred to as the original E. coli eSpCas9 dataset and the original E. coli SpCas9 dataset.

Expanded eSpCas9 and SpCas9 datasets

For the generation of a new E. coli eSpCas9 and a new E. coli SpCas9 model, we reconstruct the two datasets from Guo et al. (2018) using sequencing data generated in E. coli K-12 substr. MG1655. This reconstruction was performed in order to make use of all available data for model training. The original data was curated based upon a minimum of five sgRNAs mapping to an E. coli gene for inclusion—valid for their use case, but unnecessary for model training. The initial sgRNA pool comprised 65,928 sgRNAs targeting the eSpCas9 or SpCas9 nuclease to the E. coli genome. Each dataset consisted of two control and two experimental condition samples, with catalytically dead eSpCas9 or SpCas9 being used for the control condition. The paired-end reads were merged with usearch (Edgar, 2010) and we obtained read counts for sgRNAs from the sequencing output using an in-house script, with sequence reads being counted for both replicates in the control and experimental conditions.

Data processing

The raw read counts for each sgRNA target in control and experimental conditions were used as the input to ALDEx2, with the ALDEx2 diff.btw, a non-parametric log2 fold-change metric, used as the activity score; hereafter referred to as log2FC (Fernandes et al., 2014). This metric differs from the Z-score activity metric used by Guo et al. (2018) for the original E. coli eSpCas9 and SpCas9 datasets used for comparison testing in that it is the expected value of a Bayesian posterior derived from the data and ensures that all values are linearly related (Aitchison, 1986; Fernandes et al., 2013). Additionally, given the common use of a log2FC metric in differential expression analysis, using such values for training may improve the interpretability of resulting predictions. The enrichment pTox TevSpCas9, pTox SpCas9, and KatG TevSpCas9 datasets used a control-condition read cutoff of 20. To test control-condition read count minimum cutoff impacts on activity, we constructed the C. rodentium TevSpCas9, E. coli eSpCas9, and E. coli SpCas9 datasets with incrementally increasing minimum average control-condition read count cutoffs—100 variants of each dataset ranging from a cutoff of 1 to a cutoff of 100 (Fig. 1A).

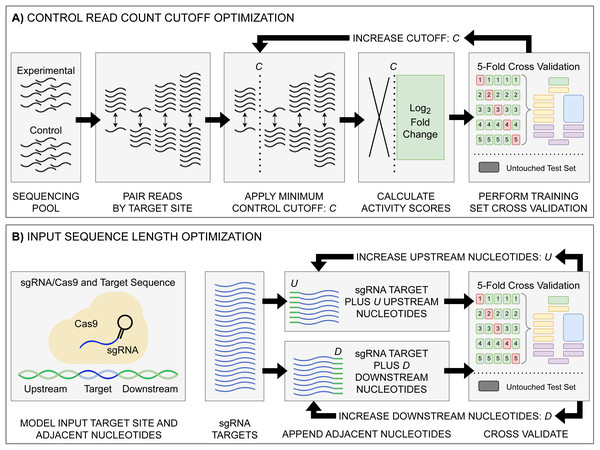

Figure 1: Model input and score optimization workflow.

(A) Identification of an optimal control read count minimum cutoff value C. Read counts for each feature in control and experimental replicates were paired by their respective sgRNA target site. Beginning with C = 1, all sgRNAs with a control read count below the cutoff C were removed, with subsequent log2FC scores then calculated. The output sgRNAs and corresponding scores were split into training and held-out testing sets. Model performance metrics for the cutoff value were calculated using five-fold cross validation on the training set. The cutoff value C was then increased, with the cutoff curation, score calculation, and 5-fold cross validation repeated. (B) Identification of optimal upstream and downstream target site adjacent nucleotide inclusion to the model input sequence. Two tests were performed: one upstream and one downstream, starting with values of U = 0 and D = 0. Beginning with the 20 nucleotide sgRNA target site, U upstream nucleotides were appended, and the data was split into training and testing sets, with subsequent performance metrics obtained via 5-fold cross validation. The same test was performed with D downstream nucleotides appended in place of the upstream nucleotides. The process was then repeated, incrementing the number of appended upstream U and downstream D nucleotides. The optimal input sequence length was then obtained using the optimal U and D upstream and downstream nucleotides, with a final input length of U+20+D. Across all optimization tests, like standard model hyper-parameter tuning, the hold-out test set was untouched, being used only to test the final model.To determine the effect of adjacent nucleotides on prediction accuracy, we extracted the target site sequences from each datasets associated target DNA sequence—C. rodentium DBS100, E. coli K-12, and the respective plasmid sequences, examining and the impacts to model performance when included alongside the sgRNA and PAM (Fig. 1B). For each set of target sites, we extended the sequence by 500 nucleotides upstream and downstream of the sgRNA plus the NGG PAM. Prior to model input, these sequences are transformed into a model-friendly one-hot encoding format of 4×N shape: four nucleotide options by an N-length sequence input.

During the process of dataset generation, a fixed seed value was used. For the primary datasets used in model development, 20% of the data was separated and remained untouched until final model testing (Table 2). For replication, data generation scripts are made available at the associated repository. During dataset generation, and additionally during model testing, checks were performed to ensure identical input sequences were not present across training and testing sets. Target site sgRNA sequence similarity between each training and held-out test set was checked via hamming distance (Fig. S1).

Model architecture

Throughout we use the term “architecture” when referring to the programming setup, and “model” when referring to the output of the architecture after training. Given the focus of this work on improving predictive performance through a data-centric approach, we train all models on the existing crisprHAL model architecture from Ham et al. (2023) (Fig. 2). This architecture uses a deep learning approach with a dual branching structure comprising convolutional and recurrent neural network layers. The convolutional neural network (CNN) layers aim to learn local features of the target site which impact on-target activity through optimizing the weights of convolutional filters, whereas the recurrent neural network (RNN) aims to learn sequential features (Rumelhart, Hinton & Williams, 1985; LeCun, Bengio & Hinton, 2015). The dual branching structure passes an encoded sgRNA input through an initial convolutional block to either several successive convolutional blocks or a bidirectional gated recurrent unit (BGRU) RNN (Cho et al., 2014). Each convolutional block consists of a one-dimensional CNN followed by a LeakyReLU activation function and one-dimensional max pooling. Each branch then sends their output through two dense layers, each followed by a dropout layer, finally being concatenated into a single output node. This structure is unchanged from the original crisprHAL architecture, save for a small alteration of the BGRU to allow for better GPU usage. A major focus of the original architecture was transfer learning with all parameters frozen in the CNN-branch. Here we do not make use of transfer learning, instead focusing on performance improvement through optimal data curation.

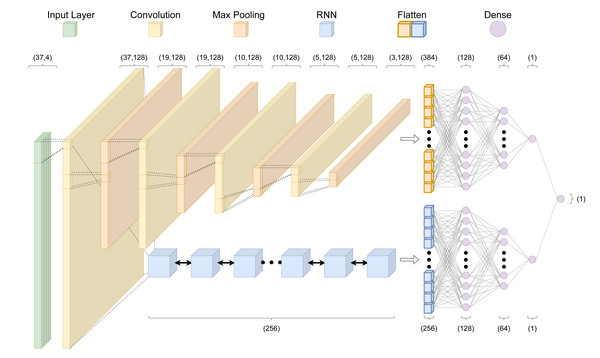

Figure 2: The crisprHAL model architecture.

The forward propagation of a one-hot encoded input nucleotide sequence through the dual branch CNN and bi-directional GRU RNN structure, resulting in a final activity prediction. This diagram depicts an input sequence length of 37 nucleotides used by crisprHALTev.Hyper-parameter adjustments were made in order to better utilize the larger training sets—the original model was designed for use with small dataset fine-tuning. As such, we reduced the learning rate to 0.0005 and increased the batch size to 1,024. Unchanged hyper-parameters include using the “Adam” optimizer with a mean squared error loss function (Kingma & Ba, 2017). For each final presented model, performance is reported following training on the entire training set using hyper-parameters determined by 5-fold cross validation (5CV) using the respective training set. These include the previously mentioned hyper-parameters in addition to the average control-condition read count minima(cutoff), upstream and downstream target site adjacent nucleotide inclusion to the model input, and total training epochs. Each final model architecture and parameter count is available in Table S1.

Prior models

To benchmark our TevSpCas9, eSpCas9, and wild-type SpCas9 models—crisprHALTev, crisprHALeSp, and crisprHALWT—we use six existing models from three sources (Table 3). These models are: the eSpCas9 and wild-type SpCas9 models from Guo et al. (2018), GuoeSp and GuoWT; the eSpCas9 and wild-type SpCas9 models from Wang & Zhang (2019) named here as DeepSgRNAeSp and DeepSgRNAWT; and the crisprHAL transfer learning-based TevSpCas9 and wild-type SpCas9 models from Ham et al. (2023), crisprHALTL-Tev and crisprHALTL-WT. All models are publicly available: crisprHAL (https://github.com/tbrowne5/A-generalizable-Cas9-sgRNA-prediction-model-using-machine-transfer-learning), DeepSgRNA (https://github.com/biomedBit/DeepSgrnaBacteria), and Guo (https://github.com/zhangchonglab/sgRNA-cleavage-activity-prediction). Models have been installed and tested with appropriate configurations as laid out in their respective installation guides. For prior models which do not report specific performance metrics for a held-out test set, when relevant, we compare model performance against their reported 5-fold cross validation metrics.

| Model name | Training data | Model design | Activity scoring | Training CV set | Five-fold CV Spearman | Held-out test Set | Test set Spearman |

|---|---|---|---|---|---|---|---|

| crisprHALTev | C. rodentium TevSpCas9 | CNN-RNN Hybrid | Log2FC | 20,195 | 0.754 | 5,049 | 0.746 |

| crisprHALeSp | E. coli eSpCas9 | CNN-RNN Hybrid | Log2FC | 47,591 | 0.783 | 11,898 | 0.782 |

| crisprHALWT | E. coli SpCas9 | CNN-RNN Hybrid | Log2FC | 26,795 | 0.697 | 6,700 | 0.695 |

| GuoeSp | E. coli eSpCas9* | GBR & Bio-feature | Z-Score | 36,056 | 0.682 | 9,014 | 0.68 |

| GuoWT | E. coli SpCas9* | GBR & Bio-feature | Z-Score | 35,330 | 0.542 | 8,833 | 0.54 |

| DeepSgRNAeSp | E. coli eSpCas9* | CNN & Bio-feature | Z-Score | 45,070 | 0.711 | – | – |

| DeepSgRNAWT | E. coli SpCas9* | CNN & Bio-feature | Z-Score | 44,163 | 0.582 | – | – |

| crisprHALTL-Tev |

E. coli eSpCas9a pTox TevSpCas9a |

CNN-RNN Hybrid & Transfer Learning | Log2FC Log2FC |

45,010 279 |

– 0.630 |

– – |

– – |

| crisprHALTL-WT |

E. coli eSpCas9a pTox SpCas9a |

CNN-RNN Hybrid & Transfer Learning | Log2FC Log2FC |

45,010 303 |

– 0.627 |

– – |

– – |

Notes:

Model performance metrics

To judge model performance we use the Spearman rank correlation coefficient (ρ) and Pearson correlation coefficient (r); referred to as Spearman correlation and Pearson correlation for simplicity. We report both these metrics to highlight the properties of the predictions since these metrics are concordant in Normally distributed data that is linearly dependent. Across most sgRNA/Cas9 activity prediction models Spearman correlation has been the primary, or only, metric reported, and therefore, this is the main metric we use to benchmark the models. We use the ‘spearmanr’ and ‘pearsonr’ functions from the package SciPy (Virtanen et al., 2020).

Results

We developed new models for predicting sgRNA activity with SpCas9 trained on depletion datasets by incorporating a different scoring metric, by optimizing the minimum read count used in each training set, and by including substantially more information from the sequence flanking the sgRNA binding site. We present first the performance of the optimized model in the first section of the results, then show how the individual optimizations improve model performance in the later results sections. In the final results section, we show how these models perform to predict activity in an enrichment experiment which is an independent type of sgRNA assay.

An improved bacterial SpCas9 activity prediction model

For the development of an updated and improved SpCas9 prediction model, we use the hybrid convolutional and recurrent neural network-based crisprHAL architecture from Ham et al. (2023) as a framework. For clarity, we denote the new model generated with the C. rodentium dual nuclease-derived TevSpCas9 dataset as crisprHALTev. The C. rodentium TevSpCas9 dataset comprises 25,244 sgRNA target-site sequences and their corresponding on-target activity scores from a depletion dataset (Methods) that uses the non-parametric log2FC scoring metric derived from the ALDEx2 tool. Here we used a minimum control read count of 56 (Table 2) derived as in the next section, and included 378 nt input sequence for training, derived as described in the third results section. This dataset was split into an 80% training set (n = 20,195) and 20% held-out testing set (n = 5,049), with additional checks performed to ensure no sequence overlap (Fig. S1). The training set was used in model performance optimization and final model generation (Table 3). Hyper-parameters for training epochs, input sequence length, and score cutoff were determined by 5-fold cross validation (5CV).

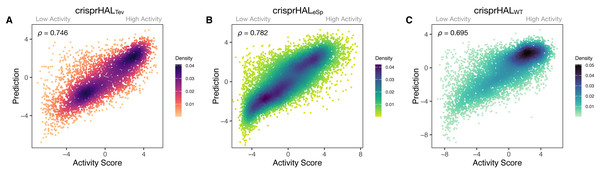

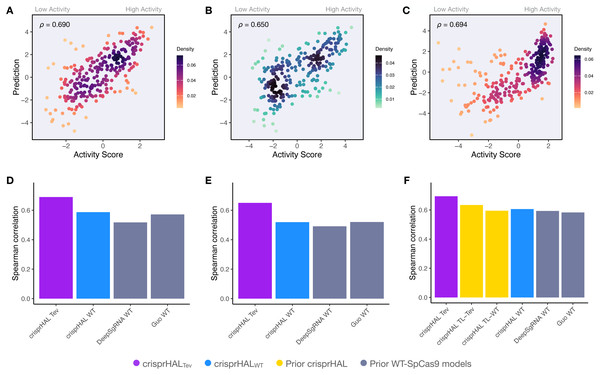

Following tuning and cross-validation, we observed the optimal mean 5CV performance of 0.754 Spearman correlation and 0.751 Pearson correlation (Fig. S2). We noted that this new model showed similar performance in the held-out 20% test set, with a 0.746 Spearman correlation and 0.744 Pearson correlation (Fig. 3A).

Figure 3: Predictions versus actual log2FC activity scores for each model’s respective 20% held-out test set.

(A) crisprHALTev on the C. rodentium TevSpCas9 testing set of n = 5,049, (B) crisprHALeSp on the E. coli eSpCas9 testing set of n = 11,898, and (C) crisprHALWT on the E. coli SpCas9 testing set of n = 6,700.Curation of prior eSpCas9 and SpCas9 data for better models

We now show how the strategy used for the optimized crisprHALTev model is generally useful when using datasets obtained from the literature. To provide maximal opportunity for our model to learn sequence features associated with eSpCas9 activity, we chose to regenerate the dataset without the sgRNA inclusion criteria present in the existing eSpCas9 dataset from Guo et al. (2018). From an initial pool of 64,021 sgRNAs, using a minimum average control-condition read count criteria of 31 (Table 2, and described in later sections), we obtain a final eSpCas9 dataset of 59,489 sgRNAs and corresponding scores—14,419 more than the original version of the dataset from Guo et al. (2018). The sgRNAs not present in the original eSpCas9 dataset are distributed across a similar range of scores to the pre-existing sgRNAs, however, do cluster more towards lower activity (Fig. S3). This expanded dataset was then split into 80% training (n = 47,591) and 20% testing (n = 11,898) sets, with the training set used for all subsequent eSpCas9 model five-fold cross validation testing (Table 3).

Our eSpCas9 model, crisprHALeSp, shows improved performance versus the existing eSpCas9 models with a Spearman correlation of 0.782 and a Pearson correlation of 0.780 (Fig. 3B) in the held-out data. This performance is obtained following 62 epochs as determined through five-fold cross validation. The best of the existing eSpCas9 models, DeepSgRNAeSp, reports a mean five-fold cross validation Spearman correlation of 0.715, which is substantially lower than the value the new model shows with held-out data. The other eSpCas9 model, from Guo et al. (2018), obtains a Spearman correlation of 0.682 on its held-out 20% test set. To highlight the importance of the data, using the original E. coli eSpCas9 dataset, Guo et al. (2018) with the 43nt input used by DeepSgRNA (Wang & Zhang, 2019)—we obtain a Spearman correlation of 0.717 and a Pearson correlation of 0.716 on a held-out 20% test set (n = 9,015).

We also reconstructed a new dataset from the E. coli SpCas9 data from Guo et al. (2018) to support the proposed data curation methods discussed. Using an average control-condition read count cutoff of 73 (Table 2, and later sections), we obtain 33,495 sgRNAs and scores from an initial pool of 61,002—a reduction of 10,668 sgRNAs from original version of the dataset from Guo et al. (2018). Following this curation, the E. coli SpCas9 dataset was split into an 80% training (n = 26,795) and 20% testing (n = 6,700) sets (Table 3).

After training, the E. coli SpCas9 model, crisprHALWT, shows a Spearman correlation and Pearson correlation of 0.695 and 0.720 on the held-out test set (Fig. 3C). The GuoWT and DeepSgRNAWT models, constructed on the prior E. coli SpCas9 data, obtain 0.542 and 0.582 Spearman correlation—a difference of 0.153 and 0.113 respectively. To provide a direct comparison, akin to the eSpCas9 test, training the crisprHAL model using the original E. coli SpCas9 dataset results in a peak performance of 0.582 Spearman correlation and 0.611 Pearson correlation on their respective held-out 20% test set (n = 8,833). To emphasize the importance of curation, the original model obtains substantially lower performance when using the full-size dataset despite training on 33% more data than does the new model trained on the curated dataset.

Limiting dynamic range inhibits performance

In this section we show the importance of choosing an appropriate minimum read count cutoff for each dataset that maximizes the performance of the model trained on that curated dataset. Model training is reliant upon the accuracy of the data itself, both the input feature and label; i.e., the target site sequence and the activity score. The log2FC scores are reliant upon sufficient read count abundance in the control condition to facilitate a robust dynamic range in scores for model use. When we examine the entire set of C. rodentium TevSpCas9 sgRNA activity scores versus their control-condition read counts we note a substantial score reduction associated with low control-condition read counts (Fig. 4A). Since these data are generated using a depletion assay, low control-condition read counts are expected to reduce the range of scores obtainable for highly active sgRNA sites; for example, a control read count of 1 allows only a read count of 0 to result in a positive score if an absolute value-based difference between calculation is used. This reduction can be seen across both the E. coli eSpCas9 and SpCas9 activity scores as well (Figs. 4B–4C). Given the potential for these low quality scores to impact model performance, we generated subsets of the C. rodentium TevSpCas9 dataset by incrementally increasing the control-condition read count minimum (Fig. 1A). Comparing a control read count of 1 to the previously used minimum of 20 (Guo et al., 2018), we see a substantial improvement; a 5CV mean Spearman correlation increase of 0.036 (Fig. 4E). As we increase this minimum count cutoff we see a steady increase in performance, peaking at a Spearman correlation of 0.754 with a read count cutoff of 56, shown in purple in (Fig. 4E). This cutoff removes 16.2% of the data; a loss of 4,894 sgRNAs (Fig. 4D). Examining how this cutoff affects the overall distribution of log2FC scores, we note only a minor reduction in the number of low activity scores (Fig. S4). Given the implication that including these guides inhibits model training, we next examined the error between log2FC scores and the predictions from crisprHALTev for all sgRNAs eliminated by the 56 cutoff, separated by control read count (Fig. 5). As expected, we note a high mean absolute error for between-model predictions and sgRNAs with low control-condition read counts. When error is separated by positive and negative predictions, the loss of predictive utility is observed to occur in the positive predictions. This is unsurprising, as low control-condition read counts reduce the precision of the readout for potentially active on-target sites. Above control read counts of 10-to-20 the error rate is substantially reduced and slowly decreases, in line with the model performance versus control cutoff results.

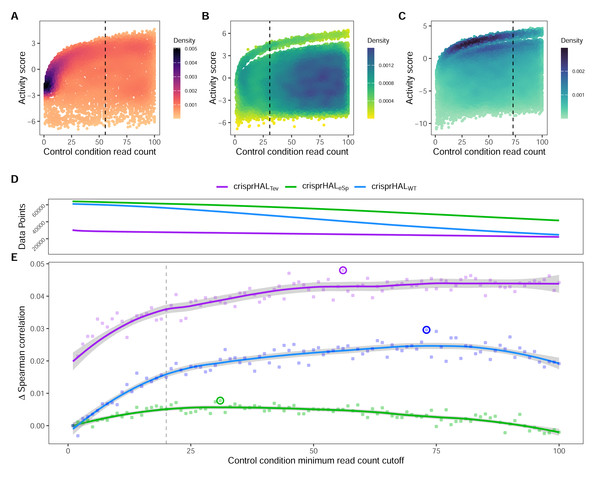

Figure 4: Control-condition read counts and cutoff impacts on dataset size and model performance.

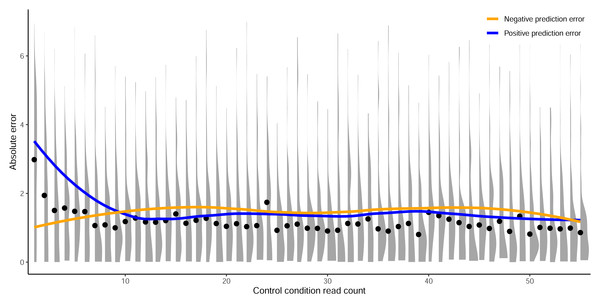

The log2FC activity scores versus average control-condition read counts from 1-to-100 for the (A) C. rodentium TevSpCas9, (B) E. coli eSpCas9, and (C) E. coli SpCas9 datasets, each with their respective final model minimum read count cutoff.(D) Total data points in the C. rodentium TevSpCas9 (purple), E. coli eSpCas9 (green), and E. coli SpCas9 (blue) datasets at each control condition minimum read count. (E) Change in mean 5CV Spearman correlation values across increasing minimum control-condition read counts for the C. rodentium TevSpCas9 model (purple), the E. coli eSpCas9 model (green), and the E. coli SpCas9 model(blue) versus a cutoff value of 1. The best performing cutoff value for each dataset and subsequent final model is circled. A previously used cutoff value of 20 used is indicated with the dashed line (Guo et al., 2018).Figure 5: Prediction error by control read count cutoff.

Absolute error between predictions from crisprHALTev and the C. rodentium TevSpCas9 data removed by the optimal identified minimum control-condition read count cutoff of 56. Means (black dots) and densities of absolute error between predictions and log2FC scores are separated by control-condition read count. Trend lines show mean absolute error between positive (blue) and negative (orange) value predictions versus log2FC scores.When we examine the impact of optimizing the control-condition read count cutoff on the E. coli eSpCas9 dataset we again see an increase in model performance (green line in the figure), though not as substantial as seen in the C. rodentium TevSpCas9 example (purple). Model performance improves consistently as low cutoff values increase, peaking at an average control-condition read count cutoff of 31 reads across the two control replicates. This cutoff negligibly alters the distribution of log2FC scores compared to a cutoff of 1 (Fig. S4). The use of a read count minimum is likely less impactful to E. coli eSpCas9 model performance due to the higher read counts across the dataset, with comparatively few sgRNAs with low control read counts. Only 4.37% of sgRNAs in the eSpCas9 set have a mean control read count of less than 20 compared to 7.71% and 8.30% in the SpCas9 and TevSpCas9 sets, respectively. It would follow that datasets containing more sgRNAs with low quality activity scores will see a larger performance benefit from their removal.

When this process is applied to the E. coli SpCas9 dataset, we again observe a similar trend, with model performance improving until a minimum average control read count of 73 is reached. At this cutoff the 5CV average Spearman correlation is 0.697, an improvement of 0.029 over a cutoff of 1, and an improvement of 0.014 over using a cutoff of 20 (Fig. 4E). Using this cutoff results in minimal alteration to the distribution of log2FC scores, with a minor reduction in the already over-represented higher activity scores (Fig. S4). The performance boost using a cutoff of 73 is notable as 45.1% of the total data is removed in this example, reducing the dataset size from 61,002 to 33,495 sgRNAs. The loss of a large fraction of the dataset is unsurprising given that this dataset has the lowest read count coverage across all tested datasets. Thus, we conclude that removing lower quality scores outweighs the benefit of retaining more training data across multiple datasets.

Adjacent nucleotides contain on-target activity information

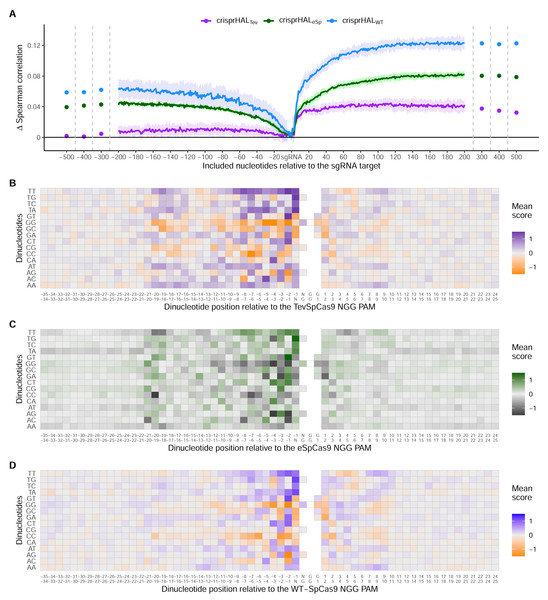

We next examined the effect on predictive accuracy as a function of the model input sequence length. Prior work has noted the on-target Cas9 activity information contained in the nucleotides adjacent to the sgRNA target site, especially in the nucleotides immediately downstream of the PAM (Wang & Zhang, 2019; Ham et al., 2023). To test the performance benefit of including additional nucleotides upstream and downstream of the sgRNA target site, we train our TevSpCas9, eSpCas9, and SpCas9 models with input sequences of increasing length. We started from just the sgRNA and increased the sequence length incrementally one nucleotide at a time either upstream or downstream, but not both, with performance measured by average five-fold cross validation Spearman correlation (Fig. 1B).

Across all three models, the most substantial boost to performance was obtained when the nucleotides immediately downstream of the PAM were included in the input sequence (Fig. 6A). For crisprHALTev this region extends 11 nucleotides downstream of the PAM, before the performance benefit of adding more nucleotides to the input sequence begins to level off. This trend can be seen when examining average log2FC activity scores for each di-nucleotide position within and surrounding the sgRNA target, where most information is located between the sgRNA, PAM, and 11 nucleotides downstream (Figs. 6B–6D). Analysis of more distant positional preferences for TevSpCas9 reveals little information, in line with model performance (Fig. S5). We note that the E. coli SpCas9 model obtains the greatest performance boost from this downstream nucleotide inclusion, with a 5CV average increase of +0.07 Spearman correlation units when including the PAM plus 10 nucleotides downstream compared to just using the sgRNA (Fig. 6A). Though the C. rodentium TevSpCas9 and E. coli eSpCas9 models do also see a performance benefit, more information appears to be contained within the sgRNA target site itself, especially in the PAM-distal region.

Figure 6: Target site and adjacent nucleotide on-target Cas9 activity information.

(A) Model performance when upstream or downstream nucleotides are included alongside the sgRNA sequence as the model input. The change in model performance is reported as five-fold cross validation mean Spearman correlation compared to a model input of just the sgRNA for C. rodentium TevSpCas9 and crisprHALTev (purple), E. coli eSpCas9 and crisprHALeSp (green), and E. coli SpCas9 and crisprHALWT (blue). Mean log2FC score for each di-nucleotide option relative to the PAM for the (B) C. rodentium TevSpCas9, (C) E. coli eSpCas9, and (D) E. coli SpCas9 datasets.Examining upstream nucleotides, there is a marginal performance benefit for crisprHALTev when including three nucleotides, with a potential case to be made for including more. However, upon examination of the positional di-nucleotide mean scores across the C. rodentium TevSpCas9 and E. coli SpCas9 datasets, little activity information appears to be contained in this region (Figs. 6B/6D). By contrast, we notice a trend within the E. coli eSpCas9 dataset which is present both upstream of the sgRNA target and beyond 10 nucleotides downstream of the PAM: A-T rich regions are disfavoured (Fig. 6C). Extending the di-nucleotide mean score plots 100 nucleotides upstream and downstream of the sgRNA-PAM site, this trend is maintained (Fig. S6). Though A-T rich adjacent regions are disfavoured, target sites with T rich PAM-proximal regions are very strongly favoured. This PAM-proximal T-preference is present across all three nucleases. There is also a G-preference for the N-position of the NGG PAM, in line with prior work suggesting local sliding of SpCas9 across adjacent PAM motifs (Corsi et al., 2022).

An interesting finding is the use of very long sequence length inputs for E. coli SpCas9 model performance. Appending 189 nucleotides upstream to the sgRNA target model input increases the mean 5CV Spearman correlation by 0.065, while appending the PAM plus 166 nucleotides downstream increases performance by 0.125 (Fig. 6A). Combined, a model built with a 378 nucleotide input sequence comprising 189 upstream, 20 sgRNA target, three PAM, and 166 downstream nucleotides obtains a mean 5CV Spearman correlation of 0.697, 0.143 over the performance seen when using an input of just the sgRNA-target. The sgRNA-target only model attains a mean 5CV Spearman correlation of 0.554. This finding is also repeated with the E. coli eSpCas9 model, with a moderate boost to performance seen when including 193 upstream and the PAM plus 190 downstream nucleotides—a total sequence length of 406. However, for eSpCas9, this can be more easily understood through the disfavouring of A-T rich regions, an attribute not seeming to be present for SpCas9 (Fig. S7). Examining the positional di-nucleotide mean scores for these adjacent regions in the E. coli SpCas9 dataset identifies limited discernible information compared to the sequence length optimization testing. However, if we instead examine sequence length-based performance improvements per added nucleotide, rather than cumulatively, results are similar to the di-nucleotide analyses in terms of signal. Examining per nucleotide position performance improvement—using the crisprHALWT model and final input sequence length versus just the sgRNA target site input—we obtain a change in mean Spearman correlation of +0.00034 per upstream and +0.00074 per downstream nucleotide added. While together the longer input sequences improve crisprHALWT model performance, as well as crisprHALeSp model performance, the per-position impact is minimal.

SpCas9 model predictions and methodology generalize

Since the training sets were all generated using depletion assays, we explored if the data-driven performance improvements extended to datasets generated using a different methodology. We benchmarked crisprHALTev against existing machine learning (ML) models using three independent enrichment datasets: E. coli pTox plasmid TevSpCas9 (n = 260), pTox SpCas9 (n = 255), and KatG TevSpCas9 (n = 268) (Table 2). Across the three testing sets, using both Spearman and Pearson correlation metrics, crisprHALTev achieves better performance than all six prior models tested—the original crisprHAL models, crisprHALTev and crisprHALWT, GuoWT, and DeepSgRNAWT Cas9 models (Fig. 7). Specifically, the performance metrics for crisprHALTev are: pTox TevSpCas9 0.690 Spearman correlation and 0.693 Pearson correlation, pTox SpCas9 0.650 Spearman correlation and 0.659 Pearson correlation, and KatG TevSpCas9 0.694 Spearman correlation and 0.615 Pearson correlation (Figs. 7A–7C). Since the original crisprHAL models are constructed with the pTox TevSpCas9 and pTox SpCas9 datasets, only their performance on the KatG dataset is used for comparison. Performance from crisprHALeSp on the test sets is reported in Fig. S8 due to differences in enzyme preferences. Since SpCas9 and TevSpCas9 do not appear to share the characteristic of eSpCas9 that disfavours AT-rich adjacent regions, the inclusion of such regions in crisprHALeSp reduces performance on the non-eSpCas9 datasets. When shorter input sequences from other models are used, that exclude the disfavoured AT-rich regions, crisprHALeSp performance on the TevSpCas9 and SpCas9 datasets improves (Fig. S8). We note that although the crisprHALTev model is trained on a dataset which tests the TevSpCas9 nuclease, it still outperforms the models constructed on SpCas9 data on the pTox SpCas9 dataset, supporting the use of a TevSpCas9 data based model for SpCas9 prediction tasks.

Figure 7: Benchmarking of the crisprHALTev and crisprHALWT models.

Predictions from crisprHALTev trained on C. rodentium TevSpCas9 versus the log2FC activity scores for (A) the E. coli pTox plasmid TevSpCas9 activity set (n = 260), (B) the E. coli pTox plasmid SpCas9 activity set (n = 255), and (C) the S. enterica KatG target in E. coli TevSpCas9 activity set (n = 268). Model performance comparisons of crisprHALTev (purple) and crisprHALWT (blue), the original crisprHALTL-Tev and crisprHALTL-WT models (gold), and the prior models, DeepSgRNAWT and GuoWT (grey) on (D) the E. coli pTox plasmid TevSpCas9 activity set, (E) the E. coli pTox plasmid SpCas9 activity set, and (F) the S. enterica KatG target in E. coli TevSpCas9 activity set.A secondary result is the generalization of performance seen from crisprHALWT compared to both prior models constructed on the original E. coli SpCas9 data (Figs. 7D–7F). Performance on the three testing sets exceeds both prior models, with the exception of pTox SpCas9, where performance is similar to the GuoWT model. Using Pearson correlation as test set performance metric, similar results are attained (Fig. S9). We note that this performance is obtained using the ultra long 378 nucleotide model inputs.

Finally, given the data-centric nature of this approach, we tested if the performance improvements identified were specific to the crisprHAL architecture. To perform this, we retrained the five-layer CNN architecture from the DeepSgRNA model using the three training datasets. Across both Spearman and Pearson correlation metrics, mean 5CV performance corresponded with the results obtained when using the crisprHAL architecture (Fig. S10). Specifically, the input sequence lengths of 37 for C. rodentium TevSpCas9, 406 for E. coli eSpCas9, and 378 for E. coli WT-SpCas9 obtained improved performance compared input sequence lengths of 43, 30, and 28 used by prior models, and the baseline 20nt sgRNA target site only input (Wang & Zhang, 2019; Guo et al., 2018; Ham et al., 2023). Using the final input sequence lengths with the five-layer CNN model resulted in mean 5CV Spearman correlations improvements of 0.036 (TevSpCas9), 0.102 (eSpCas9), and 0.160 (WT-SpCas9), compared to the baseline 20nt input. Performance also benefited from using the higher control-condition read count cutoff data, compared to the previously used cutoff value of 20 and baseline cutoff of 1 (Guo et al., 2018; Wang & Zhang, 2019). Compared to a cutoff of 1, 5CV Spearman correlations increased by 0.052(TevSpCas9), 0.008 (eSpCas9), and 0.023 (WT-SpCas9).

Discussion

In this paper we show the importance of data quality by examining the input features (target sites) and labels (activity scores) and develop accurate models for bacterial SpCas9 and eSpCas9 activity prediction. While a great deal of work has been spent on optimizing model architectures for CRISPR-Cas9 activity prediction, the model itself is only one part of the equation. Our work shows that a focus on curating the data from which the models are trained is as impactful on model performance as is developing novel ML approaches for this task.

The performance of a predictive model is constrained by its training data, and here we show that the accuracy of that data is as important as the size of the training set since smaller curated datasets can produce more accurate models than does the entire uncurated dataset. Inaccuracies with the feature (input) or label (score) can easily lead to sizable reductions in performance, and so data curation must be performed. Curation discards a small portion of a dataset due to low quality and ensures that the data are useful and accurate. However, as ML relies on large datasets, it is not obvious that setting a cutoff that eliminates nearly half of the data points, as done with the E. coli SpCas9 data, would be expected to improve predictions. All three final models presented use minimum control read count cutoff values higher than previously used, with the SpCas9 and TevSpCas9 datasets seeing optimal performance in spite of the removal of substantial amounts of training data. Thus, increasing data quality outweighs reducing dataset size even when that reduction in data size is substantial. We found that the need for a cutoff arises from sparse control-condition read counts that are found in depletion experiments. Very low read counts do not provide an accurate estimate of the initial feature abundance, and so reduce the dynamic range of activity scores and their accuracy (Rapaport et al., 2013; Fernandes et al., 2013; Love, Huber & Anders, 2014). Alongside methods like the control read count cutoff discussed, other strategies to mitigate this issue include increasing sequencing depth to directly improve read count abundance, and using more control replicates to better represent the measurement variance. However, it is important to make note of the differences in optimal cutoff values across the three tested datasets. As such, it is apparent that such cutoff values are specific to the dataset and cannot be applied universally. Future use of such a method may involve a replication of the presented cutoff testing, as curation has a broad optimum. If not possible, a cutoff of 20 may be sufficient for reasonable confidence in the quality of resulting activity scores. In addition, we clarify that this methodology is specific to sequencing-based bacterial sgRNA/Cas9 activity assays. The killing efficiency assay design used for these bacterial datasets is distinct from the editing efficiency assays used in eukaryotic sgRNA/Cas9 activity measurement. It would be inappropriate to suggest this method be applied without modification to data outside of its intended domain.

Akin to score curation, little emphasis has been placed on optimizing model inputs for the inclusion of relevant target-site adjacent nucleotides. Some approaches across the landscape have been quite conservative, with the inclusion of only the sgRNA and sometimes the NGG PAM, missing out on substantial relevant information. The sgRNA target site, and more specifically the PAM-proximal side, does contain the most relevant information for on-target activity. This is in line with optimal model performance found by Ham et al. (2023) during their input sequence length optimization, as well as prior biological findings of downstream Cas9-DNA interactions (Zhang et al., 2019; Yang et al., 2021). Across all three depletion datasets for TevSpCas9, eSpCas9, and SpCas9, we note substantial on-target information contained in the region encompassing the PAM and up to 11 nucleotides downstream. Of note is that nearly identical positional and di-nucleotide preferences can be seen across the three nucleases. Moving upstream from the sgRNA, limited arguments can be made for the importance of the 1-to-3 adjacent nucleotides. However, what is clear from the E. coli eSpCas9 data is a distinct disfavouring of AT rich target site adjacent regions, a preference extending beyond 100 nucleotides both upstream and downstream of the sgRNA—excluding the PAM and directly adjacent downstream nucleotides. These unique preferences, distinct from WT-SpCas9 and TevSpCas9, may be the result of the mutations made to create eSpCas9 (Slaymaker et al., 2016). These mutations, designed to reduce off-target cleavage, weaken nonspecific DNA interactions and result in a greater sensitivity to sgRNA-DNA mismatches (Wang et al., 2019). Due to this, eSpCas9 also has a reduced editing efficiency, with Guo et al. (2018) suggesting sensitivity to interference by bacterial transcriptional machinery. When combined, such factors may explain the unique target site adjacent nucleotide preferences.

Less obvious sequence preferences exist in the E. coli SpCas9 dataset; however, no such notable trend occurs for the C. rodentium TevSpCas9 dataset. Given the overlap in sgRNA target sites between both E. coli datasets, it is unclear if the trend is due to enzymatic preferences, is species/strain specific, or if it is an artifact of sgRNA target site selection itself. What is clear is the improvement to both held-out and independent test set performance when the E. coli SpCas9 model uses long input sequences containing nearly 200 nucleotides upstream and downstream of the target site. Were this improvement only present within a single dataset, it would be easy to dismiss the improvement as being related to some aspect of assay design or another dataset characteristic. The long input sequences appear to provide relevant on-target activity information. These adjacent nucleotides may affect Cas9 activity through multiple avenues including impacting DNA geometry as well as Cas9-DNA search and interrogation (Farasat & Salis, 2016; Globyte et al., 2019; Cofsky et al., 2022; Vora, Bhandari & Sundar, 2024). However, these potential mechanisms should not be construed as strong evidence supporting the use of such long input sequences. Given the minimal performance improvement per single added nucleotide, identifiable positional information is limited. At present, the adjacent nucleotide activity information identified should be best viewed as a direction for future exploration. If new bacterial SpCas9 and eSpCas9 activity datasets are generated, especially in more distantly related bacterial species, it would be pertinent to re-perform the adjacent nucleotide analyses. Based upon this, given the combination of improved performance and the use of a shorter 37nt input sequence, the crisprHALTev model is recommended for use over the crisprHALWT model for both TevSpCas9 and wild-type SpCas9 bacterial prediction tasks.

When optimizations to input sequence length and score are applied, we obtain excellent performance on the three independent datasets from both the crisprHALTev and crisprHALWT models. Though crisprHALTev performs best for SpCas9 and TevSpCas9 activity prediction, and is the model recommended for such tasks, crisprHALWT is arguably more informative. Unlike the E. coli eSpCas9 dataset, when curation is performed for the SpCas9 dataset the amount of data remaining is reduced to a point lower than the original E. coli SpCas9 dataset. It is this reduction that makes performance on the held-out test set and, more importantly, the three independent test sets, so important. The E. coli SpCas9 model performs better, and more consistently, than the prior models on each test in spite of having less training data. Though crisprHALeSp does also boast improved performance compared to models trained on the original E. coli eSpCas9 data, the lack of independent testing data and the increased amount of training data limits strong statements about data curation-based performance improvement. However, what is notable is that when training crisprHAL with either original E. coli dataset, we obtain performance barely exceeding that of DeepSgRNAeSp. Additionally, when using the five-layer CNN architecture from DeepSgRNA (Wang & Zhang, 2019), we obtain improved performance using the curated data. Though the crisprHAL architecture performs well for transfer learning applications, as it was designed to do, in this case it is not the architecture that is responsible for the performance improvements; it is the data.

Here we present crisprHALTev, a deep learning model designed for bacterial sgRNA/SpCas9 prediction tasks with performance that exceeds that of existing models across all tested datasets. This performance was obtained through focusing on the quality of the model inputs and scores rather than through the common method of a novel architecture or other machine learning methodology. To support this data-centred approach, we revisited two prior datasets measuring eSpCas9 and SpCas9 activity in E. coli, remaking the data, and generating subsequent models with improved performance. During analysis of the model inputs we noted a trend of identical downstream nucleotide on-target activity information which suggests that the nucleotides directly downstream of the PAM have a notable impact on activity, with more distant upstream and downstream nucleotides also seeming to impact activity. Using these methods, we present models which provide accurate TevSpCas9, SpCas9, and eSpCas9 predictions and demonstrate the importance of data curation to improve model performance.

Conclusion

A great deal of work has been spent on the optimization of model architectures for CRISPR-Cas9 activity prediction; however, the model itself is only one part of the equation. Across the sgRNA/Cas9 prediction field, numerous papers have been published which present novel machine learning approaches and updates to improve activity prediction. While these papers succeed at providing performance improvements, relatively little focus has been spent on cleaning and optimizing the data from which the models are trained. Here we show the importance of examining the target site inputs and activity score labels through the development of accurate bacterial sgRNA/Cas9 activity prediction models.